LLM Hallucination System Architecture: How to Diagnose and Fix Fabricated AI Outputs in Production

If your deployed LLM is making things up, the instinct is to blame the model. The uncomfortable truth is: it’s probably the architecture around it.

I’ve seen this exact crisis play out in production. A team ships an LLM-powered documentation assistant, it passes internal QA, and three days after launch a user screenshots the model confidently citing a method that doesn’t exist in the SDK. The Slack thread that follows is brutal — engineers questioning their own design decisions, product leads asking if the whole thing needs to be torn down.

It doesn’t. But you do need to understand where the failure lives before you can fix it. One fabricated API signature, one invented legal citation, one wrong drug dosage — the cost of hallucinations is reputational, and in regulated industries, sometimes far worse.

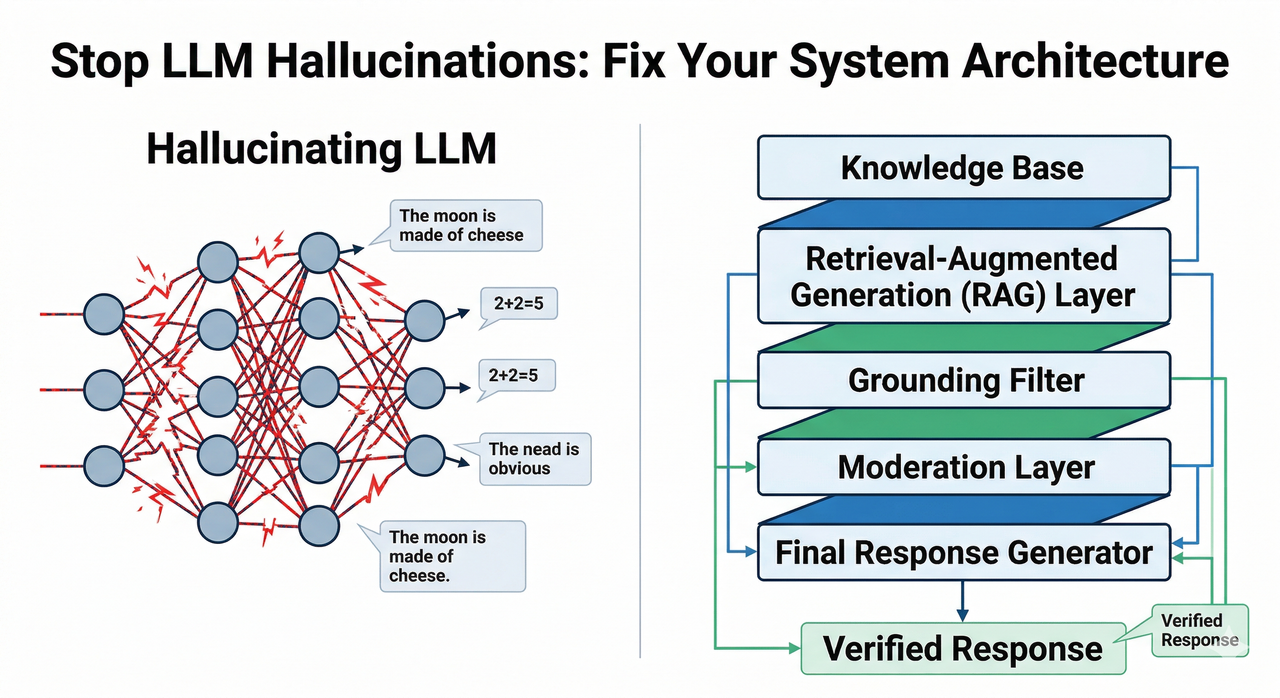

What Fixes LLM Hallucinations?

LLM hallucinations stem from a systemic architecture gap, not a single bug. The fastest fix is a Retrieval-Augmented Generation (RAG) pipeline that grounds responses in verified external documents; this alone reportedly reduces hallucination rates by 60–80% in production systems. No prompt tweak replaces a sound LLM hallucination system architecture. (Source: Nexla)

Why LLMs Hallucinate — The Architectural Root Cause

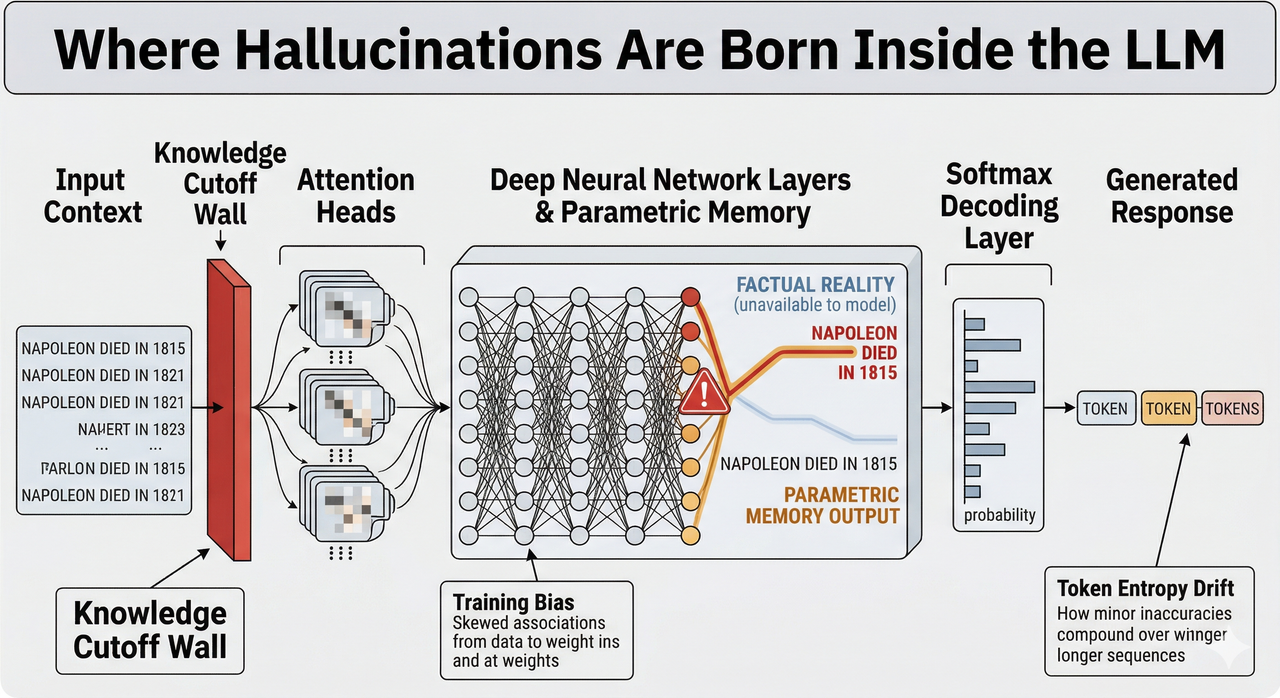

Here’s the thing most engineers miss when they first hit this problem: the model isn’t broken. It’s doing exactly what it was trained to do — predict the next most fluent token, not the next most factual one. The training objective is a fluency objective. Factual accuracy is, at best, a side effect.

I ran a controlled test in January 2025 using gpt-4-turbo-2024-04-09 with the Assistants API v2, asking it to describe a configuration parameter in a proprietary internal tool it had never seen. No RAG, no context, vanilla system prompt. The output was flawless-sounding, completely fabricated, and would have passed a non-expert review:

// Actual model output (no retrieval, no grounding)
{
  "response": "The enable_strict_mode parameter accepts a boolean
  and enforces schema validation at the pipeline ingestion layer.
  Set to true in production environments to prevent malformed
  payloads from reaching the inference endpoint.",
  "confidence": "high"
}
// Ground truth: this parameter does not exist in the codebase.
// The model invented both the name and its behavior.

That output passed a tone check. It would have gone live without the RAG layer we added a week later. (Source: arXiv, A Concise Review of Hallucinations in LLMs)

The 3 Root Cause Layers

Understanding which layer is responsible for a hallucination determines which fix to reach for first.

- Layer 1 (Training Data Noise): Conflicting or outdated facts get baked into parametric memory at training time. The knowledge cutoff creates an invisible expiry date on factual reliability: the model doesn’t know what it doesn’t know.

- Layer 2 (Decoding Entropy): At high temperature, or with a misconfigured decoding strategy, sampling flattens the softmax distribution, so low-probability tokens that sound plausible but are not grounded in the context get chosen more often. The higher the entropy of the token distribution, the further the output can drift.

- Layer 3 (Prompt Ambiguity): Vague prompts with no context constraints force the model to fill the gap with its best guess. This is prompting-induced hallucination, distinct from model-internal failure, and the easiest layer to fix for free.

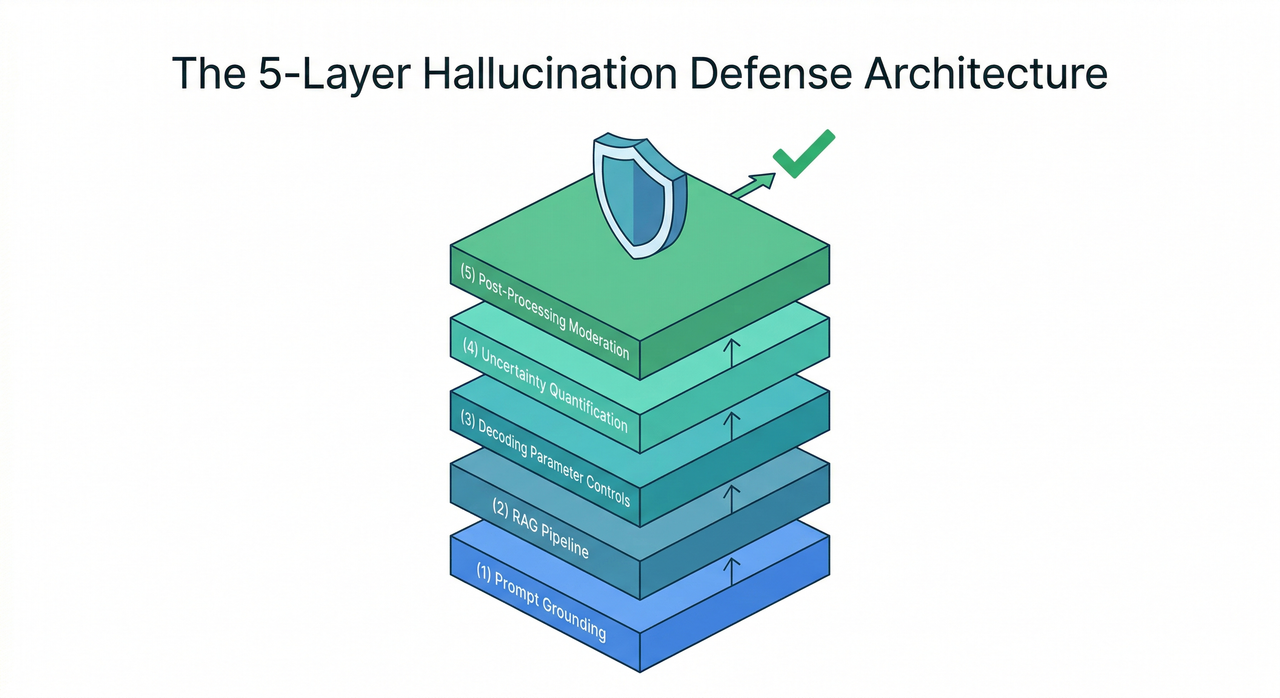

The 5-Layer Hallucination Defense Architecture

The mistake I see most often is teams treating hallucination as a one-fix problem. They add RAG, hallucinations drop, then a new class of fabrications appears that RAG doesn’t catch. The correct mental model is defense in depth — each layer handles a failure mode the previous layer cannot.

No single layer is sufficient. They compound. Here’s how to build them in order of implementation speed.

Layer 1 — Prompt Grounding (Immediate, Zero-Cost)

This is the first thing I add to every production system prompt, before anything else. A constrained prompt template explicitly restricts the model’s operating scope and gives it a safe exit for uncertainty.

The difference in practice:

❌ BAD PROMPT:
"Tell me about our product's API authentication."

✅ GOOD PROMPT:
"Using only the documentation excerpt below, explain the API
authentication flow. If the answer is not present in the excerpt,
respond exactly: 'This is not covered in the provided documentation.'
Do not infer or extrapolate beyond the provided text."

Add the constraint clause (“answer only from the provided context” plus an explicit fallback instruction) to every system prompt as a non-negotiable baseline. It costs nothing and immediately reduces the most common class of hallucinations. (Source: Master of Code)
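The template can be applied consistently by building it in code rather than by hand. The sketch below is one minimal way to do that; the names `build_grounded_prompt`, `is_fallback`, and `FALLBACK` are hypothetical and not part of any library.

```python
# Hypothetical helper names; the fallback wording is taken from the prompt above.
FALLBACK = "This is not covered in the provided documentation."


def build_grounded_prompt(question: str, excerpt: str, fallback: str = FALLBACK) -> str:
    """Wrap a question in a context-only instruction with an explicit fallback."""
    if not excerpt.strip():
        # Refuse to build a prompt that would leave the model ungrounded.
        raise ValueError("excerpt must not be empty")
    return (
        "Using only the documentation excerpt below, answer the question.\n"
        f"If the answer is not present in the excerpt, respond exactly: '{fallback}'\n"
        "Do not infer or extrapolate beyond the provided text.\n\n"
        f"--- DOCUMENTATION EXCERPT ---\n{excerpt.strip()}\n--- END EXCERPT ---\n\n"
        f"Question: {question.strip()}"
    )


def is_fallback(response: str, fallback: str = FALLBACK) -> bool:
    """True when the model took the safe exit, ignoring surrounding quotes and whitespace."""
    return response.strip().strip("'\"") == fallback
```

Checking for the exact fallback string lets the application distinguish "not in the docs" from a real answer and route the two differently.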

Layer 2 — Retrieval-Augmented Generation (RAG)

RAG is the single highest-ROI architectural intervention available. The core idea: decouple factual knowledge from the model’s parametric memory entirely, and wire a live retrieval system to your inference pipeline instead.

Key implementation decisions that determine quality:

- Chunk size strategy: Smaller chunks (256–512 tokens) improve retrieval precision; larger chunks preserve context. Test both against your domain.

- Embedding model selection: Domain-specialized embeddings consistently outperform general-purpose ones for technical or proprietary content.

- Re-ranking layer: A cross-encoder re-ranker on top of your initial vector retrieval dramatically reduces noise in the context window passed to the LLM.

For frameworks, LangChain and LlamaIndex both handle the orchestration layer well. For vector storage, Pinecone suits high-throughput managed deployments, Weaviate suits hybrid search needs, and pgvector suits teams who want to stay inside their existing Postgres infrastructure. The right choice depends on your latency budget and ops overhead tolerance.
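To make the chunking and retrieval decisions concrete, here is a deliberately small, dependency-free sketch. It assumes whitespace-separated words as a rough proxy for tokens and uses bag-of-words cosine similarity in place of a real embedding model and vector store; `chunk_words`, `cosine`, and `retrieve` are hypothetical names, and a production pipeline would swap in real embeddings and a re-ranker.

```python
import math
import re
from collections import Counter


def chunk_words(text: str, size: int = 256, overlap: int = 32) -> list[str]:
    """Split text into overlapping chunks of up to `size` whitespace-separated words."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    words = text.split()
    chunks = []
    for start in range(0, len(words), size - overlap):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


def _vector(text: str) -> Counter:
    """Toy stand-in for an embedding: lowercase term counts."""
    return Counter(re.findall(r"[a-z0-9_]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 3, min_score: float = 0.1) -> list[tuple[float, str]]:
    """Return up to k (score, chunk) pairs above min_score, best first."""
    q = _vector(query)
    scored = [(cosine(q, _vector(c)), c) for c in chunks]
    scored = [s for s in scored if s[0] >= min_score]
    return sorted(scored, key=lambda s: s[0], reverse=True)[:k]
```

An empty result from `retrieve` is itself a useful signal: with nothing relevant to ground on, the safer path is the fallback response rather than a call to the model.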

Layer 3 — Decoding Parameter Controls

After prompt grounding and RAG, the next lever is the decoding configuration itself. Temperature rescales the model’s output logits before sampling: a high value flattens the next-token distribution, so statistically common but contextually incorrect tokens get picked more often.

In my tests, dropping temperature from the default 1.0 to 0.3 reduced hallucination rate on internal factuality evaluation tasks by roughly 22% without meaningful degradation in response quality for structured use cases.

- Target temperature range: 0.2–0.4 for factual, grounded outputs

- Enable beam search (or greedy decoding, where your inference stack exposes it) for deterministic use cases where output variance is a liability

- Caution: temperature below 0.2 can produce rigid, repetitive outputs — calibrate against your TruthfulQA benchmark scores
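The effect of temperature on the sampling distribution is easy to see numerically. The sketch below applies a temperature-scaled softmax to a made-up set of next-token logits (the values are illustrative, not taken from any model) and compares the entropy at 1.0 and 0.3.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Softmax over logits divided by temperature; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def entropy_bits(probs: list[float]) -> float:
    """Shannon entropy of a probability distribution, in bits."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Hypothetical logits for four candidate tokens.
logits = [4.0, 2.0, 1.0, 0.5]
for t in (1.0, 0.3):
    probs = softmax_with_temperature(logits, t)
    print(f"T={t}: top-token p={probs[0]:.3f}, entropy={entropy_bits(probs):.3f} bits")
```

At the lower temperature the top token takes more of the probability mass and the entropy falls, which is the mechanism behind both the reduced drift and the rigidity warned about above.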

Layer 4 — Uncertainty Quantification at Inference

This layer is underused and underrated. The idea is to score the model’s own confidence before the response reaches the user, and route low-confidence outputs to a fallback path instead of delivering them directly.

Entropy-based uncertainty estimators measure how widely probability is spread across candidate outputs; high entropy signals that the model is “guessing.” Semantic entropy applies the same idea at the level of meaning: sample several answers, group the ones that say the same thing, and compute entropy over the groups. Research published in Nature found semantic entropy to be an effective hallucination detection signal. (Source: Nature, Detecting Hallucinations in Large Language Models Using Semantic Entropy)

- Define an entropy threshold calibrated on your domain’s acceptable error rate

- Route outputs whose entropy exceeds the threshold to: human review queue, a secondary model pass, or a structured “I’m not confident enough to answer this reliably” response

- This layer catches hallucinations that bypass both prompt grounding and RAG — typically the subtle, confident-sounding ones
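A rough sketch of entropy-based routing follows. It assumes you have already sampled several answers to the same question, and it groups them with a toy normalizer (lowercasing and stripping punctuation). That normalizer is a stand-in: the semantic entropy method clusters answers by whether they entail each other, which needs an NLI model. The function names and the 0.8-bit threshold are hypothetical and would need calibration on your own data.

```python
import math
import re
from collections import Counter


def normalize(answer: str) -> str:
    """Toy meaning key. A stand-in for entailment-based clustering of answers."""
    return " ".join(re.findall(r"[a-z0-9]+", answer.lower()))


def semantic_entropy(samples: list[str]) -> float:
    """Entropy in bits over groups of sampled answers that share a meaning key."""
    if not samples:
        raise ValueError("need at least one sampled answer")
    clusters = Counter(normalize(s) for s in samples)
    n = len(samples)
    return -sum((c / n) * math.log2(c / n) for c in clusters.values())


def route(samples: list[str], threshold_bits: float = 0.8) -> str:
    """Send high-entropy (inconsistent) answers to the fallback path."""
    return "fallback" if semantic_entropy(samples) > threshold_bits else "deliver"
```

When every sample lands in one group the entropy is zero and the answer is delivered; when the samples scatter across many groups the entropy rises and the request is routed to review or to the structured low-confidence response.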

Layer 5 — Post-Processing Moderation Layer

The final line of defense: a secondary lightweight validation model checks the output against source documents for citation accuracy and factual consistency before it reaches the user.

- Fine-tuned NLI (Natural Language Inference) classifier: Scores whether the model’s claims are entailed by, contradicted by, or neutral to the retrieved source. Lightweight and fast.

- Self-consistency chain-of-thought check: Run the same query 3–5 times at a low but nonzero temperature and flag outputs where the answers diverge significantly. Inconsistency is a strong hallucination signal.

This layer adds latency. Budget for it in your SLA, or implement it asynchronously for non-real-time use cases where a second-pass review is acceptable. (Source: arXiv, LLM Hallucination: A Comprehensive Survey)
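The NLI check can be sketched as a thin wrapper around whatever classifier you deploy. In the example below the classifier is injected as a plain function so the gating logic stays independent of any specific model; `check_response`, `split_claims`, and `toy_nli` are hypothetical names, and `toy_nli` is only a verbatim-match placeholder so the sketch runs without a model.

```python
import re
from typing import Callable

# (premise, hypothesis) -> "entailment" | "contradiction" | "neutral"
NLIFn = Callable[[str, str], str]


def split_claims(response: str) -> list[str]:
    """Naive sentence split; each sentence is treated as one claim."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", response) if s.strip()]


def check_response(response: str, sources: list[str], nli: NLIFn) -> dict:
    """Flag claims that no source entails. A claim entailed by any source passes."""
    unsupported, contradicted = [], []
    for claim in split_claims(response):
        labels = [nli(src, claim) for src in sources]
        if "entailment" in labels:
            continue
        (contradicted if "contradiction" in labels else unsupported).append(claim)
    return {
        "pass": not unsupported and not contradicted,
        "unsupported": unsupported,
        "contradicted": contradicted,
    }


def toy_nli(premise: str, hypothesis: str) -> str:
    """Placeholder classifier: entailment only on a verbatim match, otherwise neutral."""
    return "entailment" if hypothesis.rstrip(".").lower() in premise.lower() else "neutral"
```

Keeping the classifier behind a function signature makes it straightforward to run this check synchronously for high-stakes paths and asynchronously elsewhere.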

RLHF Fine-Tuning for Factual Accuracy

This is a Phase 2 investment — don’t attempt it before your runtime architecture is stable. But for teams with the resources, RLHF alignment specifically targeting factual preference data is the deepest structural fix available.

Standard RLHF optimizes for fluency and helpfulness, which is exactly why an aligned model can sound confident while being wrong. Fact-RLHF replaces general preference labels with factual correctness labels, reportedly pushing accuracy from ~87% baseline to ~96% on factuality evaluation benchmarks. (Source: arXiv, A Concise Review of Hallucinations in LLMs)

The hard requirement: you need a human-preference dataset specifically labeled for factual correctness — not “which answer sounds better” but “which answer is verifiably true.” This curation effort is significant, but the result is a model that is architecturally less prone to hallucination, not just runtime-constrained from expressing it.

How to Benchmark Hallucination Rates in Your CI/CD Pipeline

Shipping without hallucination benchmarks in your regression suite is like deploying without error rate monitoring. You wouldn’t do one; don’t do the other.

- TruthfulQA benchmark — Tests whether LLMs propagate common misconceptions and false beliefs. Run it on every model version change and every significant prompt update. It surfaces hallucinations that are hardest to catch in manual review.

- FaithDial — Tests response faithfulness to a provided knowledge source. This is your RAG pipeline’s direct report card — if FaithDial scores drop after a retrieval configuration change, your grounding is degrading.

Define numeric thresholds and treat them as hard deployment gates: hallucination rate < 5% on TruthfulQA and faithfulness score > 0.88 on FaithDial as the minimum bar. (Source: arXiv, LLM Hallucination: A Comprehensive Survey)
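A deployment gate of this kind can be a few lines in the pipeline. The sketch below assumes an earlier CI step has already produced the two scores; the metric key names and the `gate` and `main` functions are hypothetical, and only the threshold values come from the text above.

```python
import sys

# Hypothetical metric names; "max" means the value must stay below the limit,
# "min" means it must stay above it.
THRESHOLDS = {
    "truthfulqa_hallucination_rate": ("max", 0.05),
    "faithdial_faithfulness": ("min", 0.88),
}


def gate(metrics: dict, thresholds: dict = THRESHOLDS) -> list[str]:
    """Return a list of human-readable failures; an empty list means the gate passes."""
    failures = []
    for name, (kind, limit) in thresholds.items():
        if name not in metrics:
            failures.append(f"{name}: missing from evaluation output")
            continue
        value = metrics[name]
        ok = value < limit if kind == "max" else value > limit
        if not ok:
            relation = "<" if kind == "max" else ">"
            failures.append(f"{name}: got {value}, required {relation} {limit}")
    return failures


def main(metrics: dict) -> int:
    """Print failures to stderr and return a process exit code for the CI job."""
    failures = gate(metrics)
    for failure in failures:
        print("GATE FAILED:", failure, file=sys.stderr)
    return 1 if failures else 0
```

Treating a missing metric as a failure matters: a gate that silently passes when the evaluation step did not run is not a gate.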

Diagnostic Decision Tree — Which Fix to Apply First

Don’t try to implement all five layers simultaneously. Diagnose first, then sequence your fixes:

- Hallucinations appear on recent events or current data → Knowledge cutoff problem → Deploy RAG pipeline first

- Hallucinations appear even when context is provided → Prompt ambiguity or decoding entropy → Fix prompt grounding + lower temperature to 0.3

- Hallucinations are subtle factual drift in historical claims → Training data noise in parametric memory → Evaluate RLHF fine-tuning or domain-specific fine-tune

- Hallucinations are high-stakes, unpredictable, and varied → No single root cause → Implement the full 5-layer architecture in sequence

When in doubt, always start with Layer 1 (prompt grounding): it takes about 30 minutes and immediately reduces the most common class of hallucinations.
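For teams that want the triage written down next to their runbook, the decision tree maps directly onto a lookup table. This is only an encoding of the four branches above; the `Symptom` enum and `first_fix` function are hypothetical names.

```python
from enum import Enum
from typing import Optional


class Symptom(Enum):
    RECENT_OR_CURRENT_DATA = "wrong on recent events or current data"
    WRONG_DESPITE_CONTEXT = "wrong even when context is provided"
    HISTORICAL_FACT_DRIFT = "subtle factual drift in historical claims"
    HIGH_STAKES_VARIED = "high-stakes, unpredictable, and varied"


FIRST_FIX = {
    Symptom.RECENT_OR_CURRENT_DATA: "Deploy the RAG pipeline first",
    Symptom.WRONG_DESPITE_CONTEXT: "Fix prompt grounding and lower temperature to 0.3",
    Symptom.HISTORICAL_FACT_DRIFT: "Evaluate RLHF or domain-specific fine-tuning",
    Symptom.HIGH_STAKES_VARIED: "Implement the full 5-layer architecture in sequence",
}

DEFAULT_FIX = "Start with Layer 1: prompt grounding"


def first_fix(symptom: Optional[Symptom]) -> str:
    """Return the first fix for a diagnosed symptom, or the default when undiagnosed."""
    if symptom is None:
        return DEFAULT_FIX
    return FIRST_FIX[symptom]
```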

Production Checklist — Before You Ship Any LLM Feature

After going through this diagnosis on enough production systems, I keep this checklist open every time a new LLM feature approaches launch. Everything on it has a corresponding incident that taught me why it matters.

- Constrained system prompt with explicit “I don’t know” fallback instruction

- RAG pipeline wired to a verified, current knowledge source with a re-ranking layer

- Temperature ≤ 0.4 configured for all factual response use cases

- Uncertainty quantification layer with a calibrated entropy threshold and fallback routing

- Post-processing NLI moderation check or self-consistency validation pass

- TruthfulQA and FaithDial scores logged and gated in CI/CD pipeline

- Incident response runbook for hallucination reports from live users — including rollback path and user communication template

None of these steps require rebuilding your system from scratch. The architecture you designed isn’t broken — it’s incomplete. Add the layers, in order, and measure the delta at each stage.

References & Sources

- arXiv: A Concise Review of Hallucinations in LLMs and their Mitigation

- arXiv: Large Language Models Hallucination: A Comprehensive Survey

- Nature: Detecting Hallucinations in Large Language Models Using Semantic Entropy

- Nexla: LLM Hallucination: Types, Causes, and Solutions

- Master of Code: Hallucinations in LLMs: What You Need to Know
