Best Open Source LLMs for Prompt Adherence (2026 Fix Guide)

Before you pay for GPT-4o, read this. The problem is almost never your prompt engineering skill. It's a fixable mismatch between your model checkpoint, prompt template, and constraint specificity. After 33 years in IT and the last three deep in LLM deployments, I can tell you that open source LLM prompt adherence failures follow a root-cause pattern, and it repeats across every stack I've touched.

Definition: Open source LLM prompt adherence is the degree to which a self-hosted language model reliably executes all explicit instructions in a prompt — including format rules, length constraints, output structure, and content exclusions — without deviation. For example: a model with high prompt adherence will return exactly 3 bullet points when told to, every time, without hallucinating extra content or dropping required sections.

As of April 2026, DeepSeek R1 leads open-weight models on instruction following with an 87.75% score on the Scale AI SEAL leaderboard, followed by Llama 3.1 405B Instruct at 84.85%. For JSON output format control, Qwen 2.5 hits 99.2% schema adherence in native structured output mode. These are not marketing claims; they are hard benchmark numbers, and they matter when your pipeline is breaking at 3 AM. Scale AI SEAL

Which Open Source LLM Has the Best Prompt Adherence in 2026?

Quick Answer

As of 2026, DeepSeek R1 leads open-source models on instruction following with an 87.75% score on the Scale AI SEAL benchmark, followed by Llama 3.1 405B Instruct (84.85%) and Mistral Large 2 (82.81%). For JSON-structured output, Qwen 2.5 achieves 99.2% schema adherence — the highest among open-weight models. Scale AI SEAL

No single model wins every scenario. DeepSeek R1 is your strongest general-purpose pick for hard-constraint compliance in reasoning-heavy pipelines. Qwen 2.5 dominates structured output tasks when you can force JSON mode. Llama 3.1 405B Instruct sits in a solid middle ground for general system prompt compliance without the overhead of DeepSeek’s reasoning chain. The right choice depends on your constraint type — not hype.

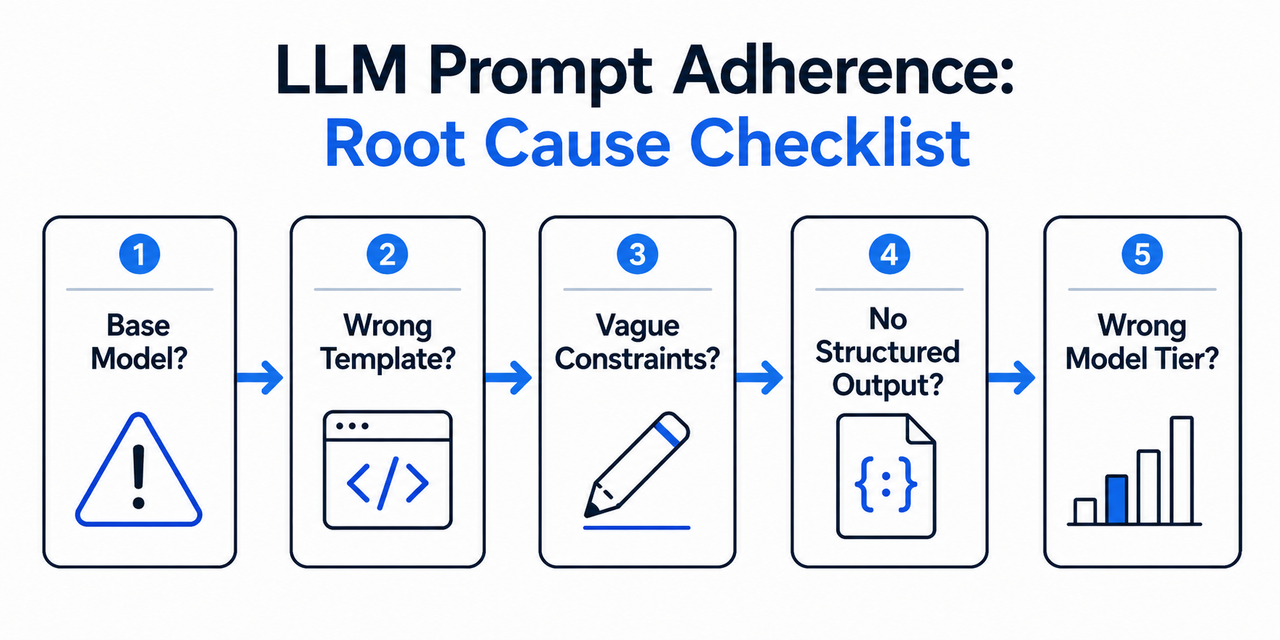

Why Is Your Open Source LLM Ignoring Your Prompts?

I’ve seen this exact frustration dozens of times in production reviews and community threads. The developer is technically solid. The prompt looks reasonable. But the model keeps skipping sections, blowing past word limits, or returning freeform text when JSON was explicitly requested. In almost every case, the fault isn’t the developer. It’s one of three fixable root causes.

Root Cause #1 — You’re Using a Base Model, Not an Instruct Variant

This is the most common and most embarrassing mistake, and I made it myself early on. A base checkpoint (a Llama release without the -Instruct suffix, for example) is a completion engine. It was trained to predict the next token, not to follow instructions, and it has no RLHF alignment or instruction fine-tuning. Asking it to follow a system prompt is like asking a calculator to take notes.

The data backs this up: in a clinical deployment benchmark, Llama-3.3-70B (which Meta publishes only as an -Instruct checkpoint) stalled at 66.7% guideline adherence without structured prompting and produced unsafe recommendations 33.3% of the time. With structured prompts, adherence jumped to 100%. Same weights, completely different behavior. PMC/NIH

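How to check fast: on Hugging Face, verify that the model name contains -Instruct, -Chat, or -it (sometimes followed by a version, as in Mistral-7B-Instruct-v0.3), or that the model card explicitly describes the checkpoint as instruction-tuned or chat-aligned. A few post-trained models, such as deepseek-ai/DeepSeek-R1, carry no suffix, so the model card is the final word. If neither check passes, don't use the model in a task pipeline. HuggingFace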

Root Cause #2 — Mismatched Prompt Template

Every instruct-tuned model was trained with a specific conversation template. Deviate from it and you trigger what one empirical study describes as “instruction alignment escape”: the model partially drops out of instruction-following mode and reverts to raw completion behavior. Mistral models are a well-documented example, because they require strict [INST]...[/INST] delimiters. When those delimiters are missing or malformed, the study reports an “UNKNOWN rate of 4.7–7.2% across all datasets when prompts do not conform to [INST]...[/INST] format, suggesting partial instruction alignment escape,” where UNKNOWN marks responses that could not be used. arXiv

That 4.7–7.2% failure rate sounds small until it's your JSON parser throwing exceptions in production. Here are the native templates for the three model families covered in this guide; match them exactly:

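| Model Family | System Block | Turn Delimiters |
|---|---|---|
| Mistral | None in the classic template; system text goes inside [INST] | [INST] ... [/INST] |
| Llama 3.x | <\|start_header_id\|>system<\|end_header_id\|> | <\|start_header_id\|>user<\|end_header_id\|> / <\|start_header_id\|>assistant<\|end_header_id\|>, each turn ending in <\|eot_id\|> |
| Qwen 2.5 | <\|im_start\|>system | <\|im_start\|>user / <\|im_start\|>assistant, each turn ending in <\|im_end\|> |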

Always pull the official chat template from the model card. Never assume it matches another model’s format, even within the same family. Mistral on HuggingFace
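A cheap lint pass can catch template drift before a rendered prompt reaches the model. The sketch below is hypothetical and not part of any library. Its marker lists are assumptions taken from the public Mistral, Llama 3.x, and Qwen 2.5 chat templates. It only flags three things: missing delimiters, delimiters from another family, and unbalanced turns.

```python
"""Hypothetical linter that flags chat-template delimiter mistakes."""

# Delimiters that identify each family (assumed from the public chat templates).
FAMILY_MARKERS = {
    "mistral": ["[INST]", "[/INST]"],
    "llama3": ["<|start_header_id|>", "<|end_header_id|>", "<|eot_id|>"],
    "qwen2.5": ["<|im_start|>", "<|im_end|>"],
}


def detect_families(prompt: str) -> set[str]:
    """Return every family whose delimiters appear anywhere in the prompt."""
    return {
        family
        for family, markers in FAMILY_MARKERS.items()
        if any(marker in prompt for marker in markers)
    }


def lint_prompt(prompt: str, expected: str) -> list[str]:
    """List template problems for the expected family; an empty list means none found."""
    problems = []
    found = detect_families(prompt)
    if expected not in found:
        problems.append(f"no {expected} delimiters found")
    for other in sorted(found - {expected}):
        problems.append(f"foreign {other} delimiters present")
    if expected == "mistral" and prompt.count("[INST]") != prompt.count("[/INST]"):
        problems.append("unbalanced [INST]/[/INST] pair")
    if expected == "qwen2.5":
        # Every turn is closed except, optionally, the final open assistant turn.
        open_turns = prompt.count("<|im_start|>") - prompt.count("<|im_end|>")
        if open_turns not in (0, 1):
            problems.append("mismatched <|im_start|>/<|im_end|> count")
    return problems


qwen_ok = (
    "<|im_start|>system\nBe brief.<|im_end|>\n"
    "<|im_start|>user\nHi<|im_end|>\n"
    "<|im_start|>assistant\n"
)
print(lint_prompt(qwen_ok, "qwen2.5"))  # []
print(lint_prompt("[INST] Hi", "mistral"))  # ['unbalanced [INST]/[/INST] pair']
print(lint_prompt("<|system|>Hi<|user|>Yo<|assistant|>", "llama3"))  # ['no llama3 delimiters found']
```

The last call shows why generic <|system|> / <|user|> tags fail the llama3 check: Llama 3.x marks roles with header tokens instead.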

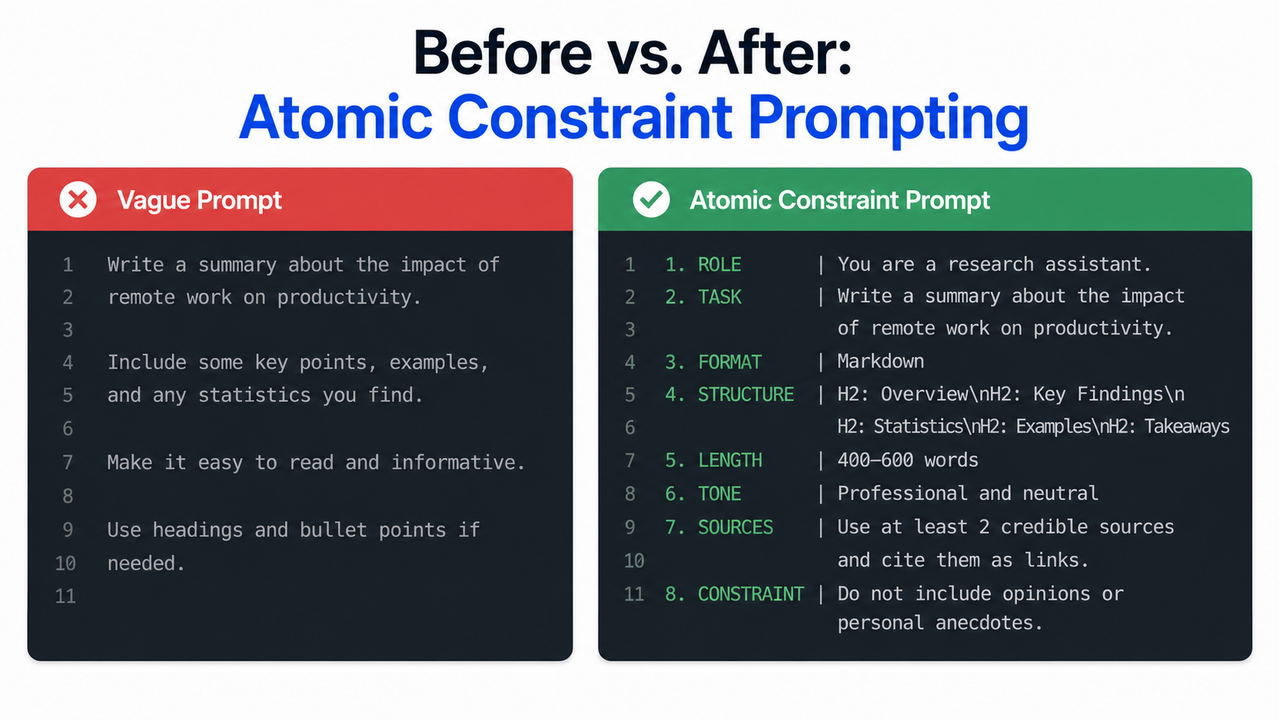

Root Cause #3 — Vague, Non-Verifiable Constraints

The third root cause is purely prompt-side. Instructions like “be concise,” “keep it short,” or “write professionally” are not machine-parseable. There is no token sequence in the model’s training distribution that maps “concise” to a specific behavior — because humans use that word to mean 10 different things.

The IFEval benchmark makes this concrete: it only counts instructions that are programmatically verifiable — “include the word X,” “respond in exactly N sentences,” “do not use bullet points.” Vague qualitative instructions are not even tested, because they can’t be reliably evaluated. If IFEval can’t verify it, your model probably can’t reliably execute it. Scale AI SEAL
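The sketch below shows what "programmatically verifiable" means in practice, using checkers for the three example instructions above. These are simplified stand-ins written for illustration, not IFEval's own implementation. Two assumptions apply: sentences are split with a naive regex, and a bullet is any line starting with -, * or •.

```python
import re


def includes_word(text: str, word: str) -> bool:
    """'Include the word X': whole-word, case-insensitive match."""
    return re.search(rf"\b{re.escape(word)}\b", text, flags=re.IGNORECASE) is not None


def count_sentences(text: str) -> int:
    """'Respond in exactly N sentences': naive split on ., ! or ? plus whitespace/end."""
    parts = re.split(r"[.!?]+(?:\s+|$)", text.strip())
    return sum(1 for part in parts if part.strip())


def has_bullets(text: str) -> bool:
    """'Do not use bullet points': any line that starts with -, * or a bullet glyph."""
    return any(re.match(r"\s*[-*•]\s+", line) for line in text.splitlines())


def check(text: str) -> dict[str, bool]:
    """Score one response against three atomic instructions; each value is pass/fail."""
    return {
        "includes 'latency'": includes_word(text, "latency"),
        "exactly 2 sentences": count_sentences(text) == 2,
        "no bullet points": not has_bullets(text),
    }


print(check("Latency dropped by 40%. Throughput stayed flat."))
```

Each check returns a plain boolean, which is exactly the property a vague instruction like "be concise" lacks.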

How to Fix Open Source LLM Prompt Adherence: 7 Steps

These fixes are ordered by effort. Start at Step 1 before you consider touching model weights or spending on upgrades. In my experience, 80% of adherence failures resolve by Step 3.

Step 1 — Always Load the -Instruct or -Chat Checkpoint

On Hugging Face, open the model's page and confirm that the checkpoint name includes -Instruct, -Chat, or -it, or that the model card states it is instruction-tuned (deepseek-ai/DeepSeek-R1 has no suffix but is post-trained for chat and reasoning). Bookmark the official model card: it also contains the correct prompt template for Step 2. HuggingFace

- ✅ meta-llama/Llama-3.1-405B-Instruct

- ✅ deepseek-ai/DeepSeek-R1

- ✅ Qwen/Qwen2.5-72B-Instruct

- ❌ meta-llama/Llama-3.1-405B (base — no instruction alignment)
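The Step 1 naming check can be scripted as a pre-flight guard in a deployment pipeline. The sketch below is a hypothetical helper that only inspects the repo ID string. It assumes two things: the -Instruct / -Chat / -it naming convention, and a hand-maintained allowlist for post-trained models like deepseek-ai/DeepSeek-R1 that carry no suffix. It never calls the Hugging Face API, so the model card remains the final word.

```python
"""Hypothetical pre-flight check: does a Hugging Face repo ID look instruction-tuned?"""

# Name tokens that conventionally mark aligned checkpoints (compared case-insensitively).
ALIGNED_TOKENS = {"instruct", "chat", "it"}

# Post-trained models without a suffix; keep this list in sync with their model cards.
KNOWN_ALIGNED = {"deepseek-ai/deepseek-r1"}


def looks_instruction_tuned(repo_id: str) -> bool:
    """Heuristic only: inspects the repo ID string, never the weights or the model card."""
    if repo_id.lower() in KNOWN_ALIGNED:
        return True
    model_name = repo_id.split("/")[-1]
    # Split on '-' so a suffix followed by a version (e.g. '-Instruct-v0.3') still matches.
    tokens = {token.lower() for token in model_name.split("-")}
    return not tokens.isdisjoint(ALIGNED_TOKENS)


for repo in [
    "meta-llama/Llama-3.1-405B-Instruct",
    "deepseek-ai/DeepSeek-R1",
    "Qwen/Qwen2.5-72B-Instruct",
    "meta-llama/Llama-3.1-405B",
]:
    label = "aligned" if looks_instruction_tuned(repo) else "BASE?"
    print(f"{label:8s} {repo}")
```

Token matching, rather than a strict "ends with" test, keeps names like Mistral-7B-Instruct-v0.3 and gemma-2-9b-it from being misread as base models.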

Step 2 — Copy the Model’s Exact Native Prompt Template

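Do not write prompt templates from memory. Pull them from the official model card. If you serve through Hugging Face transformers, you can instead let tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True) render the template bundled with the checkpoint. The difference between a working and a broken Mistral prompt is often just missing bracket syntax. Using the wrong delimiter is the fastest way to introduce the 4.7–7.2% silent failure rate documented for malformed Mistral prompts. arXiv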

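```python
# Mistral [INST] format (correct); the tokenizer normally prepends the <s> BOS token
mistral_prompt = "[INST] You are a helpful assistant. Respond in exactly 3 bullet points. [/INST]"

# Llama 3.x format (correct): role headers, each turn closed by <|eot_id|>.
# If your tokenizer also adds a BOS token, drop <|begin_of_text|> to avoid a duplicate.
llama3_prompt = (
    "<|begin_of_text|><|start_header_id|>system<|end_header_id|>\n\n"
    "You are a helpful assistant.<|eot_id|>"
    "<|start_header_id|>user<|end_header_id|>\n\n"
    "Respond in exactly 3 bullet points.<|eot_id|>"
    "<|start_header_id|>assistant<|end_header_id|>\n\n"
)

# Qwen 2.5 format (correct): ChatML turns opened by <|im_start|> and closed by <|im_end|>
qwen_prompt = (
    "<|im_start|>system\nYou are a helpful assistant.<|im_end|>\n"
    "<|im_start|>user\nRespond in exactly 3 bullet points.<|im_end|>\n"
    "<|im_start|>assistant\n"
)
```

Step 3 — Replace Vague Instructions with Atomic Constraints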

This is the highest-ROI step for most developers. Every vague instruction in your prompt is a probability sink. Replace each one with a constraint that has exactly one interpretation. The principle: if a constraint can be evaluated by a script, it’s atomic. If it requires human judgment to verify, rewrite it until it doesn’t. Palantir

| ❌ Vague Instruction | ✅ Atomic Constraint |
|---|---|
| “Be concise” | “Respond in exactly 3 bullet points, each under 15 words” |
| “Write a short description” | “Write exactly 2 sentences. No word ‘amazing’.” |
| “Keep it professional” | “Use formal English. No contractions. No emojis.” |
| “Summarize this” | “Output a 3-sentence summary. Sentence 1: main claim. Sentence 2: key evidence. Sentence 3: conclusion.” |
| “Respond helpfully” | “Answer the question directly in the first sentence. Then provide one example.” |
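Two of the atomic constraints in the table can be checked by a script in a few lines, which is the whole point of writing them that way. The regexes below are rough assumptions, not a standard. The contraction check also flags possessives like "company's". The emoji check covers only the main emoji and symbol blocks. "Use formal English" itself is left out because it still needs human judgment.

```python
import re

# A bullet line: optional indent, then -, * or a bullet glyph, then the item text.
BULLET = re.compile(r"^\s*[-*•]\s+(.*)$")
# Rough contraction test: an apostrophe inside a word ("don't", "it’s").
# Note: it also flags possessives such as "company's".
CONTRACTION = re.compile(r"\b\w+['’]\w+\b")
# Rough emoji test covering the main emoji and symbol blocks only (not exhaustive).
EMOJI = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF]")


def three_short_bullets(text: str, max_words: int = 15) -> bool:
    """'Exactly 3 bullet points, each under 15 words'; any non-bullet line fails."""
    lines = [line for line in text.splitlines() if line.strip()]
    matches = [BULLET.match(line) for line in lines]
    if len(lines) != 3 or not all(matches):
        return False
    return all(len(m.group(1).split()) < max_words for m in matches)


def no_contractions_or_emojis(text: str) -> bool:
    """'No contractions. No emojis.' Whether the English is *formal* still needs a human."""
    return CONTRACTION.search(text) is None and EMOJI.search(text) is None


reply = "- Brews 12 cups in 6 minutes\n- Keeps coffee hot for 2 hours\n- Dishwasher-safe carafe"
print(three_short_bullets(reply), no_contractions_or_emojis(reply))  # True True
```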

Step 4 — Force Structured Output (JSON Mode) Where Possible

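If your pipeline requires structured data, stop relying on free-text instruction following entirely. Serving stacks for both Qwen 2.5 and DeepSeek V3/V4 expose native JSON mode through the OpenAI-compatible response_format parameter. In this mode, Qwen 2.5 achieves 99.2% JSON schema adherence, essentially eliminating the format-compliance failure class for structured outputs. Keep in mind that {"type": "json_object"} guarantees syntactically valid JSON, not your specific schema, so validate keys and types before the data moves on. Some endpoints, including DeepSeek's API, also expect the word "json" to appear in the prompt. Use this mode whenever your downstream consumer is a parser, not a human. Scale AI SEAL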

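```python
from openai import OpenAI

# Assumption: an OpenAI-compatible server (e.g. vLLM) is hosting Qwen 2.5 locally.
client = OpenAI(base_url="http://localhost:8000/v1", api_key="EMPTY")

# Mention "JSON" in the prompt; some JSON-mode endpoints require it.
your_prompt = "Describe a coffee maker as a JSON object with keys 'name' and 'price_usd'."

response = client.chat.completions.create(
    model="qwen2.5-72b-instruct",  # use the model name your server actually exposes
    messages=[{"role": "user", "content": your_prompt}],
    response_format={"type": "json_object"},  # constrain the reply to valid JSON
)
print(response.choices[0].message.content)
```

Step 5 — Run IFEval to Diagnose Which Instruction Types Fail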

Before you change anything else, benchmark your specific model on your specific instruction types. Guessing is expensive. IFEval tells you exactly which constraint categories are failing — keyword constraints, format constraints, length constraints, positional constraints. This is how I approach every new model deployment: benchmark first, optimize second. Scale AI SEAL

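```bash
# The ifeval extra pulls in the task's dependencies (langdetect, immutabledict, nltk)
pip install "lm-eval[ifeval]"

# --apply_chat_template renders prompts with the model's own template (recent lm-eval versions);
# --log_samples keeps per-prompt results so you can see which instruction types fail
lm_eval --model hf \
    --model_args pretrained=your-org/your-model \
    --tasks ifeval \
    --apply_chat_template \
    --log_samples \
    --output_path ./ifeval_results
```

Step 6 — Apply Activation Steering for Persistent Violations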

If Steps 1–5 don’t resolve your adherence problem, you’re dealing with a model-level alignment gap that prompt engineering alone can’t patch. This is where activation steering becomes the right tool. Microsoft’s open-source llm-steer-instruct (MIT license, presented at ICLR 2025) computes a steering vector from paired examples — one with the target instruction, one without — and injects it into the residual stream at inference time. No fine-tuning. No retraining. Microsoft GitHub

```python
# Conceptual pattern from llm-steer-instruct. compute_vector and generate_with_steering
# are placeholder names for illustration, not the repo's API; see the official repo
# for the full implementation.
steer_vector = compute_vector(model, with_instruction, without_instruction)
output = generate_with_steering(model, prompt, steer_vector, alpha=1.5)
```

It has been tested on Phi 3.5, Gemma, and Mistral against IFEval benchmark tasks. If your model is close but not quite compliant on specific instruction types, this is a more surgical fix than a full model swap. Microsoft GitHub
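The toy sketch below shows what "computes a steering vector and injects it into the residual stream" means numerically. Plain Python lists stand in for residual-stream activations, and the numbers are made up. It assumes a difference-of-means vector: mean activation with the instruction minus mean activation without it, added to a hidden state scaled by alpha. This is an illustration, not llm-steer-instruct's code. In a real model the activations come from one transformer layer (for example via a PyTorch forward hook). The tool's exact vector computation, layer choice, and scaling may differ, so check the repo.

```python
"""Toy difference-of-means activation steering on hand-made 4-d activations."""

Vector = list[float]


def mean(vectors: list[Vector]) -> Vector:
    """Element-wise mean of equally sized vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def steering_vector(with_instr: list[Vector], without_instr: list[Vector]) -> Vector:
    """Mean activation with the instruction minus mean activation without it."""
    return [w - wo for w, wo in zip(mean(with_instr), mean(without_instr))]


def steer(hidden: Vector, direction: Vector, alpha: float = 1.5) -> Vector:
    """Add alpha * direction to one residual-stream hidden state."""
    return [h + alpha * d for h, d in zip(hidden, direction)]


# Made-up layer activations for paired inputs (same prompts, with / without the instruction).
with_instruction = [[1.0, 0.5, 0.0, 2.0], [1.2, 0.3, 0.1, 2.2]]
without_instruction = [[0.2, 0.5, 0.0, 1.0], [0.4, 0.3, 0.1, 1.2]]

direction = steering_vector(with_instruction, without_instruction)
steered = steer([0.3, 0.4, 0.05, 1.1], direction, alpha=1.5)
print([round(x, 3) for x in direction])  # [0.8, 0.0, 0.0, 1.0]
print([round(x, 3) for x in steered])    # [1.5, 0.4, 0.05, 2.6]
```

Only the dimensions that differ between the paired inputs move, which is why steering can target one instruction type without retraining anything.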

Step 7 — Upgrade Model Tier If Soft Adherence Persists Beyond Step 6

If you’ve run all six steps and still see systematic hard-constraint compliance failures, the model simply isn’t strong enough for your task profile. Use the Scale AI SEAL leaderboard as your upgrade decision matrix — it’s the most rigorous public ranking for LLM-as-a-judge evaluation on instruction following tasks. Scale AI SEAL

| Model | SEAL Instruction-Following Score | Best Use Case |
|---|---|---|
| DeepSeek R1 | 87.75% | Reasoning + long-form constraints |
| Llama 3.1 405B Instruct | 84.85% | General instruction following |
| Mistral Large 2 | 82.81% | Structured business prompts |
| DeepSeek V3 | 82.34% | Fast, high-throughput pipelines |

My practical rule: if your required task adherence is above 90%, start with DeepSeek R1 and force JSON mode. If you’re below that threshold or running latency-sensitive workloads, Llama 3.1 405B Instruct is the better tradeoff. For a full breakdown of open source model tradeoffs across categories, see the complete guide on the AIQnAHub Troubleshoot hub.

Before vs. After: Seeing Atomic Constraint Prompting in Practice

This is the exact pattern that moved a broken content generation pipeline I reviewed from ~61% format compliance to near-100% — just by rewriting the prompt, no model change:

❌ Bad Prompt: “Write a short product description for a coffee maker.”

✅ Fixed Prompt with Atomic Constraints: “Write a product description for a coffee maker. Format: exactly 2 sentences. Sentence 1: state one feature and one benefit. Sentence 2: end with a call to action. Do not use the word ‘amazing’.”

The second prompt leaves almost no ambiguity. A script can check its sentence count and banned word, and each content slot is pinned to a specific sentence. That leaves the model no decision space to fill with hallucinated formatting choices. This is the constraint structure the IFEval benchmark rewards, and the same pattern carries over to DeepSeek, Qwen, Mistral, and Llama instruct variants. Palantir
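A minimal sketch of measuring format compliance for the fixed prompt, assuming the term means the share of sampled outputs that pass every script-checkable rule. It checks only the sentence count and the banned word; the feature/benefit and call-to-action rules still need a human or an LLM judge. The sentence splitter is naive and the sample outputs are made up.

```python
import re


def passes_spec(text: str) -> bool:
    """Script-checkable rules of the fixed prompt: exactly 2 sentences, no 'amazing'."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    banned = re.search(r"\bamazing\b", text, flags=re.IGNORECASE)
    return len(sentences) == 2 and banned is None


def compliance_rate(outputs: list[str]) -> float:
    """Fraction of sampled outputs that pass every checkable rule (0.0 for no samples)."""
    if not outputs:
        return 0.0
    return sum(passes_spec(o) for o in outputs) / len(outputs)


# Made-up model outputs standing in for a batch of real generations.
samples = [
    "Brews a full carafe in 6 minutes, so mornings start faster. Order yours today.",
    "This amazing machine makes coffee. It is great. Buy now!",
    "A thermal carafe keeps coffee hot for hours. Add it to your cart now.",
]
print(f"format compliance: {compliance_rate(samples):.0%}")  # format compliance: 67%
```

Running the same measurement on outputs from the bad prompt and from the fixed prompt gives a before/after comparison instead of an impression.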

Open Source LLM Prompt Adherence — Frequently Asked Questions

Q1: What is the best open source LLM for following system prompts in 2026?

DeepSeek R1 ranks #1 among open-weight models with an 87.75% instruction following score on the Scale AI SEAL leaderboard — the most rigorous public benchmark for this specific capability. It is the strongest choice for strict system prompt compliance in self-hosted production deployments. For JSON schema tasks specifically, Qwen 2.5 is the top performer at 99.2% adherence in structured output mode. Scale AI SEAL

Q2: Why does my Mistral model keep ignoring part of my prompt?

The most common cause is a malformed prompt template. Mistral instruct models require the exact [INST]...[/INST] delimiter syntax — anything else causes “instruction alignment escape,” documented at a 4.7–7.2% UNKNOWN/unusable response rate across benchmark datasets. Copy the template verbatim from the official Mistral model card on Hugging Face, and verify your system message is placed inside the first [INST] block, not outside it. arXiv

Q3: What is IFEval and how does it measure open source LLM prompt adherence?

IFEval (Instruction-Following Evaluation) is an open benchmark that tests language models exclusively on programmatically verifiable instructions, for example: “include the word X exactly once,” “respond in exactly N sentences,” “do not use bullet points.” Unlike human evaluation, IFEval produces hard pass/fail scores per instruction type, making it the most reproducible and reliable diagnostic for prompt adherence in open-weight models. The benchmark has only 541 prompts, so a run with the lm-eval library is short; for small and mid-sized models it can finish in under 30 minutes. Scale AI SEAL
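IFEval scores the same pass/fail data two ways. Prompt-level accuracy counts a prompt only if every instruction in it was followed; instruction-level accuracy scores each instruction on its own. The sketch below computes both, plus a failure rate per instruction category, from hypothetical results. The record layout is an assumption for illustration. The category:name IDs follow IFEval's naming style (for example length_constraints:number_words).

```python
"""Prompt-level vs instruction-level accuracy, plus failure rate per IFEval-style category."""
from collections import defaultdict

# Hypothetical results: one inner list per prompt, one (instruction_id, followed) pair per instruction.
results = [
    [("length_constraints:number_words", True), ("keywords:forbidden_words", True)],
    [("detectable_format:number_bullet_lists", False), ("punctuation:no_comma", True)],
    [("length_constraints:number_sentences", False)],
    [("detectable_format:json_format", True), ("keywords:existence", True)],
]

# Prompt-level: a prompt passes only if all of its instructions were followed.
prompt_level = sum(all(ok for _, ok in prompt) for prompt in results) / len(results)
# Instruction-level: every instruction is scored independently.
flat = [pair for prompt in results for pair in prompt]
instruction_level = sum(ok for _, ok in flat) / len(flat)

# The category is the part of the ID before the colon, e.g. "length_constraints".
totals: dict[str, int] = defaultdict(int)
failures: dict[str, int] = defaultdict(int)
for instruction_id, ok in flat:
    category = instruction_id.split(":")[0]
    totals[category] += 1
    failures[category] += 0 if ok else 1

print(f"prompt-level {prompt_level:.0%}, instruction-level {instruction_level:.0%}")
for category in sorted(totals, key=lambda c: failures[c] / totals[c], reverse=True):
    print(f"{category:18s} {failures[category]}/{totals[category]} failed")
```

Sorting categories by failure rate points at the constraint type to fix first, which is the diagnosis Step 5 is after.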

Q4: Can I improve prompt adherence without switching models?

Yes — and in most cases you should exhaust prompt-side fixes before touching model selection. Switching from a base to an instruct checkpoint, matching the native prompt template exactly, and replacing vague instructions with atomic constraints resolves the majority of adherence failures I’ve seen in production reviews. Steps 1–3 in this guide cost zero compute and zero budget. Palantir

Q5: What is activation steering and does it help with prompt adherence?

Activation steering is a runtime inference technique — used in Microsoft’s open-source llm-steer-instruct tool — that injects a computed directional vector into the model’s residual stream to bias generation toward instruction-compliant outputs. It requires no fine-tuning, runs at inference time, and has been validated on IFEval tasks across Phi 3.5, Gemma, and Mistral. It’s the right tool when your model is close but systematically failing on one or two constraint types that prompt rewrites haven’t solved. Microsoft GitHub

References & Sources

- Scale AI SEAL — Instruction-Following Evaluation Leaderboard

- Microsoft Research — llm-steer-instruct (GitHub)

- arXiv — Measuring LLMs’ Adherence to Task Evaluation Instructions

- PMC/NIH — Clinical LLM Structured Prompting Benchmark

- Palantir — Best Practices for LLM Prompt Engineering

- HuggingFace — 10 Best Open-Source LLM Models

- HuggingFace — Mistral Large Instruct Model Card
