Transparency Note: This article contains no affiliate products. All recommendations are purely educational and editorially independent.

If you’ve ever landed on an AI tutorial, a Reddit thread, or a LinkedIn post where everyone casually references “Karpathy” as if it’s common knowledge — and you quietly nodded along while having no idea what it meant — you’re not alone. I’ve spoken with dozens of self-taught developers and junior ML engineers who feel this exact pressure: a quiet dread that not knowing the name means they’ve already fallen behind. The truth? You haven’t. You just need the right context. Let me walk you through it.

What Is Karpathy AI? (The Direct Answer)

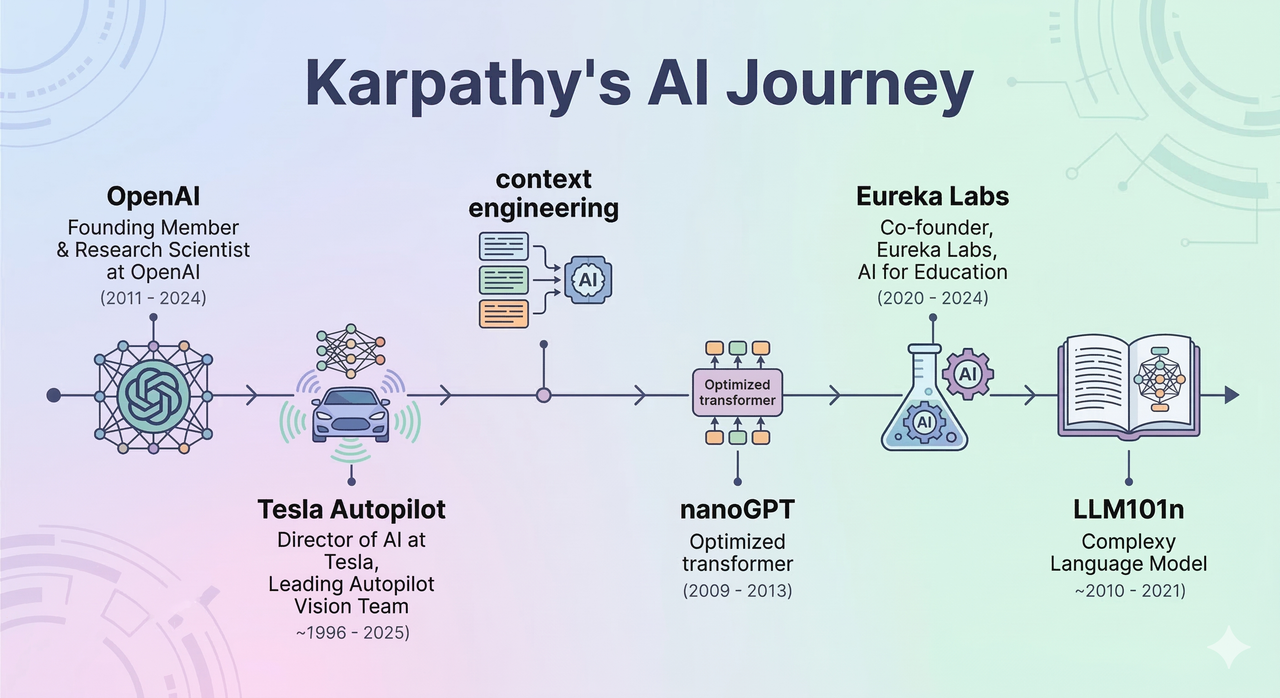

“Karpathy AI” is not a product, company, or framework. It refers to the body of work, ideas, and educational content created by Andrej Karpathy — a Slovakian-Canadian machine learning researcher, founding member of OpenAI, former Director of AI at Tesla Autopilot, and now founder of Eureka Labs. His ecosystem includes tools like nanoGPT, courses like LLM101n, and concepts like context engineering that are reshaping how developers interact with large language models. When people say “Karpathy AI,” they mean his influence on the AI field — and it is enormous.

Who Is Andrej Karpathy, Really?

One thing I’ve noticed is that most learners treat Karpathy as just a “YouTube guy.” That dramatically undersells him. He is one of the most credentialed practitioners alive who also chooses to teach — a rare combination. For a broader look at other major figures shaping AI today, see our guide to AI researchers you should follow.

Early Life and Deep Learning Roots

Born in Bratislava, Slovakia, Karpathy moved to Toronto, Canada, at age 15. He earned his undergraduate degree in Computer Science from the University of Toronto, where he studied under Geoffrey Hinton, the godfather of deep learning himself (Source: Andrej Karpathy – Wikipedia). He then completed his PhD at Stanford University under Fei-Fei Li, focusing on deep neural networks for image captioning and multimodal understanding. During that PhD, he co-created and taught CS231n, Stanford’s first deep learning course, which grew from 150 students in 2015 to over 750 by 2017. That teaching instinct was always there.

OpenAI and Tesla Autopilot — From Research to Real-World Systems

From 2015 to 2017, Karpathy was a research scientist at OpenAI and one of its founding members, working on generative models and deep reinforcement learning. He then became Director of AI at Tesla (2017 to 2022), leading the team responsible for the neural networks powering Tesla Autopilot: a real-time, safety-critical vision system deployed at scale. This is not a background built in notebooks and Kaggle competitions. This is someone whose models helped drive actual cars on real roads. In my experience, that practical credibility is precisely why his tutorials resonate differently from most online courses. (Source: Andrej Karpathy Official Site)

From OpenAI & Tesla to AI Education

In 2023, Karpathy rejoined OpenAI for about a year, leaving in February 2024 to focus on what I’d argue is his most impactful chapter yet: making world-class AI education accessible to everyone.

Launch of Eureka Labs and the “Zero to Hero” LLM Series

In July 2024, Karpathy publicly launched Eureka Labs, an AI-native education startup whose mission is to modernize how people learn in the age of AI. Its flagship product is LLM101n, an undergraduate-level course that takes students from zero to building, training, fine-tuning, and interacting with their own language model. Think of it as a structured curriculum where you actually build the thing instead of just consuming theory. His “Neural Networks: Zero to Hero” YouTube series (covering backpropagation, tokenization, attention mechanisms, and more) has become essential viewing for anyone serious about understanding LLMs from first principles. (Source: Andrej Karpathy – Wikipedia)
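To make “backpropagation” concrete: the first “Zero to Hero” lecture builds micrograd, a tiny scalar autograd engine. The sketch below is an illustrative reconstruction of that idea, not Karpathy’s code. The `Value` class and its methods are written here for the example. Each value records the operation that produced it, and `backward()` walks the graph in reverse topological order, applying the chain rule at every node.

```python
import math


class Value:
    """A scalar that remembers how it was computed, so gradients can flow back through it."""

    def __init__(self, data, children=(), op=""):
        self.data = data
        self.grad = 0.0
        self._backward = lambda: None  # pushes this node's grad into its inputs
        self._prev = set(children)
        self._op = op

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data + other.data, (self, other), "+")

        def _backward():
            # d(a + b)/da = 1 and d(a + b)/db = 1
            self.grad += out.grad
            other.grad += out.grad

        out._backward = _backward
        return out

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data * other.data, (self, other), "*")

        def _backward():
            # d(a * b)/da = b and d(a * b)/db = a
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad

        out._backward = _backward
        return out

    def tanh(self):
        t = math.tanh(self.data)
        out = Value(t, (self,), "tanh")

        def _backward():
            # d tanh(x)/dx = 1 - tanh(x)^2
            self.grad += (1 - t * t) * out.grad

        out._backward = _backward
        return out

    def backward(self):
        # Topologically sort the graph so every node is processed before its inputs.
        order, seen = [], set()

        def build(node):
            if node not in seen:
                seen.add(node)
                for child in node._prev:
                    build(child)
                order.append(node)

        build(self)
        self.grad = 1.0  # d(output)/d(output)
        for node in reversed(order):
            node._backward()


a = Value(2.0)
b = Value(-3.0)
c = a * b + a  # a feeds the graph twice, so its gradient contributions accumulate
c.backward()
print(a.grad, b.grad)  # -2.0 2.0
```

The `+=` in each `_backward` matters. Because `a` is used twice, its gradient is the sum of both paths: `b + 1 = -2`.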

nanoGPT and nanochat as Beginner-Friendly Teaching Tools

nanoGPT is arguably Karpathy’s most shared codebase. It is a minimal, readable, hackable repository for training and fine-tuning GPT-2-style transformers, designed so that learners can read every line and understand it. I’ve personally recommended it to junior Python developers as “the textbook that runs.” More recently, he extended this idea into nanochat, a complete end-to-end pipeline (tokenization → pretraining → supervised fine-tuning → reinforcement learning with a simplified GRPO → inference and web UI) that lets you train your own ChatGPT-style model for roughly $100: about 4 hours on a single 8×H100 GPU node. nanochat will also serve as the capstone project for LLM101n at Eureka Labs.
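The heart of what nanoGPT teaches is causal self-attention, the operation at the center of every GPT block. Below is a minimal pure-Python sketch. It assumes a single attention head and inputs that have already been projected into query, key, and value vectors. nanoGPT itself uses learned projections, multiple heads, and PyTorch tensors. Each position scores itself and every earlier position, normalizes the scores with softmax, and then averages the matching value vectors.

```python
import math


def softmax(xs):
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(queries, keys, values):
    """Single-head scaled dot-product attention with a causal mask.

    queries, keys, values: lists of T vectors (lists of floats), already projected.
    Position t may only attend to positions 0..t, as in a GPT decoder.
    """
    d = len(queries[0])
    outputs = []
    for t, q in enumerate(queries):
        # Score only the current and earlier positions (this loop bound is the causal mask).
        scores = [
            sum(qi * ki for qi, ki in zip(q, keys[s])) / math.sqrt(d)
            for s in range(t + 1)
        ]
        weights = softmax(scores)
        # Output = weighted average of the visible value vectors.
        out = [
            sum(w * values[s][j] for s, w in enumerate(weights))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


q = k = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
v = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
out = causal_self_attention(q, k, v)
print(out[0])  # [1.0, 0.0]: the first token can only attend to itself
```

Position t never reads positions after it. As a result, changing a later value vector leaves every earlier output unchanged, which is what lets a GPT train on all positions of a sequence in parallel.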

Modern Projects: Autoresearch and Context Engineering

This is where things get genuinely exciting — and where Karpathy is pushing the frontier of what individual researchers and developers can do.

What “Autoresearch” Is and How It Automates Experimentation

In March 2026, Karpathy released autoresearch — an open-source agentic ML framework that enables AI agents to autonomously run machine learning experiments while you sleep. The system follows a recursive loop:

- Literature Review — The agent ingests research papers via ArXiv API

- Hypothesis Generation — Proposes potential improvements to investigate

- Code Generation — Writes experimental code autonomously

- Execution — Runs experiments on available cloud hardware

- Analysis — Evaluates results and synthesizes findings

- Iteration — Restarts the cycle with updated hypotheses
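At its simplest, the loop above is a propose, run, evaluate, keep-if-better search. The sketch below is hypothetical and is not autoresearch’s code. `propose_hypothesis` and `run_experiment` are invented stand-ins, a random learning-rate tweak and a toy loss function, for the LLM-driven code generation and real training runs the framework performs. The literature-review step is omitted.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Result:
    hypothesis: dict
    val_loss: float


def propose_hypothesis(best: dict, rng: random.Random) -> dict:
    # Hypothetical stand-in for an LLM proposing a change: rescale the learning rate.
    return {"lr": best["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0])}


def run_experiment(hypothesis: dict) -> float:
    # Toy stand-in for "write code, train, evaluate": this fake loss is lowest at lr = 3e-4.
    return (math.log10(hypothesis["lr"]) - math.log10(3e-4)) ** 2


def research_loop(start: dict, iterations: int, seed: int = 0):
    """Propose -> execute -> analyze -> iterate, keeping only changes that improve the metric."""
    rng = random.Random(seed)
    best = Result(start, run_experiment(start))
    history = [best]
    for _ in range(iterations):
        candidate = propose_hypothesis(best.hypothesis, rng)  # hypothesis generation
        loss = run_experiment(candidate)                      # execution
        history.append(Result(candidate, loss))               # analysis / logging
        if loss < best.val_loss:                              # iterate from the new best
            best = Result(candidate, loss)
    return best, history


best, history = research_loop({"lr": 1e-2}, iterations=50)
print(best.hypothesis, round(best.val_loss, 4))
```

Keeping only strict improvements makes this a greedy hill climb. An agentic system could apply far richer judgment at the analysis step, but the propose, measure, and keep-or-discard skeleton is the same.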

In his demo, Karpathy showed autoresearch improving a character-level language model on the Shakespeare dataset using nanoGPT. The system runs on Anthropic’s Claude Sonnet and Claude Opus for reasoning and code generation — a deliberate choice, Karpathy noted, for Claude’s coding performance. What I find most significant here: this compresses what used to take research teams weeks of iteration into a single overnight compute run.
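What does a “character-level language model” mean in practice? nanoGPT’s character-level Shakespeare setup treats every distinct character in the training text as one token. The sketch below shows a vocabulary built that way, plus its encode and decode functions. The helper name `build_char_vocab` is ours, for illustration only.

```python
def build_char_vocab(text: str):
    """Map each distinct character to an integer id and back (character-level tokenization)."""
    chars = sorted(set(text))  # sorted so the mapping is deterministic
    stoi = {ch: i for i, ch in enumerate(chars)}  # string -> integer
    itos = {i: ch for i, ch in enumerate(chars)}  # integer -> string

    def encode(s: str) -> list[int]:
        return [stoi[c] for c in s]

    def decode(ids: list[int]) -> str:
        return "".join(itos[i] for i in ids)

    return chars, encode, decode


text = "To be, or not to be"
chars, encode, decode = build_char_vocab(text)
ids = encode("to be")
print(len(chars), ids)  # 9 [8, 6, 0, 3, 4]
print(decode(ids))      # to be
```

A character vocabulary is tiny and easy to inspect, but it cannot encode characters it never saw (`encode` raises `KeyError`). That limitation is one reason GPT-2-scale models use subword tokenizers such as BPE instead.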

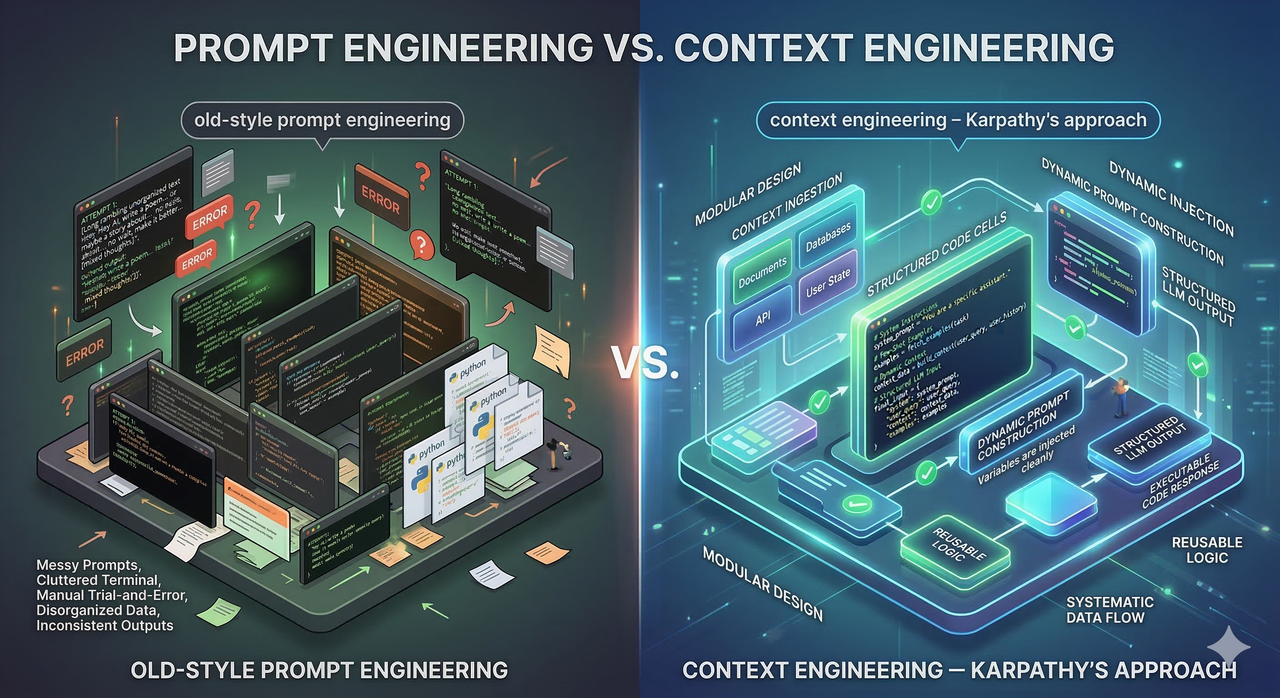

Why Context Engineering Beats Generic Prompt Tuning

The term “prompt engineering” has dominated AI circles for years. In June 2025, Karpathy publicly pushed back on this framing, championing “context engineering” as the more accurate description of what serious production systems actually require. In his words: “In every industrial-strength LLM app, context engineering is the delicate art and science of filling the context window with just the right information for the next step.”

The distinction matters practically:

- Prompt engineering = tweaking the wording of a single text input

- Context engineering = architecting the entire information environment the model sees — memory, retrieved documents, tool outputs, examples, instructions — and making sure that window contains exactly what the model needs for the next inference step

For developers building real applications (not demos), this shift in framing is the difference between an LLM that occasionally works and one that consistently performs.
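One way to picture context engineering is as a packing problem: deciding which pieces of information earn a place in a finite window. The sketch below makes three assumptions: a fixed token budget, a whitespace word count standing in for a real tokenizer, and hypothetical priority labels. This greedy policy is one simple illustration, not a method Karpathy prescribes.

```python
from dataclasses import dataclass


@dataclass
class ContextPiece:
    label: str     # e.g. "instructions", "memory", "retrieved", "tool_output"
    text: str
    priority: int  # lower number = more important, packed first


def count_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def build_context(pieces: list[ContextPiece], budget: int) -> str:
    """Greedily pack the highest-priority pieces that fit within the token budget."""
    chosen, used = [], 0
    for piece in sorted(pieces, key=lambda p: p.priority):
        cost = count_tokens(piece.text)
        if used + cost > budget:
            continue  # skip any piece that would overflow the window
        chosen.append(piece)
        used += cost
    return "\n\n".join(f"[{p.label}]\n{p.text}" for p in chosen)


pieces = [
    ContextPiece("memory", "User previously asked about shipping to Canada.", 2),
    ContextPiece("instructions", "Answer using only the provided documents.", 0),
    ContextPiece("retrieved", "Refunds are processed within 14 days of the return.", 1),
]
ctx = build_context(pieces, budget=16)
print(ctx)  # instructions (6 words) + retrieved doc (9 words) fit; memory (7 words) does not
```

A production system would typically count tokens with the model’s own tokenizer. It might also summarize or truncate a low-priority piece instead of dropping it entirely.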

Why “Karpathy AI” Feels Confusing

I hear this confusion constantly in developer communities, and I want to name it directly so you can move past it.

Common Misconceptions

When people search “what is karpathy ai,” they’re often confused because:

- It sounds like a product name — “Karpathy AI” feels like it could be a startup or SaaS tool

- They’ve seen it paired with frameworks — nanoGPT and autoresearch are real codebases, but they’re educational tools, not commercial platforms

- They assume it’s an API — It isn’t. You can’t “use Karpathy AI” the way you use the OpenAI API

The clearest reframe: “Karpathy AI” = an ecosystem of ideas, open-source code, and courses, not a product you purchase or a service you subscribe to. His GitHub, YouTube channel, and X/Twitter feed are the primary surfaces where this ecosystem lives. (Source: Andrej Karpathy X (Twitter))

How to Search Smarter Around His Work

When you’re trying to find specific resources, pair his name with semantic terms that point to the actual artifact:

- karpathy nanogpt github → the minimal GPT codebase

- karpathy zero to hero youtube → the foundational LLM lecture series

- karpathy llm101n eureka labs → the upcoming structured course

- karpathy autoresearch github → the agentic ML experiment framework

- karpathy context engineering → his arguments for redesigning how LLM apps handle input

How to Leverage Karpathy AI in Your Own Learning

Here is the practical roadmap I’d give to any junior Python developer or self-taught ML engineer today.

Step-by-Step Learning Path

Follow this sequence — it maps to how understanding actually builds:

- Start with “Zero to Hero” (YouTube) — Watch his backpropagation, tokenization, and transformer-from-scratch videos in order. These are the conceptual foundation.

- Clone and read nanoGPT — Don’t run it first. Read it. Every line. If you can follow the code, you understand transformers.

- Experiment with nanochat — Try the $100 ChatGPT training run if budget allows, or trace through the repo to understand the full LLM lifecycle (SFT, GRPO, KV caching).

- Enroll in LLM101n (Eureka Labs) — When available, this will be the most structured path from fundamentals to deployment.

- Adopt context engineering thinking — Before building any LLM-powered feature, ask: “What exactly will be in the model’s context window at inference time?” This question alone elevates your designs.

Defeating Imposter Syndrome With a Structured Curriculum

The hidden fear driving most “what is karpathy ai” searches is: “Everyone else seems to already know this, and I don’t.” Here is what I’ve found to be true after spending time in AI communities: most people invoking Karpathy’s name have only skimmed the surface. They’ve watched one video or cloned nanoGPT without reading it. If you follow the path above sequentially, you will have a deeper working understanding than the majority of people who casually drop his name. The curriculum is public, free, and genuinely excellent — the only barrier is the fear itself.

Quick Reference: Karpathy AI At a Glance

| Dimension | Detail |
|---|---|
| Who | Andrej Karpathy, Slovakian-Canadian AI researcher & educator |
| Key Roles | Founding member of OpenAI; Director of AI at Tesla Autopilot; Founder of Eureka Labs |
| Key Open-Source Tools | nanoGPT, nanochat, autoresearch |
| Key Courses | LLM101n (via Eureka Labs); “Zero to Hero” YouTube series |
| Key Concept Championed | Context engineering (vs. prompt engineering) |
| Where to Follow | karpathy.ai · github.com/karpathy · x.com/karpathy |
| Why It Matters | He is the rare practitioner who makes frontier-level AI knowledge genuinely accessible |
