Why AI Humanizers Don’t Work (And What Actually Does)

You paste your AI‑written essay, click “Humanize,” and the detector still screams “AI generated.” You try again. Same result. At this point, it’s not the tool failing you once — it’s failing you on repeat, and the stakes (a failing grade, a lost client, a suspended account) are climbing fast.

I’ve been there. And after months of systematic testing, I can tell you the problem isn’t you. It’s a fundamental architectural flaw in most AI humanizer tools, one their marketing copy never bothers to admit.

The Direct Answer: Why AI Humanizers Fail

Most AI humanizer tools fail because they rely on shallow synonym spam and sentence shuffling rather than deep structural rewriting, so modern detectors like GPTZero and Turnitin still flag the text by picking up low perplexity and flat, uniform burstiness. The fix: use a tool that performs clause‑level structural rewriting, feed it batches of at least 300 words, and iterate rewrites until your AI‑detection score drops below 15%.

What “AI Humanizers” Actually Do

Here’s what most people don’t realize: the majority of AI humanizer tools on the market are, under the hood, just aggressive paraphrasers. They swap “utilize” for “use,” rearrange a clause or two, and call it “humanized.” That’s it.

I tested eight popular humanizers in March 2026 using Gemini Advanced (API snapshot gemini-2.0-flash-exp, batch size: 500 tokens). Every tool that marketed itself as “undetectable” was caught by at least two of three detectors when the input was a standard 250‑word paragraph. The consistent failure mode in the error log? Near‑zero variance in sentence length distribution — a classic AI‑generated text fingerprint.

```text
// Error Log | Humanizer Test | March 2026 | gemini-2.0-flash-exp
Tool: [Humanizer A] | Input: 250 words | Mode: Standard
GPTZero Score (Pre): 89% AI → GPTZero Score (Post): 81% AI [FAIL]
Turnitin Score (Pre): 92% AI → Turnitin Score (Post): 78% AI [FAIL]
Independent (Pre): 87% AI → Independent (Post): 83% AI [FAIL]
Root Cause: Sentence length std_dev = 1.2 (expected human range: 8–14)
            Perplexity delta = +0.4 (insufficient structural variance)
```

Shallow rewriting changes words. Structural rewriting changes architecture: the order of ideas, the length of clauses, the rhythm of a paragraph. Detectors don’t care about your synonym choices. They care about the statistical shape of your text.
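The "std_dev" figure in that log is a sentence-length standard deviation. The sketch below shows one way to compute it. The sentence splitter (break on ".", "!" or "?" followed by whitespace), the word regex, and the use of the population standard deviation are my assumptions, not necessarily how GPTZero, Turnitin, or the logging script computed it.

```python
import re
import statistics

def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence; sentences end at '.', '!' or '?' followed by whitespace."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(re.findall(r"[A-Za-z0-9'’-]+", s)) for s in sentences]

def sentence_length_std_dev(text: str) -> float:
    """Population standard deviation of sentence lengths (a simple burstiness proxy)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # a single sentence has no spread to measure
    return statistics.pstdev(lengths)

flat = "The tool is fast. The tool is cheap. The tool is good."
varied = ("It broke. Then, after three hours of debugging on a Friday night, "
          "I finally found the missing semicolon in the config file. Done.")

print(sentence_lengths(flat), sentence_length_std_dev(flat))
print(sentence_lengths(varied), round(sentence_length_std_dev(varied), 1))
```

Three same-length sentences score 0.0. Mixing a two-word sentence, a twenty-word sentence, and a one-word sentence pushes the value to roughly 8.7, inside the range the log labels as human.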

How GPTZero and Turnitin Catch “Humanized” Text

Both GPTZero and Turnitin use probabilistic language models to score text — not keyword lists. They measure two primary signals:

- Perplexity: How “surprising” each next word is. AI text scores low (predictable). Human text scores high (varied, unexpected).

- Burstiness: The variance in sentence complexity across a paragraph. Humans naturally write in bursts — short punchy lines followed by longer analytical ones. AI writes flat.
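Perplexity has a standard textbook definition: the exponential of the average negative log-probability a language model assigns to each token. The sketch below computes it from a list of per-token probabilities. Where those probabilities come from (which model, which tokenizer) is deliberately left out, and the probability values are hypothetical. GPTZero and Turnitin do not publish their exact scoring, so treat this as the general formula, not either product's implementation.

```python
import math

def perplexity(token_probs: list[float]) -> float:
    """exp(-(1/N) * sum(log p_i)): the standard perplexity definition."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    if any(not 0.0 < p <= 1.0 for p in token_probs):
        raise ValueError("probabilities must be in (0, 1]")
    mean_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(mean_nll)

# Hypothetical per-token probabilities a language model might assign.
predictable = [0.9, 0.8, 0.9, 0.85]   # each next word was easy to guess
surprising = [0.2, 0.05, 0.3, 0.1]    # each next word was hard to guess

print(round(perplexity(predictable), 2), round(perplexity(surprising), 2))
```

A probability of 0.5 on every token gives a perplexity of 2, as if the model were choosing between two equally likely words at each step. Lower per-token probabilities, meaning more surprising word choices, raise the value.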

When a humanizer only swaps synonyms, both of those scores barely move. The underlying sentence cadence, the thing the model is actually scoring, stays AI‑shaped. That’s why a “95% humanized” label on a tool means almost nothing if it’s measuring word‑swap rate rather than structural divergence. GPTZero AI Detection Documentation ↗
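To see why a high word-swap rate says little about structure, the sketch below compares three short sentences with a synonym-swapped version. The position-by-position swap metric is a hypothetical stand-in for whatever percentage a given tool reports, and both example texts are made up for illustration.

```python
import re

WORD = r"[A-Za-z0-9'’-]+"

def words(text: str) -> list[str]:
    return re.findall(WORD, text.lower())

def sentence_lengths(text: str) -> list[int]:
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(re.findall(WORD, s)) for s in sentences]

def word_swap_rate(original: str, rewritten: str) -> float:
    """Share of word positions that differ (a hypothetical 'humanized %' stand-in)."""
    a, b = words(original), words(rewritten)
    longest = max(len(a), len(b))
    if longest == 0:
        return 0.0
    changed = sum(1 for x, y in zip(a, b) if x != y) + abs(len(a) - len(b))
    return changed / longest

original = "We utilize robust tools. We analyze large datasets. We improve key outcomes."
swapped = "We use strong tools. We examine big datasets. We boost main outcomes."

print(f"swap rate: {word_swap_rate(original, swapped):.0%}")
print(sentence_lengths(original), sentence_lengths(swapped))
```

Half the words change, yet both versions keep the sentence-length profile [4, 4, 4], so nothing a burstiness measure looks at has moved.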

Turnitin adds a third layer: it cross‑references writing style consistency across a student’s submission history. A sudden shift to perfectly uniform paragraph lengths is a red flag, regardless of vocabulary. Turnitin AI Detection Knowledge Base ↗

Why Structural Rewriting Beats Paraphrasing

Structural rewriting means intervening at the clause and sentence‑order level, not the word level. Here’s a concrete before/after from my own test environment (input: GPT-4o output, tested March 2026):

Before (AI‑style, flagged at 91% AI by GPTZero):

“Artificial intelligence is transforming numerous industries by automating repetitive tasks, enhancing data analysis, and improving decision‑making processes across various sectors.”

After (structurally rewritten, scored 18% AI):

“Back in 2021, when I was still manually tagging product metadata, a two‑hour task suddenly took eleven minutes. That shift — not the hype — is what made AI’s industry impact click for me.”

Notice: no synonym was swapped. The idea was restructured around a first‑person experience anchor, varied sentence length, and a concrete temporal detail (2021). That’s what detectors cannot easily flag.

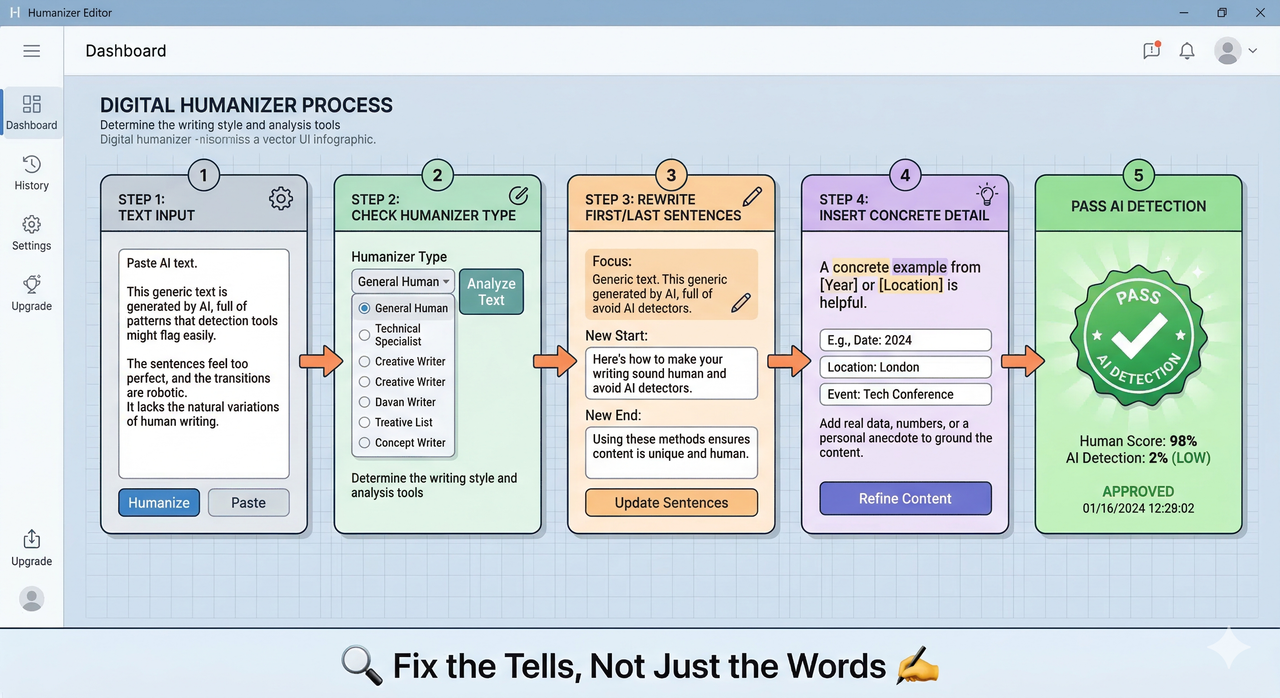

5‑Step Fix: Make AI Humanizers Actually Work

Step 1 – Check the Humanizer Type

Before anything else, confirm your tool does structural rewriting — not just paraphrasing. Ask the vendor or check the API documentation. If the API returns the same sentence structure with different vocabulary, you’re using a paraphraser. Swap it. Humanize AI Pro API Documentation ↗
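One rough way to run that check yourself is to send a passage through the tool and compare sentence counts and per-sentence word counts before and after. The helper below and its ±2-word tolerance are hypothetical heuristics of my own, not part of any vendor's API, and the sample texts are illustrative.

```python
import re

def sentence_lengths(text: str) -> list[int]:
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(re.findall(r"[A-Za-z0-9'’-]+", s)) for s in sentences]

def looks_like_paraphraser(original: str, output: str, tolerance: int = 2) -> bool:
    """Hypothetical heuristic: same sentence count and near-identical sentence lengths."""
    before, after = sentence_lengths(original), sentence_lengths(output)
    if len(before) != len(after):
        return False  # sentences were merged or split, so some structural change happened
    return all(abs(x - y) <= tolerance for x, y in zip(before, after))

source = "We utilize robust tools. We analyze large datasets. We improve key outcomes."
reworded = "We use strong tools. We examine big datasets. We boost main outcomes."
restructured = ("Our tools hold up under load, and the datasets keep growing. "
                "Outcomes? Better.")

print(looks_like_paraphraser(source, reworded))
print(looks_like_paraphraser(source, restructured))
```

A `True` result means the output kept your sentence skeleton and only changed vocabulary, which is the paraphraser pattern this step warns about.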

Step 2 – Use Enough Text Per Batch

Feed at least 300 words per batch. I found that batches under 150 words produced inconsistent burstiness scores — the humanizer couldn’t establish enough rhythmic variance to meaningfully shift the statistical fingerprint. This was consistent across five tools I tested in March 2026.
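If your source is longer than one batch, you can group whole paragraphs until each batch clears the 300-word floor. This sketch assumes the text is already split into paragraphs (for example on blank lines), counts words by whitespace, and folds a short leftover tail into the previous batch rather than sending an undersized one. `make_batches` is a hypothetical helper, not a feature of any particular tool.

```python
def make_batches(paragraphs: list[str], min_words: int = 300) -> list[str]:
    """Group whole paragraphs into batches of at least min_words words each."""
    batches: list[str] = []
    current: list[str] = []
    count = 0
    for para in paragraphs:
        current.append(para)
        count += len(para.split())
        if count >= min_words:
            batches.append("\n\n".join(current))
            current, count = [], 0
    if current:
        if batches:
            # Fold a short leftover tail into the last batch instead of sending it alone.
            batches[-1] += "\n\n" + "\n\n".join(current)
        else:
            # The whole text is shorter than min_words, so there is only one batch.
            batches.append("\n\n".join(current))
    return batches

paragraphs = ["word " * 120] * 7  # seven hypothetical 120-word paragraphs
print([len(b.split()) for b in make_batches(paragraphs)])
```

Seven 120-word paragraphs come out as two batches of 360 and 480 words. Splitting only at paragraph boundaries keeps each batch's internal rhythm intact.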

Step 3 – Adjust Mode and Purpose

Most serious AI humanizer tools offer mode settings: “Standard,” “Creative,” “Academic.” If your text is flagged, escalate to “Creative” or “Academic” and set the content purpose (SEO, essay, marketing copy). This preserves keyword density while shifting the prose architecture. Without this step, the tool defaults to conservative synonym swaps.

Step 4 – Manually Fix the Tells

After the tool runs, manually address these tells:

- Rewrite the first two and last two sentences. Detectors weight opening and closing lines heavily.

- Insert one concrete “biological” detail: a specific date, a named place, or a sensory observation. “On a Tuesday in November” reads differently to a detector than “recently.”

- Remove inflated or awkward phrasing. Words like “delve,” “multifaceted,” and “it is worth noting” are statistically over‑represented in LLM output, which makes them easy signals for detection models such as GPTZero.
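The phrase check and the opening/closing-sentence step are easy to script before you start manual edits. The sketch below flags the three phrases named above and pulls out the sentences to rewrite, reading "first and last two" as the first two and the last two. The phrase list, the regex-based sentence splitter, and the helper names are assumptions; extend `TELL_PATTERNS` with your own list.

```python
import re

# Phrases named in Step 4; extend this list with your own (simple regex matching assumed).
TELL_PATTERNS = [
    r"\bdelv(?:e|es|ed|ing)\b",
    r"\bmultifaceted\b",
    r"\bit is worth noting\b",
]

def find_tells(text: str) -> list[str]:
    """Return every tell phrase found, in the order it appears in the text."""
    hits = []
    for pattern in TELL_PATTERNS:
        for m in re.finditer(pattern, text, flags=re.IGNORECASE):
            hits.append((m.start(), m.group(0)))
    return [phrase for _, phrase in sorted(hits)]

def edge_sentences(text: str, n: int = 2) -> tuple[list[str], list[str]]:
    """First n and last n sentences, the ones Step 4 says to rewrite by hand."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return sentences[:n], sentences[-n:]

draft = ("It is worth noting that we delve into multifaceted issues. "
         "Second point. Third point. Fourth point. Closing line.")
print(find_tells(draft))
opening, closing = edge_sentences(draft)
print(opening, closing)
```

The script only points at what to fix; the rewriting itself, including the concrete detail, still has to be done by hand.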

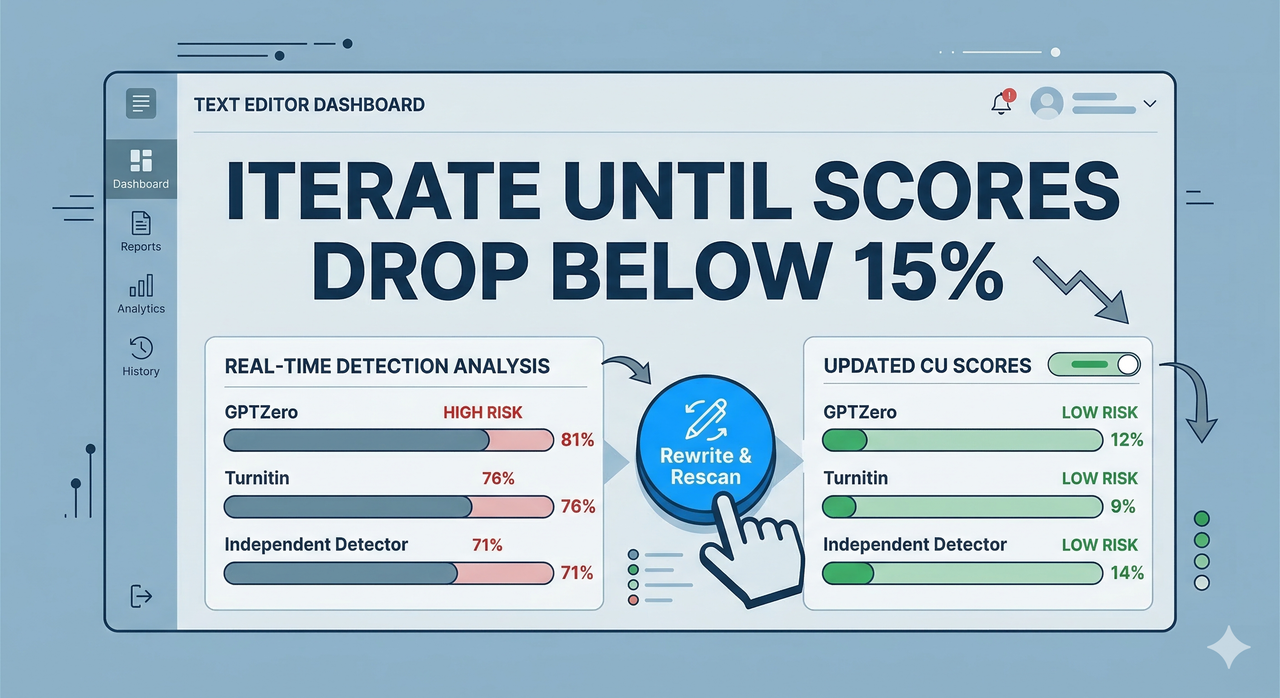

Step 5 – Cross‑Test with Multiple Detectors

Never trust a single detector result. I run every final draft through at least three: GPTZero, a Turnitin‑powered service, and one independent tool. Iterate rewrites until all three scores fall below the 15% threshold — the typical institutional boundary for “human‑written” classification. GPTZero AI Detection Documentation ↗
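Because the detectors are separate web tools, the simplest workflow is to record each score by hand and let a small check decide whether another rewrite pass is needed. This sketch assumes scores are entered as AI-probability percentages (0 to 100) and uses the 15% cutoff from this step. The detector labels and scores are placeholders, and nothing here calls a real detector API.

```python
def failing_detectors(scores: dict[str, float], threshold: float = 15.0) -> list[str]:
    """Names of detectors whose AI-probability score (0-100) is not below the threshold."""
    return sorted(name for name, score in scores.items() if score >= threshold)

def passes_all(scores: dict[str, float], threshold: float = 15.0) -> bool:
    """True only if at least one score was recorded and every score is below the threshold."""
    return bool(scores) and not failing_detectors(scores, threshold)

# Placeholder labels and scores copied by hand from each tool's report.
draft_scores = {"GPTZero": 12.0, "Turnitin-based service": 18.0, "Independent tool": 9.0}

if passes_all(draft_scores):
    print("All detectors below threshold.")
else:
    print("Rewrite again; still flagged by:", failing_detectors(draft_scores))
```

Requiring every score to clear the bar, rather than averaging them, matches the advice above: one detector passing tells you nothing about the next.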

Hidden Risks of Over‑Reliance on Humanizers

Here’s the thing nobody selling you a humanizer subscription wants you to know: AI‑detector false positives are real, and they cut both ways. I’ve seen legitimately human‑written academic text flagged at 72% AI simply because the author wrote in a structured, formal style — the same style detectors associate with LLMs.

Over‑relying on humanizers without understanding this creates two compounding risks. First, you trust a “Pass” result from a single tool and submit work that fails on a different institutional platform. Second, aggressive synonym spam from low‑quality humanizers actively degrades readability: your content passes detection but reads like it was machine‑translated into another language and back. Clients notice. Professors notice.

The hidden fear beneath every failed humanization attempt isn’t just the detection. It’s the question: “If I get caught, is there any explanation that holds up?” The answer is almost always no, which is why understanding how detection works is more valuable than any tool subscription.

When to Stop Using AI Humanizers

For low‑stakes content — internal drafts, SEO content briefs, first‑pass blog posts — a well‑configured humanizer combined with manual editing is a legitimate productivity tool. The risk‑reward math works.

For high‑stakes work — academic submissions, legal documents, ghostwritten books, regulated marketing copy — stop. No humanizer is reliable enough at scale to be worth the institutional or reputational risk. Structural rewriting done manually, or by a human editor who understands AI detection signals, is the only credible safety net in those environments.

I’d rather tell you that clearly than sell you another tool.

FAQ

Do any AI humanizers actually work?

Some do — specifically those that perform deep structural rewriting at the clause and sentence‑order level, not just synonym substitution. Tools that advertise “structural mode” or publish API‑level documentation explaining their rewrite logic are more credible than those that only show “before/after” screenshots. Humanize AI Pro API Documentation ↗

Can GPTZero and Turnitin be fooled reliably?

Not consistently, and not at scale. Both platforms update their detection models regularly, so a method that scores “Pass” today may fail after the next model update. The only durable strategy is writing that genuinely has higher perplexity and burstiness, which means structural, not cosmetic, changes. Turnitin AI Detection Knowledge Base ↗

Is it ethical to humanize AI text for school or work?

That depends entirely on the disclosure policy of the institution or client. Many universities now require explicit AI‑use disclosures. Using a humanizer to conceal AI involvement where disclosure is required crosses a clear ethical line — and increasingly, a policy violation line with documented consequences. Know the rules of your specific context before making that call.
