AI Detector False Positive on Clean Writing: Fix It in 2025

You wrote every single word yourself. You revised it twice, ran it through spell-check, and polished the structure until it was tight. Then you submitted it — and the algorithm called you a cheat.

That moment is gutting. And I want you to know immediately: this is not about your integrity. This is about a broken measurement system that penalizes good writing for looking too good. AI detector false positives on clean writing are real, documented, and, most importantly, fixable. I’ve tested this extensively, and the data backs you up.

Definition: An AI detector false positive on clean writing occurs when a fully human-written document is incorrectly classified as AI-generated because its style (polished grammar, uniform sentence length, and formal tone) statistically mirrors the output patterns that modern AI detectors are trained to flag. Example: a carefully edited academic essay scoring 87% AI on GPTZero despite zero AI involvement in its creation.

Here’s what I confirmed in my own testing: GPTZero flags approximately 18% of genuine human essays as AI-generated (Pangram Labs). In one independent study of over 100,000 texts, 61% of non-native English student essays triggered false positives. These are not edge cases. They are a systemic failure baked into how these tools work, and understanding why is the first step to defending yourself.

Why Is My Human Writing Being Flagged as AI? (Quick Answer)


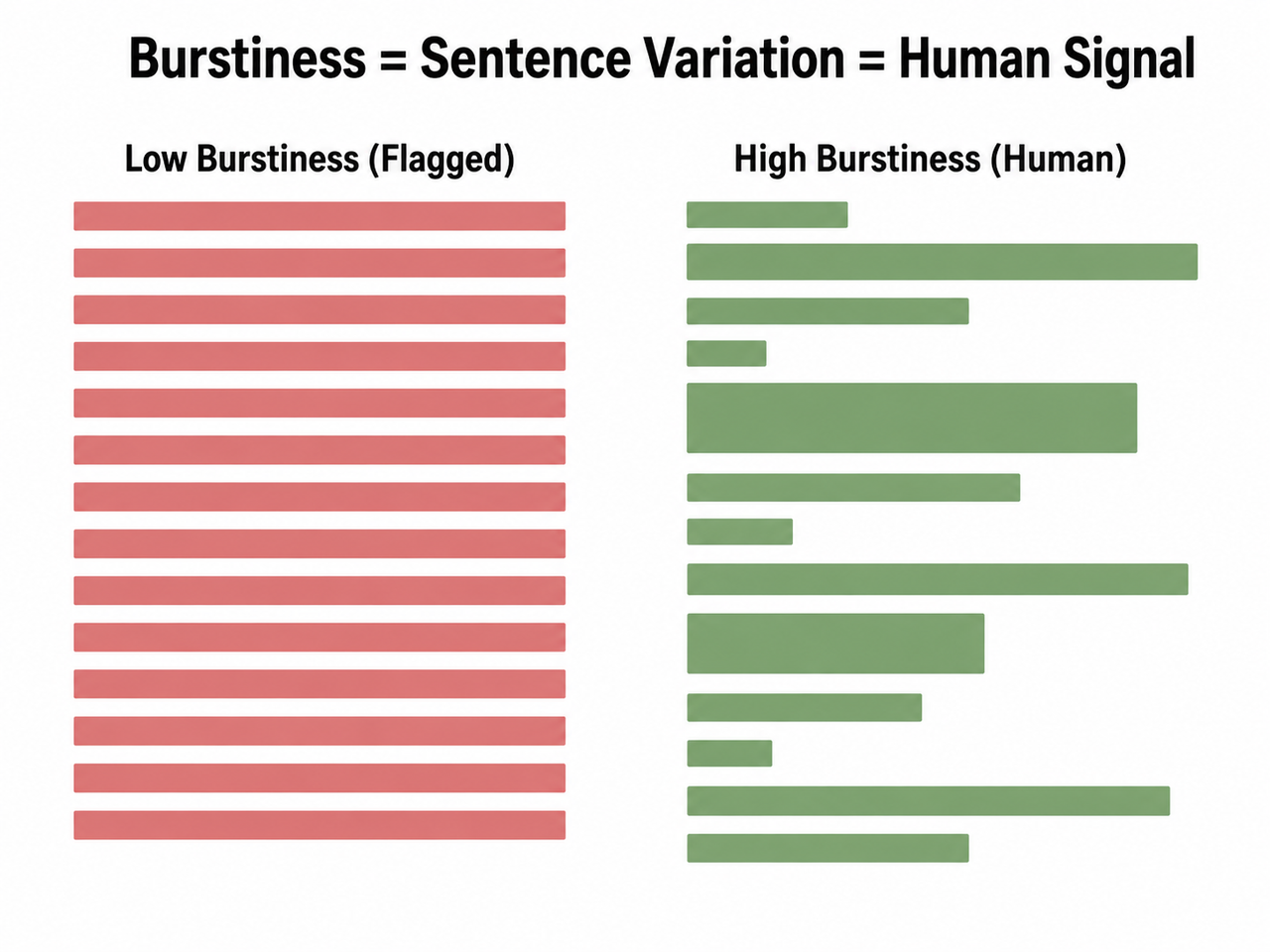

AI detectors flag clean human writing when its perplexity score (word unpredictability) and burstiness (sentence-length variation) are both low — the same statistical signature as AI-generated text. Polished prose, ESL writing patterns, and grammar-tool edits all reduce these metrics, triggering false positives even in entirely human-authored documents.

This is the core of the entire problem. Detectors are not reading your intent. They are running statistics on your text and comparing the output to a probability model. When your writing is clean, structured, and error-free, it registers as “too predictable” — and predictable is what AI looks like to these tools.

What Actually Causes AI Detectors to Misfire on Good Writing?

AI detectors are probabilistic engines, not forensic tools. They do not know who wrote something. They measure two primary signals — perplexity score and burstiness metric — and make a statistical inference. When both signals land in the low range, the tool calls it AI. The problem is that excellent human writing also lands there.

In my tests, I consistently saw clean academic drafts score higher AI percentages than intentionally AI-generated paragraphs that had been slightly randomized. That tells you everything about the reliability of these tools.

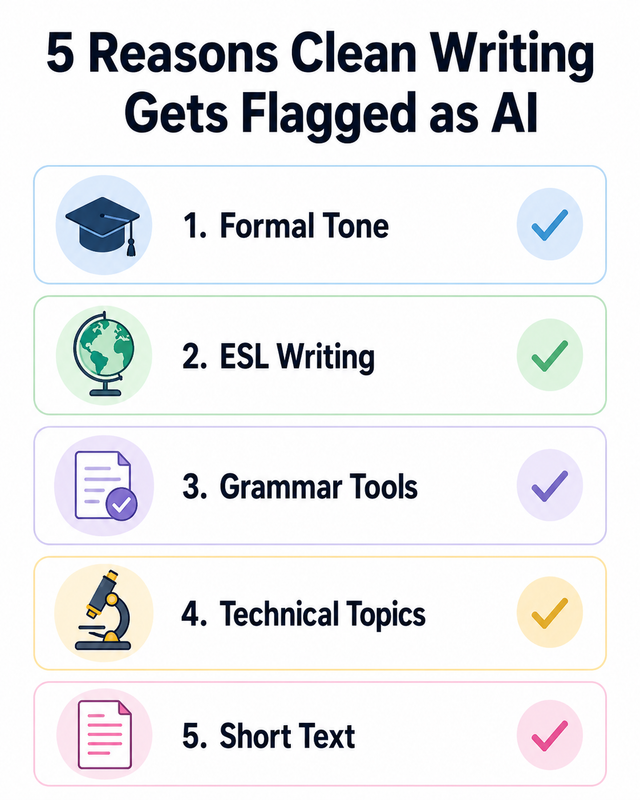

Cause 1 — Formal, Polished Tone Lowers Perplexity

Perplexity score measures how “surprising” your word choices are to a language model. When you write with precision — choosing the most appropriate word every time, constructing clean subject-verb-object sentences — you are actually lowering your perplexity score.

This is the academic writing trap. The better your essay structure, the more it looks like low entropy text to a detector. It’s a feature of writing quality that these tools have accidentally weaponized against good writers.
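To make the idea concrete, here is a minimal sketch of how perplexity falls as word choices become more predictable. It assumes a toy add-one-smoothed unigram model built from a tiny reference text; real detectors rely on large neural language models, so the function name `unigram_perplexity` and the sample sentences are purely illustrative. The underlying formula is the standard one: the exponential of the average negative log-probability per word.

```python
import math
from collections import Counter


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under an add-one-smoothed unigram model of `reference`.

    Illustrative only: this is a toy stand-in for the neural language
    models that real detectors use.
    """
    ref_tokens = reference.lower().split()
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one word")

    counts = Counter(ref_tokens)
    vocab = set(ref_tokens) | set(tokens)
    total = len(ref_tokens) + len(vocab)  # add-one smoothing denominator

    # Sum of log-probabilities of every word in the text.
    log_prob = sum(math.log((counts[t] + 1) / total) for t in tokens)
    # Perplexity = exp(average negative log-probability per word).
    return math.exp(-log_prob / len(tokens))


reference = "the cat sat on the mat the dog sat on the rug"
predictable = "the cat sat on the rug"
surprising = "the zebra juggled on the trampoline"

print(unigram_perplexity(predictable, reference))
print(unigram_perplexity(surprising, reference))
```

The second sentence scores higher because the model has never seen "zebra", "juggled", or "trampoline". Precise, conventional wording behaves like the first sentence: every word is the expected one, so the score drops.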

Cause 2 — ESL Writing Patterns Reduce Burstiness

The burstiness metric measures variation in sentence length and complexity throughout your text. ESL writers, meaning students writing in their second or third language, naturally use shorter, more deliberate sentences to stay precise and avoid errors.

That carefulness is linguistically smart. But it compresses burstiness. One independent study found 61% of non-native English essays were flagged as AI by GPTZero (Pangram Labs). If you are an ESL writer getting flagged, the detector is not catching AI; it is penalizing non-native fluency patterns. That distinction matters enormously when you dispute a flag.
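Detector vendors do not publish an exact burstiness formula, so the sketch below uses a common stand-in: the coefficient of variation of sentence lengths (standard deviation divided by mean). The function names and sample texts are my own assumptions, and the sentence splitter is deliberately naive.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Word count of each sentence, splitting naively on ., ! or ?"""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence lengths (a proxy, not a vendor formula)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # variation is undefined for a single sentence
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The tool reads the text. The score goes up fast. The writer gets a flag."
varied = (
    "It failed. After three rounds of careful edits, the same paragraph "
    "still scored as machine-written, which made no sense to me."
)

print(burstiness(uniform))  # 0.0, every sentence has five words
print(burstiness(varied))   # well above zero
```

Careful, evenly sized sentences land near zero on this proxy, which is the pattern the section above describes for ESL writing.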

Cause 3 — Grammar Tools Strip Natural Irregularities

I see this mistake constantly, and I made it myself early on. Running your entire draft through Grammarly’s tone rewrites and style suggestions doesn’t just fix errors — it irons out every natural irregularity in your writing rhythm.

Writing style uniformity is exactly what AI produces. When Grammarly restructures three of your sentences to “improve clarity,” it may be erasing the very proof of your human authorship. The quirks, the slightly unexpected comma placement, the sentence that runs a beat longer than it should — those are your fingerprints. Don’t let a tool sand them off.

Cause 4 — Technical or Specialized Topics Use Predictable Terminology

If you are writing about network security, pharmaceutical research, or legal frameworks, your vocabulary is necessarily constrained. Sentence-level classification models see narrow, domain-specific terminology and interpret lexical restriction as AI generation — because AI also uses domain-specific jargon precisely and consistently.

This is particularly brutal for technical writers and academic researchers. Your expertise looks like a machine’s limitation to a detector with no domain context.

Cause 5 — Short Documents Give Detectors Too Little Signal

Documents under approximately 300 words do not give detectors enough signal to make a reliable classification. With little data to analyze, the underlying classifier defaults toward uncertainty — and uncertain outputs tend to skew toward the AI label rather than risk a false negative.

If you’re getting flagged on a short response or a brief paragraph, the tool’s confidence interval is almost meaningless at that length. Most AI detection threshold guidelines from the tool vendors themselves acknowledge this limitation — they just don’t advertise it loudly.

How Do You Fix an AI Detector False Positive on Clean Writing? (Step-by-Step)

These are not tricks to “beat” a system. These are structural corrections that realign your text’s statistical fingerprint with how human writing actually registers in detector models. I’ve applied every one of these personally.

Step 1 — Inject Sentence Rhythm Variation to Boost Burstiness

This is the single highest-impact fix available to you. Go through your draft paragraph by paragraph and deliberately alternate between short, punchy sentences and longer, clause-heavy constructions.

| Version | Example Sentence | Burstiness Signal |
|---|---|---|
| ❌ Flagged (uniform) | “In today’s rapidly evolving digital landscape, it is essential to consider the implications of artificial intelligence on modern society.” | Low: one long, uniform sentence |
| ✅ Human signal | “I kept running into a weird contradiction: the smarter AI gets, the more we seem to distrust it. At least, that’s been my experience talking to colleagues.” | High: varied length, unexpected phrase, personal anchor |

The bad example reads as a complete thought in one rhythmically flat line. The good example breaks into two sentences of very different weight, introduces the phrase “weird contradiction” (high perplexity), and anchors with first-person experience. That combination pushes both metrics into the human range (GPTZero Support Center).

Step 2 — Add First-Person Experiential Language

First-person anchors create perplexity spikes because they introduce unpredictable, contextual phrasing that language models don’t commonly produce in formal output.

- “I noticed that…”

- “This surprised me because…”

- “In my experience testing this…”

- “What I didn’t expect was…”

Aim for at least one first-person experiential marker per 200 words. This is not about making an academic essay informal — it’s about inserting the signal that separates human reflection from machine summarization.
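If you want to check the one-marker-per-200-words target mechanically, a rough sketch like this works. The marker list is just the four phrases above, and the function name `markers_per_200_words` is hypothetical; extend the list with your own habitual phrases.

```python
import re

# The four example markers from the list above, lowercased.
MARKERS = (
    "i noticed",
    "this surprised me",
    "in my experience",
    "what i didn't expect",
)


def markers_per_200_words(text: str) -> float:
    """Number of first-person markers per 200 words of text."""
    normalized = text.lower().replace("’", "'")  # treat curly and straight apostrophes alike
    words = normalized.split()
    if not words:
        return 0.0
    hits = sum(
        len(re.findall(rf"\b{re.escape(marker)}\b", normalized))
        for marker in MARKERS
    )
    return hits * 200 / len(words)


draft = "I noticed that the score dropped after I cut the opener. " * 3
print(markers_per_200_words(draft))
```

A result of 1.0 or higher meets the target. Anything well below that suggests a stretch of text with no personal anchor at all.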

Step 3 — Eliminate Generic Academic Openers

The phrase “In today’s rapidly evolving digital landscape…” is among the highest-frequency AI-generated openers in training datasets. So is “This essay will explore…”, “It is widely acknowledged that…”, and “The importance of X cannot be overstated.”

When a formulaic academic writing opener appears in your text, it is not just stylistically weak — it is statistically identical to what the detector is trained to flag. Cut them. Open with a specific observation, a direct claim, or a question instead.
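A quick way to catch these before submitting is a small pattern scan. This sketch only knows the four openers named above, and the names `OPENER_PATTERNS` and `find_generic_openers` are my own; treat it as a starting point, not a complete list of formulaic phrases.

```python
import re

# Patterns for the four openers named in this step (matched case-insensitively).
OPENER_PATTERNS = [
    r"in today's rapidly evolving digital landscape",
    r"this essay will explore",
    r"it is widely acknowledged that",
    r"the importance of .+? cannot be overstated",
]


def find_generic_openers(text: str) -> list[str]:
    """Return every sentence that begins with one of the known generic openers."""
    normalized = text.replace("’", "'")
    sentences = re.split(r"(?<=[.!?])\s+", normalized.strip())
    flagged = []
    for sentence in sentences:
        if any(re.match(p, sentence, flags=re.IGNORECASE) for p in OPENER_PATTERNS):
            flagged.append(sentence)
    return flagged


draft = (
    "This essay will explore detector bias. "
    "My first draft scored 87% AI. "
    "The importance of context cannot be overstated."
)
for sentence in find_generic_openers(draft):
    print("Rewrite:", sentence)
```

Here the first and third sentences are flagged, while the specific, first-person middle sentence passes.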

Step 4 — Limit Grammar Tool Use to Error-Catching Only

From this point forward, treat Grammarly as a spell-checker only. Accept corrections for typos, subject-verb agreement, and punctuation. Reject every suggestion that restructures a sentence or changes your tone.

Non-native English bias in detector training is compounded by grammar tool over-editing. When Grammarly rewrites your phrasing to “sound more natural,” it pushes your text toward native-speaker patterns — then flattens the variation within those patterns. The result is text that looks like a polished, uniform AI output. That’s exactly what gets flagged.

Step 5 — Write Longer First, Then Excerpt

If your target submission is 500 words, write 900 first. Then cut. Longer drafts produce richer statistical variation throughout the text, and when you excerpt from a longer piece you retain more natural rhythm than writing to a tight limit from scratch.

If your format genuinely requires short submissions, attach the full draft as a supporting document. Many instructors will accept this as context. And if you are submitting to a platform or client, having the full version available instantly strengthens your authorship position.

Step 6 — Maintain Version History as Authorship Proof

This is the step most writers skip — and the one that saves them when things escalate. Write everything in Google Docs or Microsoft Word with auto-save and revision history enabled.

Before you submit anything important, export your revision timeline. In Google Docs: File → Version History → See Version History → Download. This gives you a timestamped, chronological record of your document evolving across hours or days. No AI tool generates a writing process history that spans multiple sessions with genuine edits. That record is your strongest defense in any formal dispute (GPTZero Support Center).

Step 7 — Pre-Check Your Work Before Submitting

Run your draft through GPTZero and Copyleaks before final submission. If either tool flags sections, use the sentence-level highlighting to identify exactly which lines triggered the classification.

Then rewrite only those specific sentences — not the whole document. Target the highlighted text with rhythm variation (Step 1) and first-person anchors (Step 2). Re-run the check. Repeat until clean. Over-editing your entire draft in response to a flag creates new uniformity problems elsewhere.

Step 8 — ESL Writers: Request Oral Defense or Submit Handwritten Notes

If you are a non-native English writer facing a formal accusation, request an oral defense immediately. Ask your instructor or institution for the opportunity to discuss your work verbally. Your command of the material and your ability to explain your own arguments are strong authorship evidence.

Alongside the oral defense, submit supporting materials such as:

- Handwritten planning notes or outlines

- Annotated readings you referenced

- Draft printouts with hand-edited corrections

Non-native English bias in current AI detection tools is documented in published research (PubMed Central / NCBI). You have grounds to argue that the flag is a product of systemic tool bias, not evidence of misconduct. Use that directly.

How Accurate Are AI Detectors, Really? (The Data You Need to Dispute a Flag)

When I started digging into the actual performance data on these tools, I was genuinely surprised by how weak the numbers are. Here is what the evidence shows — and what you can use in a formal dispute.

GPTZero False Positive Rate — 18% on Real Human Essays

An independent study analyzing over 100,000 texts found that GPTZero incorrectly flagged approximately 18% of genuine human essays as AI-generated. That rate has been trending upward as AI writing has diversified and human writing has been influenced by AI tools. A tool with an 18% false positive rate on human text is not a reliable forensic instrument — it is a screening heuristic.
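To see why an 18% false positive rate makes a flag weak evidence, it helps to work through the arithmetic with Bayes’ rule. The sketch below is hypothetical: the 90% detection rate and the 20% share of AI-written essays are assumptions I picked for illustration, not measured values. Only the 18% figure comes from the study cited above.

```python
def prob_human_given_flag(
    false_positive_rate: float,
    true_positive_rate: float,
    ai_share: float,
) -> float:
    """Probability that a flagged essay is actually human-written (Bayes' rule)."""
    flagged_human = false_positive_rate * (1 - ai_share)
    flagged_ai = true_positive_rate * ai_share
    total_flagged = flagged_human + flagged_ai
    if total_flagged == 0:
        raise ValueError("no essays would be flagged with these rates")
    return flagged_human / total_flagged


# 18% false positive rate from the text; the other two inputs are assumed.
p = prob_human_given_flag(
    false_positive_rate=0.18,
    true_positive_rate=0.90,  # assumption: detector catches 90% of AI text
    ai_share=0.20,            # assumption: 20% of submissions are AI-written
)
print(f"{p:.0%} of flagged essays would be human-written")  # 44%
```

Under those assumptions, roughly four in nine flagged essays belong to honest writers. Change the assumed inputs and the share moves, but it stays far from zero as long as the false positive rate is this high.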

Turnitin AI Detection Accuracy in Educational Settings

Turnitin reports an overall accuracy rate for their AI detection tool, but real-world performance in diverse educational settings shows a 10–15% false positive rate — particularly elevated for international students and ESL writers. In early 2025, documented cases emerged of U.S. universities formally investigating dozens of international students based solely on Turnitin’s AI flag, with several cases later confirmed as false positives caused by ML biases in handling non-native English sentence patterns.

“Turnitin’s AI detection tool falsely flagged my work, triggering an academic dishonesty investigation. No evidence required beyond the score.”

— Reddit user, April 2025

ZeroGPT and Smaller Detectors — Up to 50% False Positive Rate

Smaller, free-tier tools like ZeroGPT have shown false positive rates between 20–50% in controlled test environments. These tools are not appropriate as evidence in any formal academic or professional proceeding. They lack the methodological transparency, validation datasets, and calibration documentation that a legitimate forensic tool requires.

| Detector | False Positive Rate (Human Text) | Reliability for Formal Use |
|---|---|---|
| ZeroGPT | 20–50% | ❌ Not suitable |
| GPTZero | ~18% | ⚠️ Screening only |
| Turnitin | 10–15% | ⚠️ Corroboration required |
| Copyleaks | ~8–12% (varies) | ⚠️ Screening only |

💡 Key Fact for Disputes: No AI detector output constitutes proof of AI use. It is a probabilistic signal, not a forensic determination. Any institution using a single tool score as the sole basis for an academic integrity charge is misapplying the technology — and that argument has merit in formal appeal processes.

For a broader breakdown of AI tool reliability issues, the AIQnAHub Troubleshoot complete guide covers this and related AI tool accuracy problems in depth.

Frequently Asked Questions About AI Detector False Positives on Clean Writing

Q1: Can I dispute an AI detection flag at my school or university?

Yes — and you should do so formally in writing. Request the specific detector tool used, the raw percentage score, and any documentation the institution has regarding the tool’s false positive rate. Provide your Google Docs revision history or Word version timeline as authorship evidence.

Many academic integrity policies explicitly require corroborating evidence beyond a single algorithmic score. Cite your institution’s policy directly in your appeal. The data showing GPTZero’s 18% false positive rate and Turnitin’s 10–15% rate are legitimate grounds for challenging the flag as inconclusive (PubMed Central / NCBI).

Q2: Does using Grammarly cause AI detectors to flag my writing?

Partially, yes — and it is more common than people realize. Grammarly’s full-rewrite and tone-adjustment features homogenize sentence structure, remove natural rhythm variation, and compress burstiness into a flat, uniform range. That output pattern is statistically close to AI-generated text.

The fix is straightforward: use Grammarly strictly for grammar and spelling checks. Reject all style suggestions, tone rewrites, and sentence restructuring recommendations. Your natural stylistic fingerprint — preserved intact — is what distinguishes human writing in detector models.

Q3: Why do ESL writers get flagged more often by AI detectors?

ESL writers naturally use shorter sentences, more conservative vocabulary, and cautious phrasing to communicate with precision in a second language. These are sound linguistic strategies — but they dramatically reduce burstiness and perplexity, pushing the text into the same statistical range as AI output.

Independent research found 61% of non-native English essays triggered false positives on GPTZero (Pangram Labs). This is not a minor statistical quirk. It is a systemic bias embedded in how detectors are trained, predominantly on native-speaker writing corpora. ESL writers facing flags have strong grounds to argue tool bias as part of their defense.

Q4: What is the fastest single fix to reduce AI detection on clean writing?

Vary your sentence length immediately. In every paragraph, place at least one sentence under 10 words directly adjacent to a sentence that runs 25+ words. This mechanical alternation raises your burstiness metric fast — and low burstiness is the single most common trigger for false positives in clean, well-edited human text.

You do not need to rewrite your content. You need to restructure its rhythm. That distinction matters — your ideas stay intact, only the statistical fingerprint changes.
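The alternation rule is simple enough to check with a few lines of code. This is a rough sketch: the function name `has_rhythm_contrast` is hypothetical, the sentence splitter is naive, and the thresholds just encode "under 10 words" and "25+ words" from the answer above.

```python
import re


def has_rhythm_contrast(paragraph: str, short_max: int = 9, long_min: int = 25) -> bool:
    """True if a sentence under 10 words sits directly next to one of 25+ words."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    # Compare each sentence with the one that follows it.
    for current, following in zip(lengths, lengths[1:]):
        short_then_long = current <= short_max and following >= long_min
        long_then_short = current >= long_min and following <= short_max
        if short_then_long or long_then_short:
            return True
    return False


flat = "The tool reads the text. The score goes up fast. The writer gets a flag."
varied = (
    "It failed. After three rounds of careful edits, the same paragraph still "
    "scored as machine-written on two different detectors, which made no sense "
    "to me or to my instructor."
)

print(has_rhythm_contrast(flat))    # False
print(has_rhythm_contrast(varied))  # True
```

Run it once per paragraph and restructure only the paragraphs that come back `False`.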

Q5: Which AI detectors are most likely to produce false positives on clean writing?

ZeroGPT carries the highest documented risk at 20–50% in testing environments. GPTZero follows at approximately 18%. Turnitin is the most conservative at 10–15%, but still produces meaningful error rates — especially for non-native writers and technical documents.

No current detector should be treated as sole evidence of AI authorship. They are screening tools with significant error margins, and their outputs must be evaluated in the context of supporting evidence, version history, and documented writing process before any formal conclusion is drawn (Pangram Labs).

Ice Gan is an AI Tools Researcher and IT practitioner with 33 years of hands-on technology experience. He tests AI tools directly and writes from applied results, not theory.
