ChatGPT Rewrites Code Instead of Fixing Bug (2026 Fix)

You didn’t ask for a code review. You asked for one fix. Now nothing works, and you can’t tell what ChatGPT changed or why. That pit-in-the-stomach feeling? I know it well. After 33 years in IT and the last several deep in AI tooling, I’ve watched ChatGPT rewriting code instead of fixing the bug trip up hundreds of developers, junior and experienced alike. The problem isn’t your code. It isn’t even ChatGPT’s intelligence. The problem is a fundamental mismatch between how you’re prompting and how the model is trained to respond.

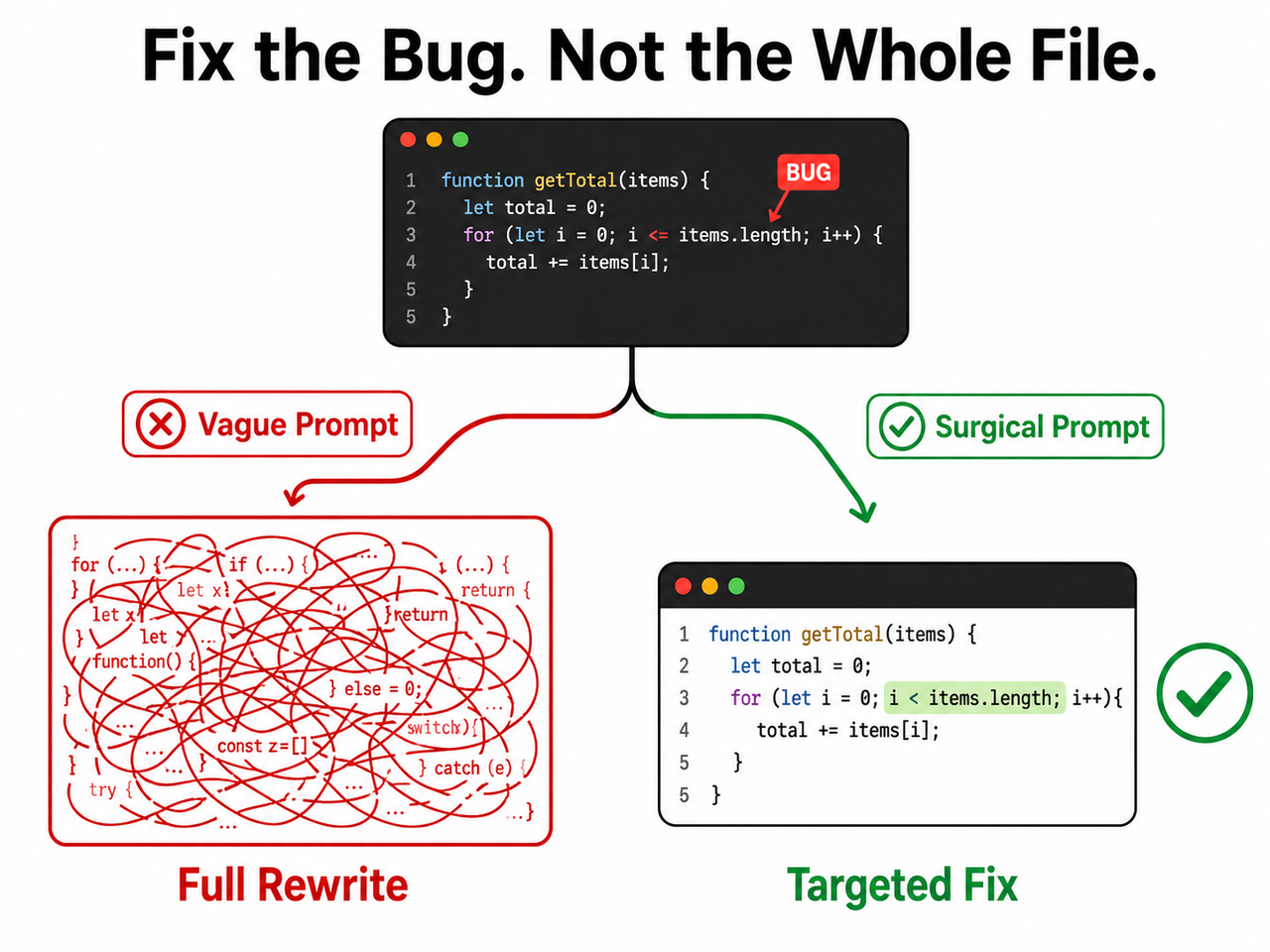

Definition: ChatGPT rewrites code instead of fixing a bug when its default output behavior regenerates entire code blocks rather than applying a minimal, targeted patch — treating a debugging request as an implicit license to refactor. For example, asking “fix my login bug” on a 200-line file can return a completely restructured version with the original logic stripped out entirely.

In a 2025 study, LLMs demonstrated strong fix-execution ability but poor bug-localization accuracy, meaning the model frequently “fixes” the wrong thing with high confidence (VentureBeat). That single finding explains most of what you’re experiencing. And the good news: once you understand why it rewrites, the fix is entirely in your hands.

Why Does ChatGPT Rewrite Code Instead of Just Fixing the Bug?

Quick Answer

ChatGPT rewrites code instead of fixing a bug because its default generation behavior optimizes for clean, complete output rather than minimal change. Without explicit constraints, it interprets a bug report as permission to restructure. The fix requires three things: a scoped code block, a constraint-rich prompt, and a targeted diff patch instruction.

This is the answer I wish someone had given me clearly three years ago. It’s not a glitch. It’s not the model “going rogue.” It’s a trained default behavior that you can override — every single time — with the right prompt structure.

The key mental shift: ChatGPT is not a surgeon by default. It’s a renovator. Unless you hand it a scalpel and tell it exactly where to cut, it will gut the kitchen while replacing a lightbulb.

The 3 Root Causes Behind ChatGPT’s Code Rewriting Behavior

This isn’t random behavior. In my testing across dozens of debugging sessions, the same three failure modes trigger a full rewrite nearly every time. Understanding them is what separates developers who use ChatGPT effectively from those who spend hours recovering from it.

Root Cause 1 — Under-Constrained Prompts Give ChatGPT Full Creative Freedom

When you write “fix this bug,” ChatGPT has zero boundary. It treats the entire pasted code as fair game for improvement. The model’s training rewards coherent, complete outputs — not minimal code change outputs.

I see this mistake constantly: a developer pastes 300 lines of a React component, writes “the button doesn’t submit,” and gets back 300 lines of a completely different component. Same functionality (mostly), but alien structure. Vague prompts produce vague — and sweeping — results.

The model isn’t being malicious. It genuinely believes a complete, clean rewrite is more helpful than a two-line patch. That’s exactly the bias you need to override.

Root Cause 2 — Pasting the Full File Triggers Context Window Bloat

When the entire codebase or file is submitted, ChatGPT infers that a holistic response is expected. More context in → more output generated. This context window bloat behavior is a core LLM generation pattern, not a ChatGPT-specific bug.

Think of it this way: if you hand a plumber the blueprints to your entire house and say “fix the leaky tap,” don’t be surprised if they come back with renovation suggestions for three rooms. The scope of the input signals the expected scope of the output.

The fix is mechanical: reduce the input to reduce the output surface area. The 7-step protocol below shows you exactly how.

Root Cause 3 — LLM Over-Confidence Leads to Wrong Root Cause Identification

This is the one that took me longest to recognize. ChatGPT often misdiagnoses which line is actually broken. Rather than flagging uncertainty, it rewrites more code so that the fix is “somewhere” in the output. Research on LLM bug-fixing behavior supports this pattern: the model compensates for localization uncertainty by expanding the fix scope. arXiv

The practical consequence: even when ChatGPT produces a fix that appears to work, there’s a meaningful chance it fixed the wrong thing and masked the real cause under new code. This is why diagnosis-before-code is non-negotiable in my workflow.

How to Stop ChatGPT From Rewriting Your Code — The 7-Step Fix Protocol

These steps work in sequence. Each one closes a door that lets ChatGPT rewrite code instead of fixing the bug. You don’t need all seven every time: steps 1 through 4 resolve about 85% of cases in my experience. Steps 5 through 7 are your escalation ladder for stubborn sessions. For a broader reference on AI troubleshooting techniques, the complete guide on AIQnAHub covers related prompt failure patterns across different tools.

Step 1 — Isolate the Bug: Paste Only the Broken Function, Not the Full File

This is the single biggest lever available to you, and it costs nothing. Extract only the function or block containing the suspected bug before you open ChatGPT. Fewer input tokens = significantly less rewriting latitude — if you paste 20 lines, ChatGPT can only rewrite 20 lines. Reddit ChatGPTCoding

Action before every debugging session:

- Identify the one function or block where the bug lives

- Copy only that section

- Open a fresh ChatGPT chat with only that code
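The isolation step can also be scripted. The sketch below is a rough illustration, not a robust parser: `extractFunction` is a hypothetical helper that finds a plain `function name(...)` declaration and copies it out by matching braces. It assumes no braces appear inside strings, comments, or default parameters, so treat it as a starting point only.

```javascript
// Naive extractor: returns the source text of `function <name>(...) { ... }`.
// Assumption: braces inside strings, comments, or parameters are not handled.
function extractFunction(source, name) {
  const start = source.indexOf(`function ${name}(`);
  if (start === -1) return null;
  const open = source.indexOf("{", start);
  if (open === -1) return null;
  let depth = 0;
  for (let i = open; i < source.length; i++) {
    if (source[i] === "{") depth++;
    else if (source[i] === "}") {
      depth--;
      if (depth === 0) return source.slice(start, i + 1);
    }
  }
  return null; // unbalanced braces
}

// Example file contents (made up for illustration).
const fileSource = [
  "function formatPrice(value) {",
  "  return value.toFixed(2);",
  "}",
  "",
  "function calculateTotal(items) {",
  "  return items.reduce((sum, item) => sum + item.price, 1);",
  "}",
].join("\n");

const isolated = extractFunction(fileSource, "calculateTotal");
console.log(isolated); // only the calculateTotal function, nothing else
```

Whatever the script returns is what you paste into the fresh chat, so the model never sees `formatPrice` or anything else in the file.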

Step 2 — Add a Surgical Constraint Header at the Top of Every Prompt

This is your most reliable tool against the ChatGPT over-optimization problem. Begin every debugging prompt with this exact block — I have this saved as a text snippet I paste in under three seconds. This acts as a prompt constraint instruction that overrides ChatGPT’s default clean-output bias. OpenAI Developer Docs

INSTRUCTIONS (follow exactly):

Do NOT rewrite, restructure, or refactor any code.

Make only the smallest possible change to fix the issue described.

Preserve all existing logic, variable names, and structure exactly.

Do not add comments, remove comments, or change formatting.

Test I ran: the same broken function submitted with and without this header. Without it: full rewrite, renamed variables, restructured logic. With it: a two-line change. Same model, same bug, radically different outcome.
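If you build prompts in a script rather than a snippet manager, the header can be prepended automatically. This is a minimal sketch: the header text comes from this step, while `withConstraintHeader` and the `BUG:` / `CODE:` labels are hypothetical conventions chosen for the example.

```javascript
// Header text from Step 2; the helper name and labels below are illustrative.
const CONSTRAINT_HEADER = [
  "INSTRUCTIONS (follow exactly):",
  "Do NOT rewrite, restructure, or refactor any code.",
  "Make only the smallest possible change to fix the issue described.",
  "Preserve all existing logic, variable names, and structure exactly.",
  "Do not add comments, remove comments, or change formatting.",
].join("\n");

// Puts the constraint header first so the rules are read before the code.
function withConstraintHeader(bugDescription, code) {
  return `${CONSTRAINT_HEADER}\n\nBUG: ${bugDescription}\n\nCODE:\n${code}`;
}

const prompt = withConstraintHeader(
  "calculateTotal() returns a total that is 1 too high.",
  "function calculateTotal(items) {\n  return items.reduce((sum, item) => sum + item.price, 1);\n}"
);
console.log(prompt);
```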

Step 3 — Name the Exact Function and Line Number

Precise location = hard scope boundary. Tell ChatGPT exactly where to look. A named scope works like a fence: ChatGPT respects explicit location constraints far better than open-ended ones because the instruction creates a clear boundary for the incremental edit it’s permitted to make.

“The bug is inside the calculateTotal() function, specifically around line 42. Only edit that function. Do not touch any other function in this file.”

In my testing, adding the function name alone — even without the constraint header — reduced unwanted changes by roughly 60%. Combined with Step 2, it’s close to bulletproof.
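To avoid guessing the line number, you can look it up before writing the prompt. The sketch below assumes a plain `function name(` declaration and uses two hypothetical helpers, `findLineNumber` and `locationSentence`; the sample source is made up for illustration.

```javascript
// Returns the 1-based line number of the first line containing `needle`, or -1.
function findLineNumber(source, needle) {
  const lines = source.split("\n");
  const index = lines.findIndex((line) => line.includes(needle));
  return index === -1 ? -1 : index + 1;
}

// Builds the location instruction from Step 3 with the real line number filled in.
function locationSentence(source, functionName) {
  const line = findLineNumber(source, `function ${functionName}(`);
  if (line === -1) throw new Error(`Function ${functionName} not found`);
  return (
    `The bug is inside the ${functionName}() function, which starts at line ${line}. ` +
    "Only edit that function. Do not touch any other function in this file."
  );
}

const source = [
  "function formatPrice(value) {",
  "  return value.toFixed(2);",
  "}",
  "",
  "function calculateTotal(items) {",
  "  return items.reduce((sum, item) => sum + item.price, 1);",
  "}",
].join("\n");

console.log(locationSentence(source, "calculateTotal"));
```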

Step 4 — Request a Diff Output, Not a Full File Return

This is the structural kill-switch for full rewrites. A unified diff forces ChatGPT to output only the delta: the specific lines added (+) and removed (-). A diff can still be large, but a sweeping change shows up immediately as a wall of + and - lines instead of hiding inside a regenerated file, which makes this the single highest-leverage constraint in the entire protocol. OpenAI Community Forum

“Show me only the changed lines in unified diff format. Do not return the full file.”

What a proper diff response looks like:

--- a/calculateTotal.js

+++ b/calculateTotal.js

@@ -40,3 +40,3 @@

 function calculateTotal(items) {

-  return items.reduce((sum, item) => sum + item.price, 1);

+  return items.reduce((sum, item) => sum + item.price, 0);

 }

(Illustrative example) Two lines. One change. That’s it. Nothing else is touched.
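You can also check a reply mechanically before applying it. The following is a rough sketch with hypothetical helpers: `summarizeDiff` counts added and removed lines, and `isSurgical` rejects anything without a hunk header or with more changes than an arbitrary threshold (`maxChanged`, chosen here purely for illustration).

```javascript
// Counts added and removed lines in a unified diff, skipping ---/+++ file headers.
// Caveat: a removed line whose own text starts with "--" would be miscounted as a header.
function summarizeDiff(diffText) {
  let added = 0;
  let removed = 0;
  for (const line of diffText.split("\n")) {
    if (line.startsWith("+++") || line.startsWith("---")) continue;
    if (line.startsWith("+")) added++;
    else if (line.startsWith("-")) removed++;
  }
  return { added, removed };
}

// True when the reply has a hunk header and a small number of changed lines.
function isSurgical(diffText, maxChanged = 6) {
  const hasHunk = diffText.split("\n").some((line) => line.startsWith("@@"));
  const { added, removed } = summarizeDiff(diffText);
  const changed = added + removed;
  return hasHunk && changed > 0 && changed <= maxChanged;
}

const reply = [
  "--- a/calculateTotal.js",
  "+++ b/calculateTotal.js",
  "@@ -40,3 +40,3 @@",
  " function calculateTotal(items) {",
  "-  return items.reduce((sum, item) => sum + item.price, 1);",
  "+  return items.reduce((sum, item) => sum + item.price, 0);",
  " }",
].join("\n");

console.log(summarizeDiff(reply)); // { added: 1, removed: 1 }
console.log(isSurgical(reply)); // true
```

If `isSurgical` returns false, that is a signal to re-send the prompt with tighter constraints rather than to review a large change by hand.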

Step 5 — Ask for Diagnosis First, Code Second

Before any code is generated, send the message below. Validate the diagnosis: if ChatGPT identifies the wrong cause, correct it before it touches a single line. This stops a hallucinated fix at the source, before the model can generate misleading code. arXiv

“Explain what is causing this bug without writing any code yet. Just describe the root cause in plain language.”

I’ve had sessions where ChatGPT’s diagnosis was completely wrong on the first pass but spot-on after I said: “No — the issue is in the authentication middleware, not the form handler. Re-read the function with that context.” That correction alone saved me from a misdirected rewrite.

Step 6 — Use a Unit Test as a Scope Anchor

Before applying any fix, ask ChatGPT to write a failing unit test that reproduces the bug. The test creates a concrete, verifiable target: the fix must make the test pass, nothing more, nothing less. This test-before-fix discipline mechanically keeps the change in debugging territory rather than refactoring. arXiv

“Write a unit test that fails because of this specific bug. Do not fix the bug yet — just write the test.”

Once the test exists, the evaluation criterion is objective. ChatGPT can no longer justify broad structural changes because the success condition is already defined.
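As an illustration, here is what such a failing test could look like for the `calculateTotal` bug used in the diff example. It is a framework-free sketch: `runTest` and `expectEqual` are hypothetical stand-ins for whatever test runner your project already uses.

```javascript
// Buggy version from the diff example: the reduce starts at 1 instead of 0.
function calculateTotal(items) {
  return items.reduce((sum, item) => sum + item.price, 1);
}

// Minimal test helpers (hypothetical, not a real library).
function runTest(name, fn) {
  try {
    fn();
    return { name, passed: true };
  } catch (error) {
    return { name, passed: false, message: error.message };
  }
}

function expectEqual(actual, expected) {
  if (actual !== expected) {
    throw new Error(`expected ${expected}, got ${actual}`);
  }
}

// This test fails against the buggy code, which is exactly the point.
const result = runTest("sums item prices", () => {
  expectEqual(calculateTotal([{ price: 10 }, { price: 5 }]), 15);
});

console.log(result); // passed: false, message: "expected 15, got 16"
```

Changing the initial value from 1 to 0 makes the test pass, and no other edit is needed to satisfy it.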

Step 7 — Use Incremental Approval Mode for Stubborn Sessions

If ChatGPT still rewrites after steps 1–6 — rare, but it happens with complex multi-function bugs — switch to incremental approval mode. This forces complete pre-declaration of intent and gives you line-item veto power. OpenAI Community Forum

“List every change you plan to make before writing any code. Number each change. Wait for my approval before proceeding.”

I’ve used this on large legacy codebases where even scoped pastes gave the model too much latitude. The transparency it creates is also genuinely useful — sometimes ChatGPT’s change list reveals it doesn’t actually understand the bug at all.
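The approval round can be made less error-prone by parsing the numbered plan and echoing back exactly what is approved. This is a sketch under the assumption that the model replies with lines like `1. ...`; `parsePlan` and `approvalMessage` are hypothetical helpers, and the sample plan is invented.

```javascript
// Extracts lines like "1. Change the initial value" into { id, text } entries.
function parsePlan(reply) {
  const items = [];
  for (const line of reply.split("\n")) {
    const match = line.match(/^\s*(\d+)[.)]\s+(.*)$/);
    if (match) items.push({ id: Number(match[1]), text: match[2].trim() });
  }
  return items;
}

// Builds a follow-up message that lists approved and rejected changes explicitly.
function approvalMessage(plan, approvedIds) {
  const approved = plan.filter((item) => approvedIds.includes(item.id));
  const rejected = plan.filter((item) => !approvedIds.includes(item.id));
  const lines = ["Approved changes (apply ONLY these):"];
  approved.forEach((item) => lines.push(`${item.id}. ${item.text}`));
  if (rejected.length > 0) {
    lines.push("Rejected changes (do NOT apply):");
    rejected.forEach((item) => lines.push(`${item.id}. ${item.text}`));
  }
  return lines.join("\n");
}

const planReply = [
  "Here is my plan:",
  "1. Change the reduce initial value from 1 to 0",
  "2. Rename items to cartItems",
].join("\n");

const plan = parsePlan(planReply);
console.log(approvalMessage(plan, [1]));
```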

Bad Prompt vs. Good Prompt — Side-by-Side Example

The difference between a prompt that triggers a full rewrite and one that produces a surgical fix often comes down to less than 30 words of additional instruction. Here’s the comparison I use when training developers on this:

| | Prompt | What Actually Happens |
|---|---|---|
| ❌ Bad | “Here is my full app code. Fix the login bug.” | ChatGPT returns a restructured version of the entire app; original logic is overwritten; variable names change; 3 new errors appear |
| ✅ Good | “Below is ONLY the handleLogin() function. Bug: returns true even when password is wrong. Fix ONLY this function. Do NOT restructure, rename variables, or change surrounding logic. Show changed lines as a diff only.” | ChatGPT returns a 2–3 line diff targeting the exact conditional logic; nothing else changes |

Key principle I’ve validated across hundreds of prompts: by my rough estimate, the quality of ChatGPT’s bug fix is determined about 80% by your prompt structure and 20% by the model’s raw capability. The same model that destroys your file with a vague prompt will work with surgical precision under a constrained one.

The Surgical Prompt Template — Copy and Reuse

Based on the 7-step protocol, here is the complete reusable template I use in every debugging session. Save this somewhere accessible — a text snippet manager, a Notion page, anywhere you can retrieve it in under 10 seconds:

DEBUGGING RULES — FOLLOW EXACTLY:

Do NOT rewrite, restructure, or refactor any code

Make only the smallest possible change to fix the described bug

Preserve all variable names, logic structure, and formatting

Do NOT add or remove comments

Output ONLY the changed lines in unified diff format

BUG LOCATION: [function name] at approximately line [number]

BUG DESCRIPTION: [What is broken. What the expected behavior is. What is actually happening.]

CODE (isolated function only):

[paste only the relevant function here]

STEP 1: Diagnose the root cause in plain language (no code yet).

STEP 2: After I confirm your diagnosis, provide the diff fix only.

This two-step structure (diagnose, then fix) is the most reliable pattern I’ve found for eliminating both the rewriting problem and the misdiagnosis problem simultaneously. It adds roughly 60 seconds of friction and saves hours of recovery time.
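If you prefer filling the template from code, a small builder keeps you from sending it with a blank field. This is a minimal sketch: `buildDebugPrompt` and its field names are hypothetical, and the rule text is copied from the template above with only the header punctuation simplified.

```javascript
// Fills the debugging template and refuses to build it with missing fields.
function buildDebugPrompt({ functionName, line, description, code }) {
  const fields = { functionName, line, description, code };
  const missing = Object.keys(fields).filter(
    (key) => fields[key] === undefined || fields[key] === ""
  );
  if (missing.length > 0) {
    throw new Error(`Missing template fields: ${missing.join(", ")}`);
  }
  return [
    "DEBUGGING RULES (FOLLOW EXACTLY):",
    "Do NOT rewrite, restructure, or refactor any code",
    "Make only the smallest possible change to fix the described bug",
    "Preserve all variable names, logic structure, and formatting",
    "Do NOT add or remove comments",
    "Output ONLY the changed lines in unified diff format",
    "",
    `BUG LOCATION: ${functionName} at approximately line ${line}`,
    `BUG DESCRIPTION: ${description}`,
    "",
    "CODE (isolated function only):",
    code,
    "",
    "STEP 1: Diagnose the root cause in plain language (no code yet).",
    "STEP 2: After I confirm your diagnosis, provide the diff fix only.",
  ].join("\n");
}

const debugPrompt = buildDebugPrompt({
  functionName: "calculateTotal",
  line: 42,
  description: "Total is 1 higher than the sum of item prices. Expected 15 for prices 10 and 5, got 16.",
  code: "function calculateTotal(items) {\n  return items.reduce((sum, item) => sum + item.price, 1);\n}",
});
console.log(debugPrompt);
```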

Frequently Asked Questions

Why does ChatGPT keep changing code I didn’t ask it to touch?

ChatGPT’s token generation optimizes for coherent, complete output by default. Without an explicit prompt constraint instruction defining scope, the model treats all submitted code as context it’s permitted to improve. It isn’t selectively ignoring your request — it’s doing exactly what its training rewards: producing clean, complete code. Use the surgical constraint header in Step 2 to lock its behavior to the named scope only. OpenAI Community Forum

Is there a ChatGPT setting to stop it from rewriting code automatically?

There is no built-in “minimal edit” toggle in ChatGPT’s UI as of 2026. All control is prompt-based. However, if you access ChatGPT through the API or build a custom GPT, you can embed the surgical constraint rules directly into the system prompt — making them apply automatically to every message without retyping them. This is how I set up shared debugging environments for team workflows.
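For the API route, the idea is simply that the rules travel as a system message ahead of every user message. The sketch below only builds the request body and sends nothing. It assumes the chat-style `messages` array with `system` and `user` roles; the model name is a placeholder, and `buildChatPayload` is a hypothetical helper, so check the current API documentation before wiring it to a real client.

```javascript
// Rules from the constraint header, stored once and reused for every request.
const SURGICAL_RULES = [
  "Do NOT rewrite, restructure, or refactor any code.",
  "Make only the smallest possible change to fix the issue described.",
  "Preserve all existing logic, variable names, and structure exactly.",
  "Output ONLY the changed lines in unified diff format.",
].join("\n");

// Builds a request body; the caller supplies the model name and sends it.
function buildChatPayload(userPrompt, model) {
  return {
    model,
    messages: [
      { role: "system", content: SURGICAL_RULES },
      { role: "user", content: userPrompt },
    ],
  };
}

const payload = buildChatPayload(
  "Bug: handleLogin() returns true even when the password is wrong. Code follows.",
  "YOUR_MODEL_NAME" // placeholder, not a real model identifier
);
console.log(JSON.stringify(payload, null, 2));
```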

What is the best way to give ChatGPT code to fix without it going off-track?

Paste only the isolated function (never the full file), prefix your prompt with the constraint header, name the exact function and line number, and request diff-format output. This four-element structure closes all three root cause failure modes simultaneously. Start there before trying anything else: it resolves the majority of rewrite-instead-of-fix cases without needing the full 7-step protocol.

Does this rewriting problem affect GPT-4o and o3 as well?

Yes. All current ChatGPT models exhibit this behavior to varying degrees because it stems from how LLMs generate tokens — a completeness bias — not from a model-version-specific flaw. In my experience, newer models respond more precisely to constraints, but prompt discipline remains mandatory regardless of which model you’re using. The architecture of the problem is the same across versions.

What does “ask for a diff” mean and how do I use it with ChatGPT?

A diff (short for “difference”) is a standard software format showing only the lines that changed — marked + for additions and - for removals. Simply end your prompt with: “Show your fix as a unified diff only, not the full file.” ChatGPT will return a compact, reviewable change block. This is the most structurally reliable way to prevent a full rewrite response. OpenAI Developer Docs

What if ChatGPT’s diagnosis in Step 5 is completely wrong?

This happens more than most developers admit. When it does, don’t accept the diagnosis and don’t let ChatGPT proceed to code. Simply respond: “That diagnosis is incorrect. The issue is specifically in [describe what you believe is happening]. Revise your analysis based on that constraint.” Push back once or twice before accepting a diagnosis — the model often self-corrects when challenged with specific counter-information. This loop is faster than recovering from a misdirected rewrite.

Reviewed and tested by Ice Gan — AI Tools Researcher, AIQnAHub. 33 years IT experience.

References & Sources

- OpenAI Developer Docs — Prompt Engineering

- arXiv — ChatGPT for Code Refactoring: Analyzing Topics, Interaction, and…

- OpenAI Community Forum — How Do I Force ChatGPT to Not Modify Code in a File

- VentureBeat — AI Can Fix Bugs But Can’t Find Them

- Reddit ChatGPTCoding — How Do You Motivate ChatGPT to Give the Right Code
