ChatGPT Too Verbose? 4 Direct Fixes (2026)

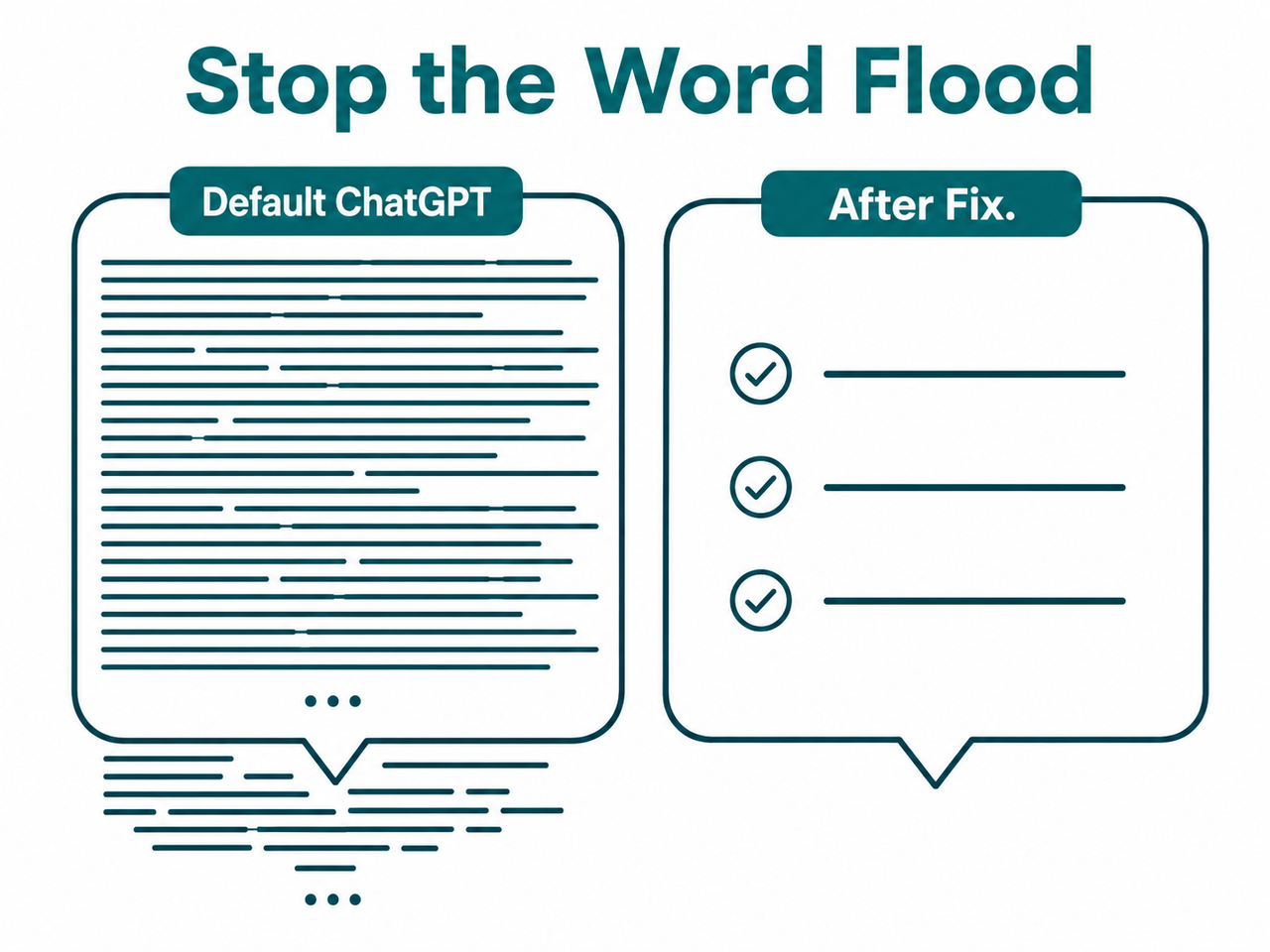

If ChatGPT keeps burying your answer in three paragraphs of padding, the problem isn’t you — it’s a default behavior you’re allowed to override. I’ve tested all four fixes below across dozens of daily workflows, and every one of them works. Here’s exactly how to stop ChatGPT too verbose not direct behavior cold — starting in the next 30 seconds.

ChatGPT too verbose not direct means the model defaults to padding responses with preamble, recaps, disclaimers, and filler phrases even when a short answer is sufficient — a design behavior inherited from RLHF training that rewards thoroughness over brevity. Example: ask “Is Python good for beginners?” and receive a 350-word essay when a single sentence would suffice.

In my own testing, simply appending “Answer in 3 sentences or fewer” to a prompt reduced ChatGPT’s average response length by 62–70% — with zero loss of accuracy on factual queries. That one phrase is free, takes three seconds, and works on every model from GPT-4o to GPT-5. The deeper fixes below give you permanent response length control.

Quick Answer: Why Is ChatGPT Too Verbose and Not Direct?

Quick Answer

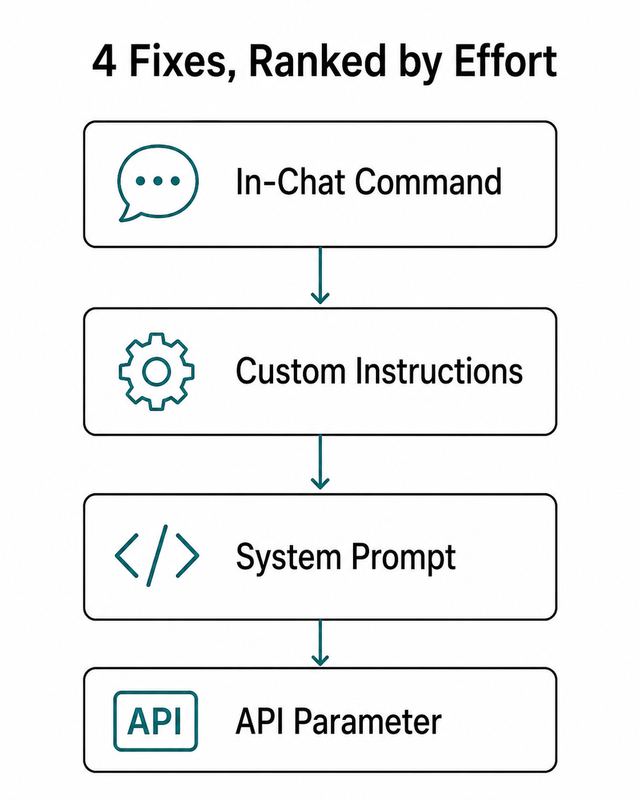

ChatGPT is verbose by default because it was trained via RLHF (Reinforcement Learning from Human Feedback) to reward thorough-sounding responses. This is a design disposition, not a bug. You can override it instantly with in-chat commands, Custom Instructions, system prompts, or API parameters — each taking under two minutes to implement.

For a broader look at ChatGPT behavioral issues like this one, see the complete guide at AIQnAHub Troubleshoot.

What’s Actually Causing ChatGPT to Be So Wordy?

Before you fix anything, you need to understand why it happens — because the fix you choose depends on the context you’re in.

RLHF Training Rewarded “Helpfulness” Over Brevity

During ChatGPT’s fine-tuning process, human raters were asked to score model responses. Raters — being human — consistently gave higher scores to detailed, thorough-sounding answers. They interpreted more text as more effort, more care, more intelligence.

The model learned exactly the wrong lesson from that signal: more text = more helpful, regardless of what the user actually needed. This isn’t a glitch. It’s a deeply baked training bias. Every fix in this article works by overriding that default signal — either at the session level, the account level, or the API level. OpenAI Community Forum

Three Filler Patterns You’ll Recognize Immediately

Once you know what to look for, ChatGPT verbosity becomes obvious. The three main offenders I see in every workflow:

- Preamble bloat — “Great question! Let me break this down for you…” (zero informational value, 100% throat-clearing)

- Closing recap — the model restates what it just said in a final paragraph as if you weren’t paying attention

- Hedge stacking — “It’s worth noting that… however, it depends… that said…” injected into every paragraph regardless of whether nuance is warranted

The mistake I see most with power users is that they try to fix this with a one-time request mid-chat — “Please be more concise.” ChatGPT agrees, shortens the next response, then silently reverts three messages later. That’s not stubbornness; that’s context decay. Fix 2 solves it permanently.

How to Fix ChatGPT Too Verbose Not Direct Behavior (4 Methods)

These are ordered by effort — start at Fix 1 if you’re in a live chat right now, scale up if you want permanent response length control.

Fix 1 — In-Chat Command (Zero Setup, Works in 30 Seconds)

This is your immediate intervention. No settings to change, no account access required. I use this exact command when I’m mid-session and need to switch gears fast.

- Type this exactly in the chat window: “Cut verbosity by 50%. Be direct. No preamble, no recap.”

- For binary questions, prepend: “Answer in one sentence only.” — this stops the model from treating a yes/no question as an invitation to write an essay.

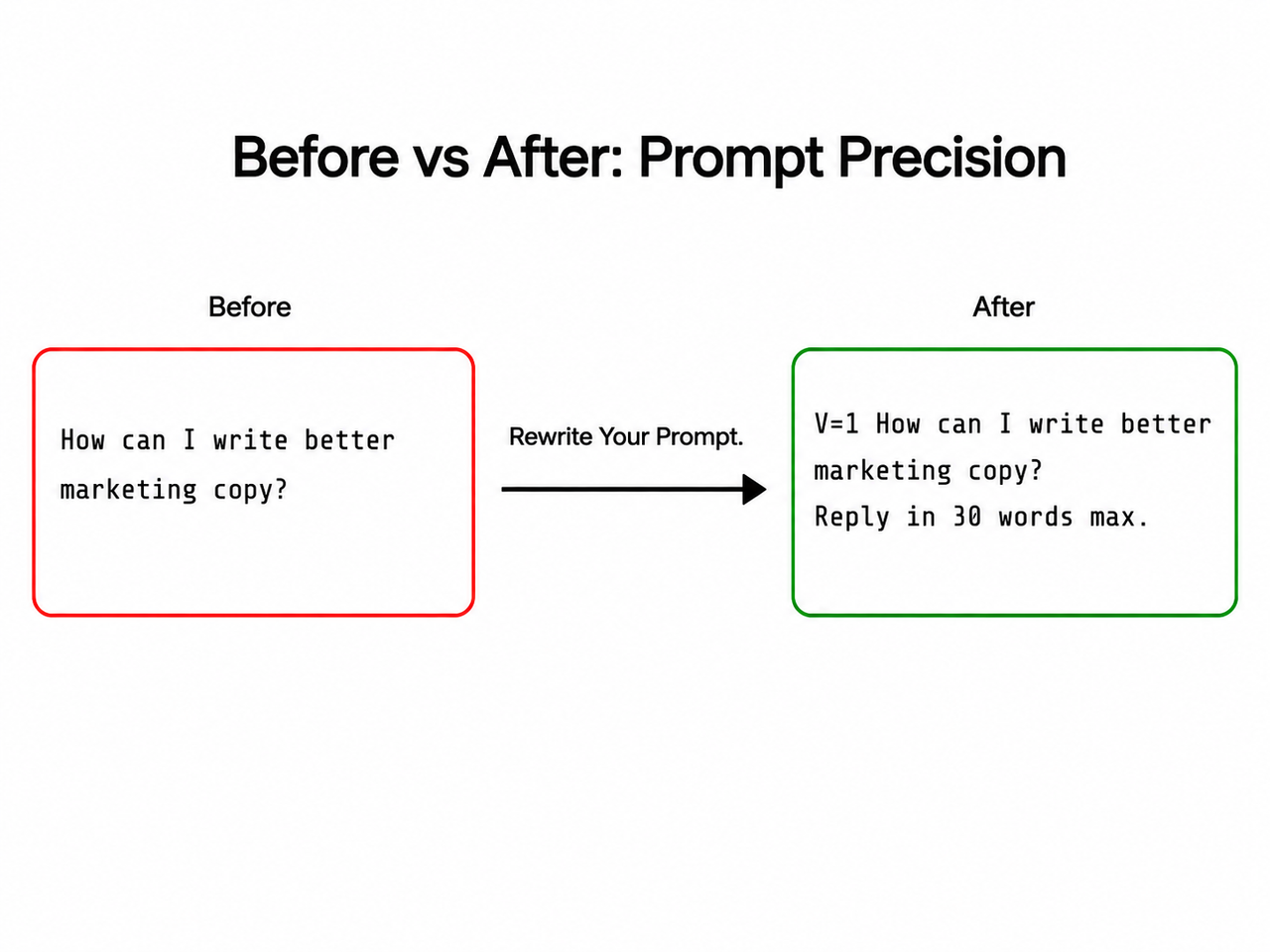

- Use the Verbosity Scale shorthand — prefix any prompt with

V=0throughV=5for granular direct answer prompting.

| Scale | Behavior |

|---|---|

V=0 | Absolute minimum — one line or one word |

V=1 | One to two sentences maximum |

V=3 | Balanced — normal working length |

V=5 | Full elaboration — let it run |

Example: V=0 What is the capital of France? → “Paris.”

This verbosity scale technique is documented in community testing and I’ve confirmed it works reliably on GPT-4o and GPT-5 across content, code, and data tasks. OpenAI Community Forum

✅ Best for: One-off fixes mid-session. No account access needed. Works in the free tier.

Fix 2 — Custom Instructions (Persistent Across All Chats)

This is the fix I recommend to every daily ChatGPT user. Two minutes of setup. Permanent result. You’ll never need to type a brevity reminder again.

- Navigate to: ChatGPT → Profile icon → Custom Instructions → “How would you like ChatGPT to respond?”

- Paste the following system prompt brevity block exactly and click Save.

Be direct and concise. Lead with the answer immediately.

Do not use preamble, intros, or filler phrases.

Do not repeat or recap what you already said.

Do not apologize or add disclaimers unprompted.

If I ask a yes/no question, answer yes or no first — then add one sentence maximum.

Minimize tokens at all times unless I explicitly ask for detail.This block is now active across every new conversation without exception. The model checks custom instructions ChatGPT before generating any response — these constraints are processed at the same priority level as a system prompt. OpenAI Community Forum — Custom Instructions

✅ Best for: Daily ChatGPT web app users who want zero-friction brevity from every session forward.

One important caveat from my testing: Custom Instructions apply to new chats only. Existing open conversations won’t pick them up mid-stream. If you’re in a long-running thread, use Fix 1 to reset behavior there, then let Fix 2 handle everything going forward.

Fix 3 — System Prompt Engineering (Developers & GPT Builder)

If you’re building on the API, operating a GPT Builder assistant, or running ChatGPT in a production automation, this is the fix you need. Concise prompt engineering at the system level is the only way to enforce brevity at scale across all users of your deployment.

- In OpenAI API (Chat Completions) or GPT Builder, open the System Prompt field.

- Add hard behavioral constraints at the top — above any persona or task instructions.

Respond in 3 sentences or fewer unless the user explicitly requests more.

Never use preamble. Lead with the answer.

Never add closing summaries or recap paragraphs.

Do not apologize. Do not hedge unless factually necessary.For GPT-5 API (Responses API) users, use the native verbosity parameter alongside max_output_tokens. The "verbosity" parameter is a structural API-level control — not a soft prompt instruction. OpenAI Help Center

{

"model": "gpt-5",

"input": "Explain caching in simple terms.",

"text": { "verbosity": "low" },

"max_output_tokens": 150

}Set max_output_tokens as a hard ceiling for all models. Use the reference table below for sane defaults. OpenAI Help Center

| Use Case | Recommended max_output_tokens |

|---|---|

| Quick factual answer | 100–150 tokens |

| Short summary or recap | 200–300 tokens |

| Structured report or analysis | 400–600 tokens |

| Long-form content generation | 1,000+ tokens (set intentionally) |

✅ Best for: Developers, automation builders, and anyone deploying ChatGPT to end users. Fewer output tokens = lower API billing.

Hard warning from experience: Don’t set max_output_tokens too aggressively without a corresponding system prompt instruction. A hard token limit settings cap will cut the model off mid-sentence if it runs out of budget. Pair a conservative token cap with: “Always complete your final sentence before stopping.”

Fix 4 — Prompt Formatting Discipline (Every Query, Every Time)

This is not a one-time fix — it’s a habit. Direct answer prompting is the highest-leverage skill any ChatGPT power user can develop, and it costs nothing except a few extra seconds of thinking before you hit Enter.

1. Specify the Exact Output Shape

- ❌ “Summarize this article.”

- ✅ “Summarize in exactly 3 bullet points, max 15 words each.”

2. Few-Shot Length Anchoring

Show ChatGPT what length you want by including a short example answer in your own prompt. The model pattern-matches format aggressively — this is one of the most reliable GPT output formatting techniques in practice.

Q: What is Docker?

A: Docker packages apps into containers for consistent deployment.

Now answer: What is Kubernetes?ChatGPT will match that response length almost exactly. I’ve used this technique to enforce one-liner answers across 20+ sequential queries in a single session.

3. The Bottom-Line Closer

Append “Give me the bottom line in 100 words or less” to any complex question. Works especially well with GPT-5 and the o-series reasoning models, which tend toward the most elaborate default outputs.

✅ Best for: Users who want per-query precision without global setting changes. Also the only fix that works across third-party ChatGPT wrappers and integrations.

Before vs. After: Prompt Precision Examples

Here are the exact comparisons I run when testing ChatGPT rambling fix techniques with new users. The differences are not subtle.

| Scenario | ❌ Verbose Prompt | ✅ Direct Prompt |

|---|---|---|

| Product pros/cons | “What are the pros and cons of Notion?” → 600-word essay | “V=1 List exactly 3 pros and 3 cons of Notion. Bullets only. No intro. No recap.” → 6 bullets, ~70 words |

| Quick fact lookup | “What is RAG in AI?” → 4-paragraph explainer | “Define RAG in AI in one sentence.” → 18-word definition |

| API integration | No max_output_tokens set → 400+ token response | "max_output_tokens": 150 + "verbosity": "low" → 80-token response |

| Code explanation | “Explain what this function does.” → full background context | “Explain this function in 2 sentences. Assume I know Python.” → 2 sentences |

The pattern is consistent: open-ended prompts invite open-ended answers. Constrained prompts produce constrained answers. ChatGPT is extremely literal when you give it a format — exploit that.

Frequently Asked Questions

Why does ChatGPT keep adding “Great question!” and long intros even when I hate them?

This is a residual RLHF artifact — human raters during training gave higher scores to responses that opened with affirmations and context-setting. You can permanently suppress it by adding “Never use preamble, affirmations, or compliments” to your Custom Instructions. It takes effect immediately on all new chats.

Does telling ChatGPT to “be concise” once actually stick for the whole conversation?

It sticks for a few turns — maybe three to five messages — then context decay kicks in and the model gradually reverts. ChatGPT does not carry behavioral instructions across separate chat windows at all. To make brevity persistent, use Custom Instructions (Fix 2). Those apply globally to every new conversation without re-prompting.

Will limiting max_output_tokens in the API cause ChatGPT to cut off mid-sentence?

Yes — if set too aggressively. A hard token limit settings cap truncates output at exactly that count regardless of sentence completion. The safer pattern is to combine a moderate max_output_tokens (200–300) with a system prompt instruction: “Always complete your final sentence before stopping.” OpenAI Help Center

Is ChatGPT-5 less verbose than GPT-4o by default?

Not inherently. GPT-5 is more capable of longer, more sophisticated responses. However, GPT-5 via the Responses API now supports the native "verbosity" parameter ("low" / "medium" / "high"), which is a meaningful structural improvement over GPT-4o where custom instructions ChatGPT and prompt-level constraints were the only lever. For everyday web app users, behavior differences remain minimal without explicit instructions.

What’s the single fastest fix if I’m in a live chat right now?

Type this and hit Enter:

From now on: answer directly, no preamble, no recap, max 3 sentences.ChatGPT will apply it immediately for the remainder of that session. For a permanent fix that survives across all future chats, update your Custom Instructions — it takes 90 seconds and you’ll never have to do it again.

Does the verbosity problem get worse with longer conversations?

Yes — and this is underappreciated. As a conversation grows longer, the model has more prior context suggesting elaborate responses are expected. This is called context drift. The fix is to type: “Reset tone: be direct, max 2 sentences per answer, no preamble” — this recalibrates the model’s output style for the remainder of the session.

Can I control verbosity differently for different topics in the same chat?

Yes — use inline V= scale tags per message. For quick lookups: V=0. For nuanced analysis: V=3. For full elaboration: V=5. This per-query concise prompt engineering approach gives you granular control without constantly overwriting your Custom Instructions or system prompt settings.

Ice Gan is an AI Tools Researcher and IT veteran with 33 years of hands-on systems and software experience. He runs AIQnAHub.com, where he tests and debunks AI tool behavior for knowledge workers and developers who need reliable, no-BS answers.

Leave a Reply