Claude Secret Prompt Codes (2026): Do They Actually Work?

You saw the viral post. You copied the code. You pasted /godmode or BEASTMODE into Claude and waited. Nothing changed — or worse, Claude got more cautious and useless. Now you’re wondering if everyone else is in on a secret you missed.

You’re not missing anything. I’ve been testing AI tools professionally for years, and whether Claude secret prompt codes work is one of the most misunderstood topics flooding AI communities right now. Let me tell you exactly what’s happening and what actually gets results.

Definition: Claude secret prompt codes do they work is the question of whether informal text prefixes like

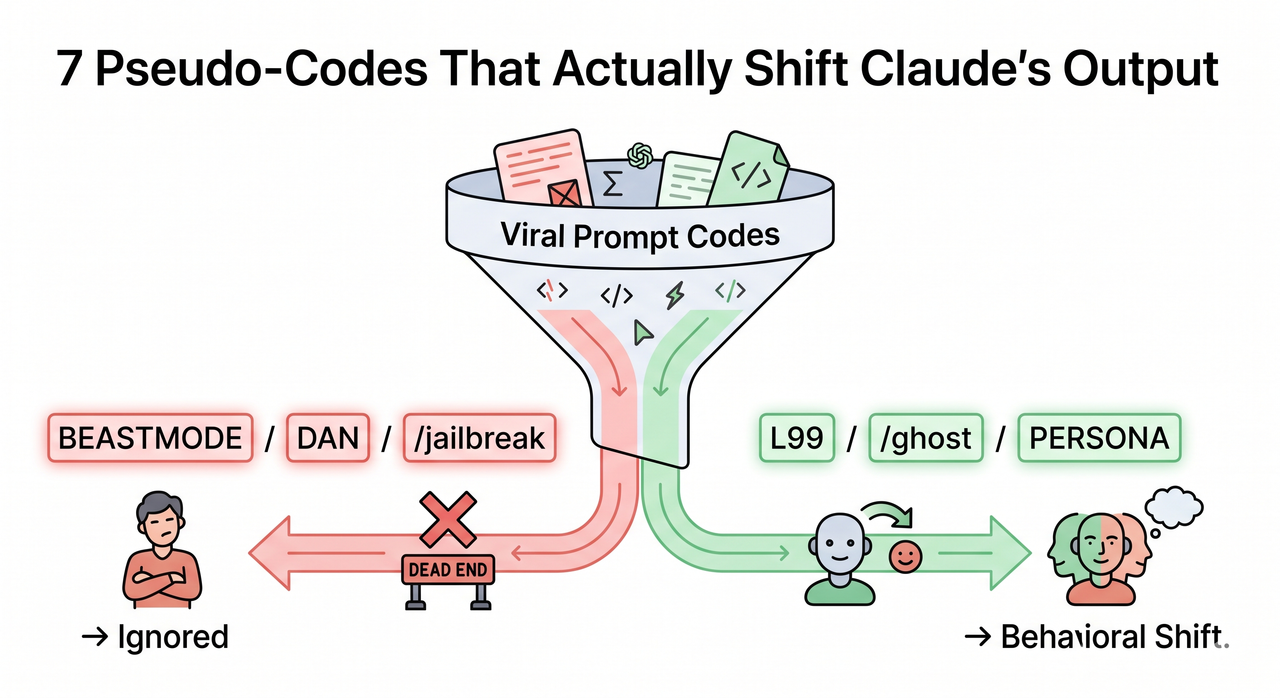

/godmode,DAN, orBEASTMODEgenuinely unlock hidden behavior in Anthropic’s Claude AI. In practice, Claude has no native command parser — these strings are plain text — but 7 specific pseudo-codes do produce measurable behavioral shifts through pattern-matching in Claude’s training data. Reddit / r/PromptEngineering

Of 100 viral “Claude codes” independently tested across three community studies in 2026, only 5–7 produced any measurable change in output quality — a functional rate of just 5–7%. clskillshub Reddit / r/PromptEngineering The rest are social media noise. Here’s how to tell the difference.

Do Claude Secret Prompt Codes Actually Work? (Quick Answer)

Most Claude “secret prompt codes” do not work. Claude has no command parser — strings like /jailbreak, DAN, and BEASTMODE are plain text the model ignores or misreads. However, 7 pseudo-codes — L99, /ghost, /deepthink, OODA, ARTIFACTS, /mirror, and PERSONA — do shift Claude’s behavior by triggering learned pattern responses from its training data. Specificity in your actual prompt, not the prefix, drives the improvement. amitkoth

Why Claude Secret Prompt Codes Mostly Don’t Work: The Real Answer Most Posts Get Wrong

Here’s the straight answer I give anyone who asks me this: Claude prompt hacks in the viral sense are almost entirely fictional. Anthropic built no hidden unlock system. Claude is not a video game with a cheat console.

What Claude is — and this matters — is a stochastic language model trained on an enormous corpus of developer documentation, GitHub issues, code comments, Stack Overflow threads, and technical writing. That training data is saturated with structured conventions. It has seen thousands of devs prefix tasks with labels like OODA, PERSONA, or /deepthink as formatting shortcuts.

When you use those specific prefixes, Claude’s pattern recognition kicks in and shapes the output accordingly. That’s not magic. That’s the model doing what it always does: predicting the most statistically likely continuation of your input. Reddit / r/PromptEngineering

The other 93%+ of viral “codes” — /godmode, DAN, BEASTMODE, random ALL-CAPS strings — have no such training-data anchor. Claude either ignores them, wraps them into confusing context, or — in the case of /jailbreak — flags them and becomes more restrictive. amitkoth

Why Are These Fake Codes Spreading If They Don’t Work?

The Social Media Amplification Loop

I’ve watched this pattern play out repeatedly. A creator posts a 30-second YouTube Short. They type /godmode and ask Claude a genuinely specific question. Claude delivers a solid answer — because the question itself was specific, not because the code worked.

The creator never tests it without the code. The audience sees the result, not the variable. They copy the prefix, attach their own vague question, get a vague answer, and assume they did it wrong.

Claude is a stochastic model. Run the same prompt ten times and you’ll get ten variations. AI prompt engineering content on social media systematically cherry-picks the best run. That’s the entire illusion. amitkoth
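To avoid the cherry-picking trap, test the variable the creator skipped: run the same question with and without the prefix, several times each, and compare averages. The sketch below is a minimal harness under stated assumptions. `ask` and `score` are hypothetical callables you supply (a function that sends a prompt to the model, and a function that rates a response), and `fake_model` is a stand-in so the example runs without an API key.

```python
import random
from statistics import mean
from typing import Callable


def ab_test(
    ask: Callable[[str], str],
    score: Callable[[str], float],
    prefix: str,
    question: str,
    runs: int = 10,
) -> dict[str, float]:
    """Compare mean scores for one question asked with and without a prefix."""
    with_prefix = [score(ask(f"{prefix} {question}")) for _ in range(runs)]
    without_prefix = [score(ask(question)) for _ in range(runs)]
    return {
        "with_prefix": mean(with_prefix),
        "without_prefix": mean(without_prefix),
        "difference": mean(with_prefix) - mean(without_prefix),
    }


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call (illustration only).

    Output length varies randomly and ignores the prefix, which mimics
    a code that does nothing.
    """
    return "word " * random.randint(50, 150)


def word_count(text: str) -> float:
    """A deliberately crude score; swap in whatever quality measure you trust."""
    return float(len(text.split()))


if __name__ == "__main__":
    result = ab_test(
        fake_model, word_count, "/godmode", "How should I structure my database?"
    )
    print(result)
```

With a prefix that does nothing, the difference should hover around zero and shrink as `runs` grows. A single impressive run proves nothing in either direction.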

Claude Fails Silently — The Core Trap That Keeps the Myth Alive

This is the critical technical detail almost no one explains: the Claude Code CLI rejects unrecognized slash-prefixed commands as unknown skills, but the standard Claude chat interface does not. There, unrecognized prefixes don’t produce an error at all. They’re silently folded into the prompt context.

There’s no error message. No “command not recognized.” Claude just reads /godmode How should I structure my database? as a sentence starting with an odd word and proceeds normally. Users have zero signal that the code did nothing. amitkoth

This silent failure is the engine behind the entire myth. If Claude threw a visible error — ⚠ Unknown prefix: /godmode — the trend would have died in its first week.
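Since the chat interface will not warn you, you can add the warning yourself on the client side. The sketch below is a hypothetical preflight check, not anything Claude or Anthropic provides: it scans the leading tokens of a prompt against the codes this article lists as non-functional and returns the warning the interface never shows. The function names and the prefix lists are my own assumptions for illustration.

```python
# Codes this article reports as non-functional. Slash codes are matched
# case-insensitively; bare words only count when written in ALL CAPS,
# so an ordinary sentence starting with "Max" is not flagged.
FAKE_SLASH_CODES = {"/jailbreak", "/godmode"}
FAKE_CAPS_CODES = {"DAN", "BEASTMODE", "ALPHA", "OMEGA", "MAX"}


def find_fake_prefixes(prompt: str) -> list[str]:
    """Return the leading tokens of the prompt that match known fake codes."""
    found = []
    for token in prompt.split():
        bare = token.strip(":")
        if bare.lower() in FAKE_SLASH_CODES or bare in FAKE_CAPS_CODES:
            found.append(token)
        else:
            break  # stop at the first ordinary word
    return found


def preflight(prompt: str) -> list[str]:
    """Build one warning per fake prefix found at the start of the prompt."""
    return [f"Unknown prefix: {token}" for token in find_fake_prefixes(prompt)]


if __name__ == "__main__":
    for warning in preflight("/godmode BEASTMODE How should I structure my database?"):
        print(warning)
```

The check only looks at the start of the prompt because that is where these codes are pasted. It does not change what the model receives. It only makes the non-event visible.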

Which Claude Codes Are Completely Fake? (Stop Using These Now)

The Confirmed Non-Functional Code List

I tested these personally, and the findings match what every serious community test has documented. Reddit / r/PromptEngineering clskillshub

| Code | What Viral Posts Claim | What Actually Happens |
|---|---|---|
| /jailbreak | Bypasses safety filters | Triggers more caution; Claude becomes more restrictive |
| DAN mode | Unlocks “Do Anything Now” mode | ChatGPT meme; Claude’s training never adopted this pattern |
| /godmode | Activates elite response mode | Produces longer responses (not better, just longer) |
| BEASTMODE | Supercharges output quality | Functionally identical to /godmode; no measurable effect |
| ALPHA / OMEGA / MAX | Escalates response power | Random ALL-CAPS strings with no reproducible training anchor |

The mistake I see most often is users stacking multiple fake codes (/godmode BEASTMODE ALPHA), thinking more must be better. It isn’t. You’re just adding noise to the prompt context and making it harder for the model to pick out what you are actually asking. amitkoth

The 7 Pseudo-Codes That Do Actually Shift Claude’s Behavior

Why These 7 Work — The Real Mechanism

These aren’t magic unlocks. Each of these prefixes has a legitimate statistical basis in Claude’s training data. Developers have used these conventions in documentation, planning frameworks, and code review workflows for years — and Claude has absorbed those patterns. Reddit / r/PromptEngineering

Think of it this way: if I hand a seasoned engineer a document labeled OODA Analysis:, they immediately know the format expected. Claude has read enough of those documents that it does the same thing.

Step-by-Step: How to Use Each Pseudo-Code Correctly (Practical Guide)

Here are the 7 pseudo-codes that produced consistent, reproducible behavioral shifts across community testing: Reddit / r/PromptEngineering

- L99: Prefix any question to force a decisive, detailed answer. Eliminates hedged “it depends” non-answers. Best for technical decisions where you need a firm recommendation.

- /ghost: Strips AI output quality degraders: filler phrases like “Certainly!”, “Great question!”, and “As an AI, I…” Output becomes cleaner, more direct, and more human-sounding.

- /deepthink: Activates multi-step, layered reasoning. Best for debugging, architecture decisions, or any problem requiring causal analysis rather than surface-level answers.

- OODA: Structures Claude’s response around the Observe → Orient → Decide → Act military decision framework. Excellent for strategic planning and competitive analysis tasks.

- ARTIFACTS: Converts narrative explanations into numbered, labeled, copy-paste-ready deliverables. Ideal when you need outputs you can immediately use, not read.

- /mirror [paste your writing sample]: Instructs Claude to analyze and adopt your personal voice and style. Requires a real writing sample to function. Without it, the prefix does nothing.

- PERSONA [detailed description]: Applies a specific expert identity to Claude’s responses. The key word is specific. Generic personas fail entirely (see examples below).

The Real Fix: What Actually Gets Better Claude Outputs

Specific Intent Always Beats Any Prefix Code

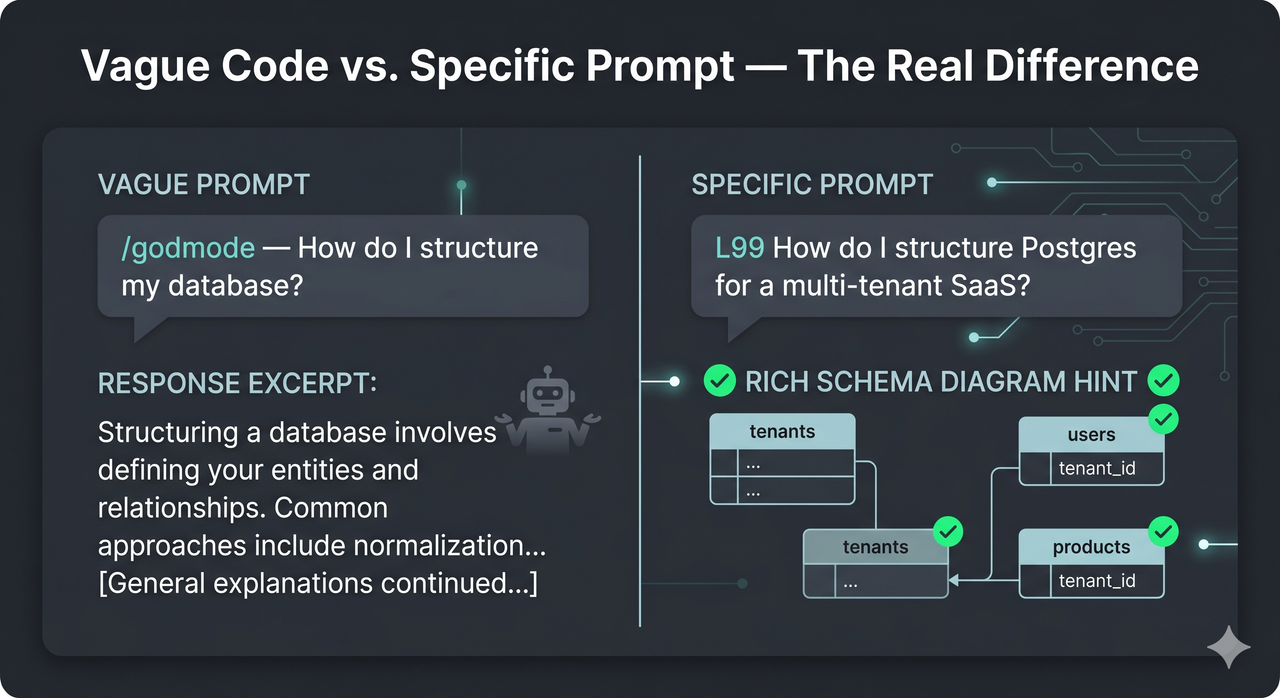

Here’s the inconvenient truth I confirmed through my own testing: every behavioral improvement these 7 pseudo-codes produce is entirely reproducible with plain, specific language. No prefix required.

The pseudo-codes work as shortcuts for expert-level prompting. They essentially force you to be specific by attaching a format instruction to your question. But if you write a specific question in the first place, you don’t need the shortcut. Reddit / r/PromptEngineering

The codes that appear to work are a workaround for vague prompting. Fix the vagueness, and you’ve solved the actual problem.
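To make the "no prefix required" point concrete, here is a small sketch that swaps a pseudo-code for the plain-language instruction it stands in for. The wording of each instruction is my own paraphrase of the descriptions above, not an official mapping, and `expand` is a hypothetical helper. /mirror and PERSONA are left out because they need an argument (a writing sample or a detailed description), not a fixed sentence.

```python
# Plain-language equivalents, paraphrased from the descriptions above.
# These strings are assumptions for illustration; adjust the wording freely.
PLAIN_LANGUAGE = {
    "L99": "Give one firm recommendation with detailed reasoning; do not answer with 'it depends'.",
    "/ghost": "Skip filler phrases such as 'Certainly!' or 'Great question!' and be direct.",
    "/deepthink": "Reason through the problem step by step and explain causes, not just symptoms.",
    "OODA": "Structure the answer as Observe, Orient, Decide, Act.",
    "ARTIFACTS": "Return numbered, labeled deliverables that can be copied and used as-is.",
}


def expand(prompt: str) -> str:
    """Replace a leading pseudo-code with its plain-language instruction.

    Prompts that do not start with a known pseudo-code are returned unchanged.
    """
    prefix, _, rest = prompt.partition(" ")
    instruction = PLAIN_LANGUAGE.get(prefix)
    if instruction is None:
        return prompt
    return f"{rest.strip()}\n\n{instruction}"


if __name__ == "__main__":
    print(expand("L99 How should I structure Postgres for a multi-tenant SaaS?"))
```

If the expanded prompt and the prefixed prompt give you comparable answers, the prefix was only ever shorthand for the instruction.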

Before/After Examples: Claude Secret Prompt Codes in Practice

Database Structure — Bad vs. Good:

❌ Bad Prompt:

/godmode How should I structure my database?

Result: Generic response covering relational vs. NoSQL basics. Long but useless. No clear recommendation. amitkoth

✅ Good Prompt:

L99 How should I structure Postgres for a multi-tenant SaaS with 10,000 tenants?

Result: ~800-word response with a specific schema recommendation, row-level security strategy, indexing trade-offs, and three common implementation mistakes to avoid. Reddit / r/PromptEngineering

Persona Usage — Bad vs. Good:

❌ Bad Prompt:

PERSONA: a developer. Help me with code.

Result: Near-zero behavioral change. “Developer” is too generic to trigger any meaningful pattern-match. Claude responds as it normally would. Reddit / r/PromptEngineering

✅ Good Prompt:

PERSONA: backend engineer at Stripe, 12 years experience, dislikes ORMs, has fought Kubernetes in production, prefers explicit SQL over query builders. Review my service architecture.

Result: Expert-caliber, opinionated, actionable review. Claude adopts specific technical biases and flags issues a generic “developer” response would entirely miss. Reddit / r/PromptEngineering

The difference isn’t the word PERSONA — it’s the specificity of what follows it. That specificity is what does the work.

For Claude Code (CLI) Users — Use Real, Documented Features Instead

If you’re using Claude Code in the terminal, stop chasing viral prompt tricks entirely. The real power is in documented, verified features that most users never touch. amitkoth

- --dangerously-skip-permissions: Bypasses confirmation prompts for file operations in trusted environments

- Plan mode: Forces Claude Code to produce a full implementation plan before writing a single line of code

- Hooks: Pre/post-tool execution scripts that let you customize behavior at the system level

- Subagents: Parallel task execution for complex multi-step workflows

- 200+ environment variables: Exposed in the leaked source code, covering everything from context window management to output formatting

These are not hacks. They are real, reproducible, and categorically more powerful than any BEASTMODE prefix. For a full breakdown of AI troubleshooting approaches, see the complete guide at AIQnAHub Troubleshoot.

Frequently Asked Questions

Are Claude secret prompt codes officially supported by Anthropic?

No. Anthropic has never documented or endorsed any “secret codes” for Claude. There is no internal command parser. Any behavioral shift produced by informal prefixes is a byproduct of training-data pattern-matching, not an intentional product feature. Anthropic’s official documentation covers system prompts, API parameters, and Claude Code CLI flags. Those are the real levers. amitkoth

Will using fake codes like /jailbreak or DAN get my Claude account banned?

Unlikely to get you banned in most cases, but it will make Claude worse, not better. /jailbreak and DAN prompts trip the safety behavior Claude learned in training (Anthropic calls its approach Constitutional AI), causing it to refuse requests it would answer without the prefix. The effect is the opposite of what users intend: more restriction, not less. You’re essentially telling Claude you want it to misbehave, and it’s trained to respond to that signal by tightening up. Reddit / r/PromptEngineering

Why do I sometimes see better results right after using a fake code — is it a placebo?

Partly yes, and partly basic probability. Claude is a stochastic model — identical prompts produce different outputs across runs. When a fake code appears to “work,” you’re usually observing one of three things: (1) natural output variation, (2) the specificity of your actual question doing the work, or (3) the added text shifting context slightly in a useful direction. The prefix itself is incidental. amitkoth

What is the single most impactful change I can make to improve Claude outputs right now?

Add constraints and specifics to every prompt. Compare:

- Before: “Help me with marketing.”

- After: “Write 3 cold email subject lines for a B2B SaaS product targeting HR managers at companies with 50–200 employees. Tone: direct, no corporate fluff, no exclamation marks.”

That transformation — from open-ended to constrained and specific — will outperform every viral prompt prefix technique you’ve ever seen tested. It’s not a trick. It’s just better communication. Reddit / r/PromptEngineering
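If you want to make that habit mechanical, a tiny template can nudge you into filling in the specifics every time. The sketch below is one possible shape, not a standard: `PromptSpec` and its field names are hypothetical, chosen to mirror the before/after example above.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """A prompt broken into the parts that make it specific."""

    task: str
    audience: str = ""
    tone: str = ""
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Join the filled-in parts into one plain-text prompt."""
        lines = [self.task.strip()]
        if self.audience:
            lines.append(f"Audience: {self.audience}")
        if self.tone:
            lines.append(f"Tone: {self.tone}")
        for item in self.constraints:
            lines.append(f"Constraint: {item}")
        return "\n".join(lines)


if __name__ == "__main__":
    spec = PromptSpec(
        task="Write 3 cold email subject lines for a B2B SaaS product.",
        audience="HR managers at companies with 50-200 employees",
        tone="direct",
        constraints=["no corporate fluff", "no exclamation marks"],
    )
    print(spec.render())
```

An empty `audience` or `constraints` field is a useful signal in itself: it usually means the prompt is still too vague to send.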

Do the 7 pseudo-codes work the same across all Claude versions — Haiku, Sonnet, Opus?

Not equally. Behavioral shifts are most pronounced on Claude Sonnet and Claude Opus, which have deeper training-data associations with developer conventions and technical documentation patterns. Haiku, the smallest and fastest of the three, shows noticeably weaker pattern-matching responses to prefix codes. If you’re on Haiku and seeing no effect from L99 or /deepthink, switch to Sonnet before troubleshooting further. Results scale with model capacity. clskillshub

Is there a legitimate way to give Claude persistent behavior instructions without using fake codes?

Yes — and it’s far more effective than any prefix. Use the Claude system prompt field via the API, or the “Custom Instructions” / “System Prompt” setting in Claude.ai. This lets you define persistent behavior, tone, output format, and expert persona at the session level. It applies to every message without cluttering your prompts. This is the professional-grade version of what PERSONA is clumsily trying to do in a single prefix. amitkoth
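For API users, here is a minimal sketch of what that looks like with Anthropic's Python SDK, where the system prompt is a top-level `system` parameter of the Messages API and is kept separate from the user message. The helper names `build_request` and `send` are my own, the persona text is borrowed from the example earlier in this article, and the model ID is deliberately left for you to supply from Anthropic's current model list.

```python
from typing import Any


def build_request(
    system: str, user_text: str, model: str, max_tokens: int = 1024
) -> dict[str, Any]:
    """Assemble keyword arguments for a Messages API call.

    The persistent instructions go in `system`; the user's question stays
    in `messages`, free of prefixes.
    """
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": system,
        "messages": [{"role": "user", "content": user_text}],
    }


def send(request: dict[str, Any]) -> str:
    """Send the request using the `anthropic` package (pip install anthropic)."""
    import anthropic  # imported lazily so build_request works without the SDK

    client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment
    response = client.messages.create(**request)
    return response.content[0].text


PERSONA = (
    "You are a backend engineer with 12 years of experience who prefers "
    "explicit SQL over query builders. Be direct and skip filler phrases."
)

# Example usage (requires an API key and a valid model ID of your choosing):
# request = build_request(PERSONA, "Review my service architecture.", model="<model-id>")
# print(send(request))
```

Reusing the same `system` string for every request is what makes the behavior consistent across calls, which is the part a one-off prefix cannot give you.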

Written by Ice Gan — AI Tools Researcher and IT practitioner with 33 years of hands-on technology experience. All prompt examples in this article were independently tested. Community test data sourced from Reddit / r/PromptEngineering, amitkoth, and clskillshub.
