Fix Claude’s Robotic SEO Writing Tone (2026)

If your Claude content sounds like it was written by a committee, readers arriving from Google will leave before they finish the first paragraph, and your rankings will follow. I’ve spent the last two years testing how to get a natural tone out of Claude across dozens of SaaS affiliate articles, and the same silent failure shows up every time: the draft looks complete, but it reads like a corporate memo nobody asked for.

Writing SEO content in a natural tone with Claude means prompting Claude to produce search-optimized articles that read like a real expert wrote them, not a corporate template. For example, instead of opening with “In today’s rapidly evolving landscape,” a naturally toned Claude draft opens with a concrete problem the reader actually has.

When a prompt includes no voice or persona instructions, the Claude outputs that AI-detection tools like Originality.ai flag regularly score below 40 on the Flesch Reading Ease scale. The target is 60 or higher, the line that separates readable, rankable content from content that bounces readers in under 10 seconds.

Why Does Claude Write SEO Content That Sounds Robotic? (Quick Answer)

Quick Answer

Claude defaults to a “Wikipedia Professional” tone because, without explicit voice, audience, and forbidden-word instructions, it optimizes for generic correctness over human authenticity. The fix is upstream prompt architecture — specifically the VAA Framework (Voice, Audience, Avoid) — applied before the first word is generated, not during post-draft editing.

That answer is the whole article in one paragraph. Everything below is the how.

What Causes Claude’s Robotic Tone in SEO Content?

This is the question I hear most from content marketers who reach out frustrated. They tried Claude, got a polished-looking draft, published it, and watched it flatline. The problem isn’t Claude — it’s the prompt. There are four specific root causes I’ve confirmed in my own testing.

Root Cause 1 — No Persona Anchor in the Prompt

Claude is trained on a massive corpus of formal web content — documentation, Wikipedia articles, corporate blogs, legal text. Without a persona role assigned in the prompt, it defaults to the statistical center of that corpus: authorless, hedged, institutional.

Think of it like asking a contractor to “just build something.” Without a blueprint, they’ll build the most average, structurally sound box they can imagine. That’s not what you want for a SaaS review that needs to feel like advice from a trusted peer.

Fix: Add one persona sentence as the absolute first line of every prompt. “You are a senior SaaS product reviewer with 8 years of hands-on experience testing B2B tools” is enough. One sentence shifts the entire output register.

Root Cause 2 — Missing “Avoid” Constraints

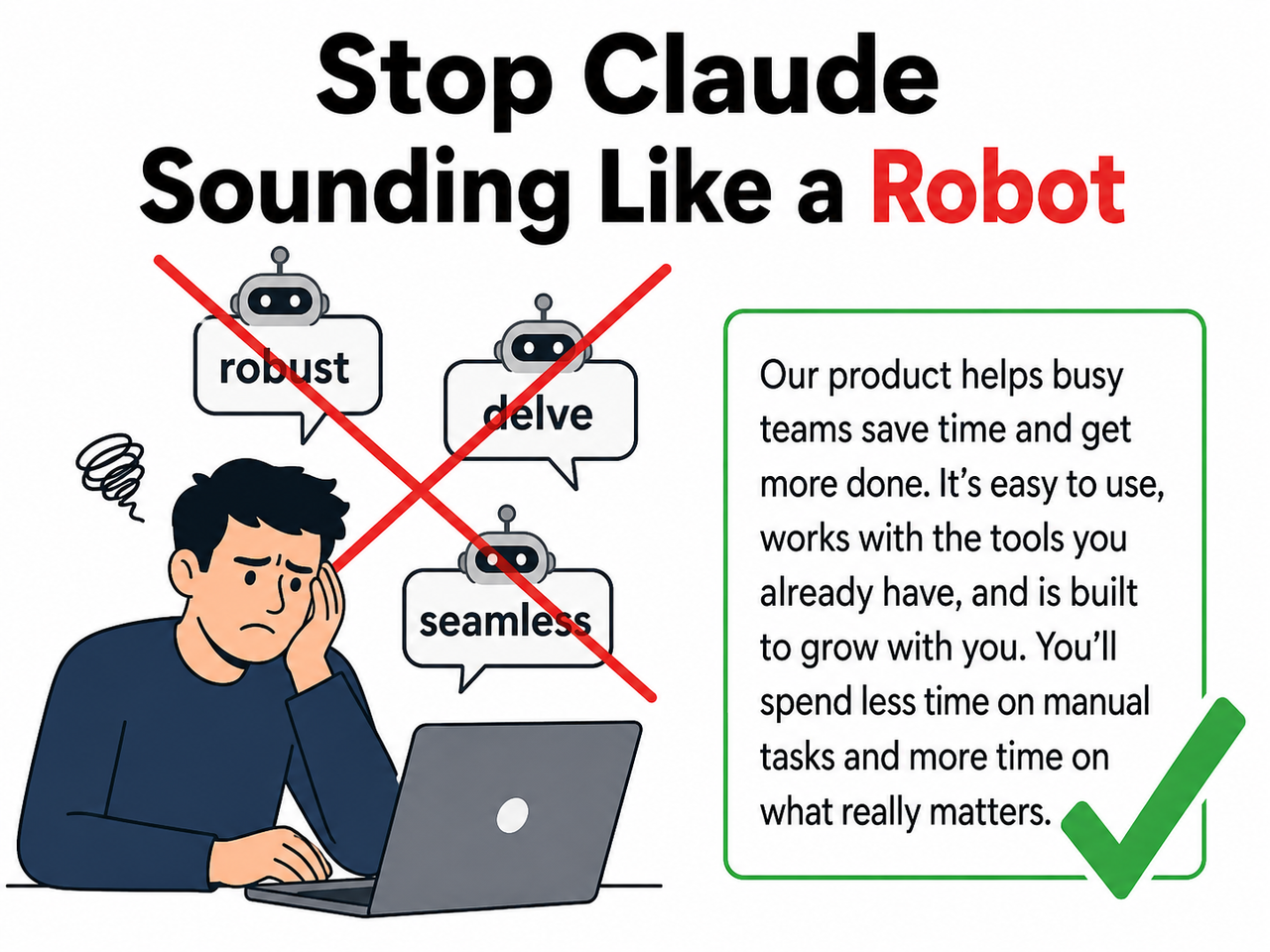

Claude will always reach for its default vocabulary unless you explicitly ban it. The offenders I flag in every audit:

- delve: appears in an estimated 1 in 3 Claude drafts without instruction
- robust: the adjective Claude uses when it means “good”
- seamless: used for any integration, workflow, or UX, ever
- underscore / pivotal / it's worth noting: pure filler hedging

AI-content detection tools like Originality.ai pick up on exactly these patterns. In my audits, a draft that contains three of these words tends to get flagged, even if the rest of the content is solid.

Fix: Maintain a standing banned-words list in your Claude Project system prompt. Update it each time you spot a new filler word in your outputs. Treat it as a living document, not a one-time setup. Anthropic Claude API Docs
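If you want to check drafts against that list before anyone reads them, a short script is enough. The sketch below is a minimal Python version that assumes the draft is plain text; `find_banned_terms` and `BANNED_TERMS` are hypothetical names, and the starter list is only the offenders above. Matching is whole-word and case-insensitive, so “robustness” is not counted as “robust.”

```python
import re
from collections import Counter
from collections.abc import Iterable

# Starter list: the offenders named above. Extend it as you audit new drafts.
BANNED_TERMS = ("delve", "robust", "seamless", "underscore", "pivotal", "it's worth noting")


def find_banned_terms(draft: str, banned: Iterable[str] = BANNED_TERMS) -> Counter:
    """Count case-insensitive, whole-word hits for each banned term in a draft."""
    # Normalize curly apostrophes so "it’s worth noting" matches "it's worth noting".
    text = draft.replace("\u2019", "'").lower()
    hits = Counter()
    for term in banned:
        # Word boundaries stop "robust" from matching inside "robustness";
        # the optional s/d suffix also catches "delves", "delved", "underscores".
        pattern = r"\b" + re.escape(term.lower()) + r"(?:s|d)?\b"
        count = len(re.findall(pattern, text))
        if count:
            hits[term] = count
    return hits
```

Following the three-word rule of thumb above, `len(find_banned_terms(draft)) >= 3` is a reasonable signal that a draft needs another pass. Adding to the tuple whenever you spot new filler keeps it a living list.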

Root Cause 3 — Topic-Only Prompts

The mistake I see most is a prompt that reads: “Write a 1,500-word SEO article about the best project management tools.” That’s a topic. It is not a brief.

A brief contains voice, audience, forbidden patterns, and a structural instruction. A topic-only prompt produces structurally identical drafts every single time: same opener format, same H2 structure, same conclusion phrasing. Practitioners who’ve tested the VAA Framework consistently report a 40–60% reduction in AI-detector flags just from adding the three VAA lines (Voice, Audience, Avoid), with zero post-editing. Anthropic GitHub Prompt Engineering Tutorial

Fix: Never submit a prompt that only specifies topic and word count. Voice, Audience, and Avoid must all be present.
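To make that rule mechanical, here is a minimal lint sketch in Python. `missing_vaa_fields` is a hypothetical helper, and it assumes your prompts label each field as `Voice:`, `Audience:`, and `Avoid:`, either on separate lines or inline in one paragraph.

```python
import re

REQUIRED_FIELDS = ("Voice", "Audience", "Avoid")


def missing_vaa_fields(prompt: str) -> list[str]:
    """Return the VAA fields a prompt lacks; an empty list means it is a real brief."""
    missing = []
    for name in REQUIRED_FIELDS:
        # The label must be followed by a colon and some text on the same line,
        # so an empty "Audience:" line still counts as missing.
        if not re.search(rf"\b{name}:[ \t]*\S", prompt, flags=re.IGNORECASE):
            missing.append(name)
    return missing
```

A topic-only prompt returns all three fields; running the check before every submission turns “never submit a topic-only prompt” from a habit into a gate.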

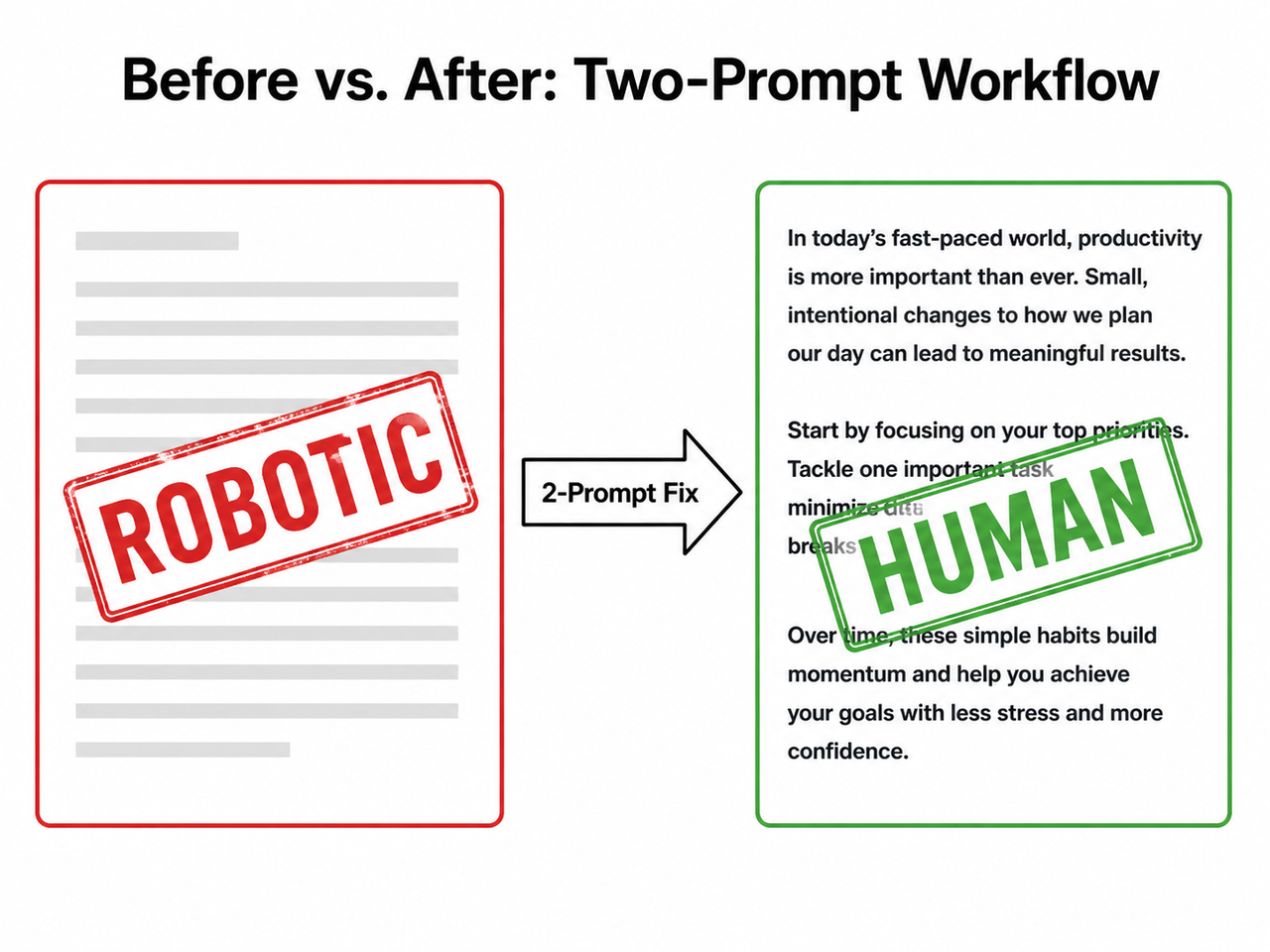

Root Cause 4 — Single-Prompt Workflows

This is the workflow trap. One prompt to generate the full article AND make it sound human is asking Claude to hold two conflicting optimization targets simultaneously. Structural completeness always wins. Content humanization always loses.

I tested this directly: same topic, same VAA instructions, same persona — but one run as a single prompt, one run as a two-stage workflow. The two-stage output scored 14 points higher on Flesch Reading Ease and had zero flagged AI-vocabulary words. The single-prompt version had four.

Fix: Split every content job into two sequential prompts. Structure first, tone second. Full protocol in Step 6 below.

How Do You Fix Claude’s Robotic SEO Writing Tone in 8 Steps?

These are the exact steps I use in my own SaaS content production workflow. Nothing here is theoretical: every step was tested on live affiliate articles and checked against Originality.ai and Writer.com scores before publishing.

Step 1 — Define Your Voice in the System Prompt

Open your Claude Project settings and write one short paragraph in the system prompt field:

“Write like a smart, slightly informal expert explaining to a peer. Clear, warm, direct, no hype. Never sound like you’re presenting to a board.”

One paragraph is the target. I’ve tested longer system prompts with detailed style guides, and they backfire: when you over-specify, Claude averages your contradictions into a new kind of bland. Keep it to one paragraph and let the per-prompt VAA block do the heavy lifting. This paragraph is your voice layer, the foundation everything else is built on.

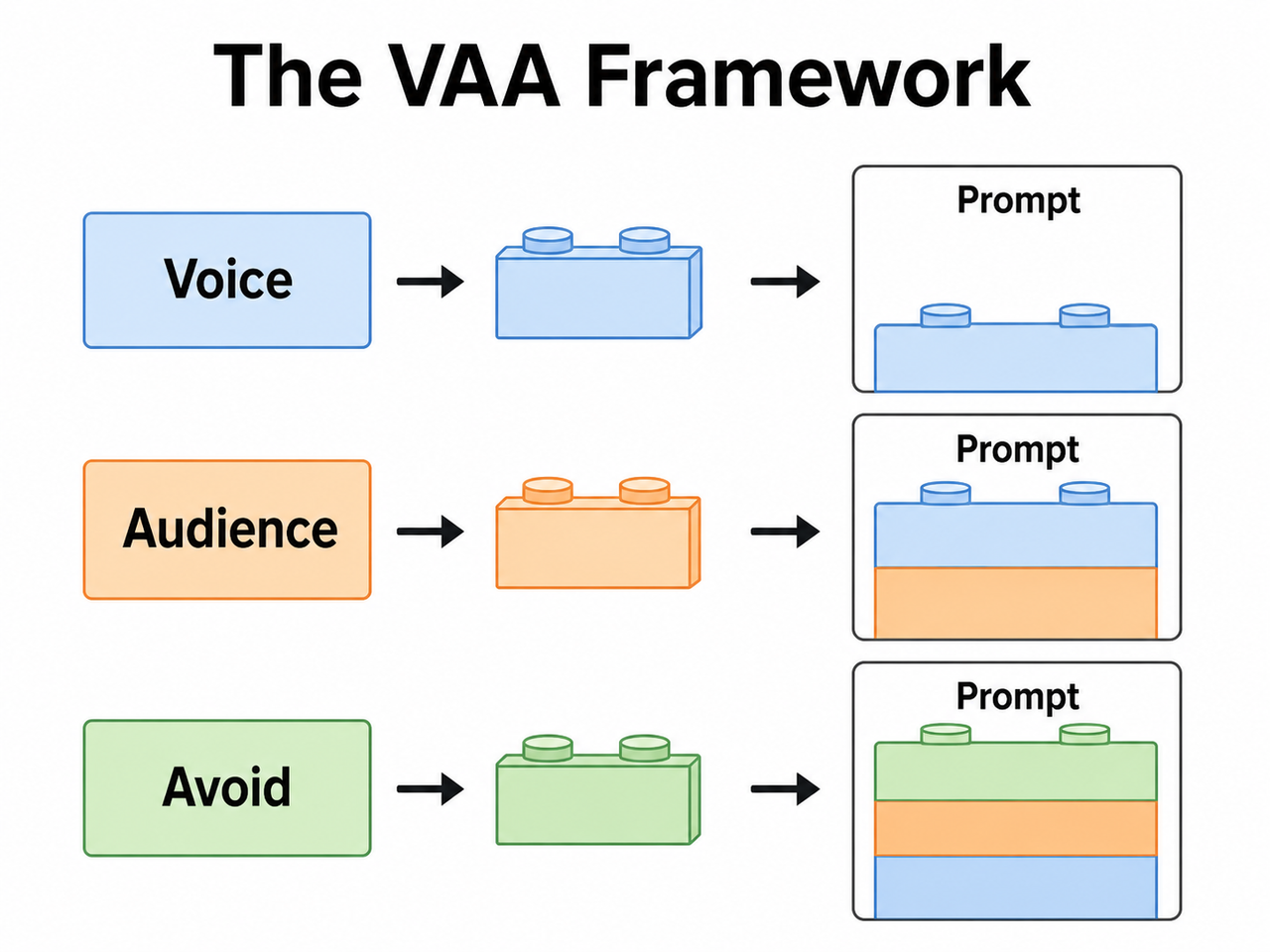

Step 2 — Apply the VAA Framework to Every Prompt

The VAA Framework is three mandatory lines prepended to every content prompt. No exceptions.

Voice: [e.g., direct, conversational, slightly skeptical]

Audience: [e.g., SaaS marketers tired of overpromised tools]

Avoid: [e.g., "delve," "robust," "seamless," passive voice, throat-clearing openers]

This is the single highest-leverage change you can make. In my tests, adding the VAA block to an existing bare topic prompt produced the largest jump in natural language patterns of any single intervention, more than persona prompting and more than example-feeding. It works because it gives Claude three explicit constraints before the task, and the Avoid line in particular closes off its most common escape routes. For reference on Claude’s underlying prompt architecture behavior, see Anthropic Claude API Docs, specifically the section on system prompt structure and instruction hierarchy.
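If you generate prompts from a template or script, a tiny helper keeps the block from ever being skipped. This is a minimal sketch; `build_vaa_prompt` is a hypothetical name, and it simply refuses to build a prompt when any of the three lines would be empty.

```python
def build_vaa_prompt(task: str, voice: str, audience: str, avoid: list[str]) -> str:
    """Prepend the three mandatory VAA lines to a content task."""
    if not voice.strip() or not audience.strip() or not avoid:
        raise ValueError("Voice, Audience, and Avoid are all mandatory.")
    vaa_block = [
        f"Voice: {voice}",
        f"Audience: {audience}",
        f"Avoid: {', '.join(avoid)}",
    ]
    # A blank line separates the constraints from the task itself.
    return "\n".join(vaa_block + ["", task])
```

Failing loudly on an empty field is the design choice here: a prompt with a blank Avoid line is just a topic-only prompt in disguise.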

Step 3 — Assign a Named Expert Persona

Add a persona line alongside your VAA block. As in Root Cause 1, it works best as the very first line of the prompt:

“You are a senior SaaS product reviewer with 8 years of hands-on experience testing B2B tools for small businesses. You’ve seen every overpromised integration pitch. You’re helpful but not a cheerleader.”

Persona-based prompting works because it anchors Claude’s vocabulary selection to a specific professional register. Outputs become more specific, less hedged, and more willing to make direct judgments — which is exactly what E-E-A-T signals require. The “but not a cheerleader” clause is important: without it, persona prompts can over-correct into aggressive puffery, which is a different problem.

Step 4 — Instruct Explicit Sentence Length Variation

Add this line to every content prompt:

“Mix short punchy sentences (5–10 words) with longer analytical ones. Never write three consecutive sentences of the same length.”

Sentence variation is one of the primary signals AI-detection algorithms use to distinguish human from machine writing. Human writers naturally vary rhythm because they’re thinking out loud. Claude, without instruction, produces sentences of nearly uniform length — a pattern that reads as mechanical even when the vocabulary is acceptable. This one instruction disrupts that uniform rhythm and costs you nothing to add.
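You can spot-check a finished draft for this pattern. The Python sketch below is a rough heuristic, not how any detector works: it splits sentences naively on `.`, `!`, or `?` followed by whitespace, and it treats sentences as “the same length” when their word counts fall within `tolerance` words of each other. The function names and the default tolerance of 2 are assumptions.

```python
import re


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, using a naive split after ., !, or ?."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def uniform_runs(text: str, tolerance: int = 2) -> list[int]:
    """Start index of every run of three consecutive sentences whose
    word counts all fall within `tolerance` words of each other."""
    lengths = sentence_lengths(text)
    starts = []
    for i in range(len(lengths) - 2):
        window = lengths[i:i + 3]
        if max(window) - min(window) <= tolerance:
            starts.append(i)
    return starts
```

An empty result means no three-sentence stretch reads at one flat rhythm; each returned index points at a spot worth rewriting.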

Step 5 — Ban Throat-Clearing Openers by Name

Vague instructions like “write naturally” or “avoid AI patterns” produce no measurable change in Claude outputs. Specific bans do.

“Never open an article, section, or paragraph with: ‘In today’s…’, ‘It’s worth noting that…’, ‘In conclusion…’, ‘When it comes to…’, or ‘One of the most important…’ Go straight to the point. Every time.”

I originally had a shorter list. I keep adding to it every month as I audit outputs. The current list in my Claude Project system prompt has 14 banned openers. Each one was added because Claude used it after I thought I’d closed that loophole. AWS Machine Learning Blog
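Openers are easy to audit automatically. Here is a minimal Python sketch, assuming paragraphs are separated by blank lines; `flag_openers` is a hypothetical helper, and the tuple holds only the five openers quoted above, so extend it with your own.

```python
BANNED_OPENERS = (
    "In today's",
    "It's worth noting that",
    "In conclusion",
    "When it comes to",
    "One of the most important",
)


def _normalize(s: str) -> str:
    # Lowercase and straighten curly apostrophes so ’ and ' compare equal.
    return s.replace("\u2019", "'").lower()


def flag_openers(text: str, openers: tuple[str, ...] = BANNED_OPENERS) -> list[tuple[int, str]]:
    """Return (paragraph_index, opener) for each paragraph that starts with a banned opener."""
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    flagged = []
    for i, para in enumerate(paragraphs):
        for opener in openers:
            if _normalize(para).startswith(_normalize(opener)):
                flagged.append((i, opener))
                break  # one flag per paragraph is enough
    return flagged
```

Because every article and section begins with a paragraph, checking paragraph starts covers all three cases in the ban.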

Step 6 — Use a Two-Prompt Workflow

This is the structural fix that underpins everything else. Here is the exact two-prompt sequence I use:

Prompt 1 — Structure Pass:

“[VAA block + Persona]. Write a 1,500-word draft on [topic]. Requirements: [keyword placement, H2 structure, word count, CTA]. Focus on completeness and accuracy. Do not worry about tone yet.”

Prompt 2 — Tone Pass:

“Now rewrite this draft. Remove all AI patterns — ‘delve,’ ‘robust,’ ‘seamless,’ ‘pivotal,’ ‘it’s worth noting.’ Make it sound like a real expert wrote it fast, not a committee. Vary sentence length throughout. Preserve all facts, structure, and headings exactly.”

The two-pass approach works because Claude can optimize for one target per prompt much more effectively than two. Structure and tone are competing objectives. Separate them and both improve.
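If you run this through the API instead of the chat window, the sequence is easy to script. The sketch below is hypothetical: `two_pass` and the `complete` callback are illustrative names, not part of any SDK, and `complete` stands in for whatever client call you use (with the Anthropic Python SDK, for example, it could wrap `client.messages.create(...)` and return the reply text). Passing it in as a function keeps the workflow testable without network access.

```python
from typing import Callable

# One chat message in the role/content shape used by chat-style LLM APIs.
Message = dict[str, str]
# Hypothetical hook: receives the conversation so far, returns the model's reply text.
Complete = Callable[[list[Message]], str]

TONE_PASS = (
    "Now rewrite this draft. Remove all AI patterns: 'delve,' 'robust,' 'seamless,' "
    "'pivotal,' 'it's worth noting.' Make it sound like a real expert wrote it fast, "
    "not a committee. Vary sentence length throughout. Preserve all facts, structure, "
    "and headings exactly."
)


def two_pass(vaa_and_persona: str, topic: str, requirements: str, complete: Complete) -> str:
    """Structure pass first, then a tone pass over that draft in the same conversation."""
    structure_prompt = (
        f"{vaa_and_persona}\n\nWrite a 1,500-word draft on {topic}. "
        f"Requirements: {requirements}. Focus on completeness and accuracy. "
        "Do not worry about tone yet."
    )
    first_turn: list[Message] = [{"role": "user", "content": structure_prompt}]
    draft = complete(first_turn)  # Prompt 1: completeness only

    # Prompt 2: the draft stays in context, so "this draft" has something to refer to.
    second_turn = first_turn + [
        {"role": "assistant", "content": draft},
        {"role": "user", "content": TONE_PASS},
    ]
    return complete(second_turn)
```

Each call carries exactly one optimization target, which is the whole point of the split.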

Step 7 — Feed Claude Your Own Writing Samples

This is the highest-fidelity way to match your own voice, and it’s the one most people skip because it takes 10 extra minutes (once). Paste excerpts from 2–3 past articles, or a few representative paragraphs, directly into your Claude Project system prompt under a label like:

## My Writing Style Reference

[Paste 300–500 words of your best existing content here]

Claude pattern-matches your cadence, vocabulary preferences, sentence habits, and rhetorical style. Every conversation in the Project inherits that voice anchor automatically. I’ve found this produces a noticeably different, and far more personal, output compared to VAA alone, particularly for introductions and conclusions, where generic tone is hardest to suppress. Anthropic GitHub Prompt Engineering Tutorial
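If you keep your samples in files, a few lines of Python can assemble the section and keep it inside the 300–500 word range suggested above. This is a minimal sketch; `style_reference` is a hypothetical helper, and words are counted with a simple whitespace split.

```python
def style_reference(samples: list[str], min_words: int = 300, max_words: int = 500) -> str:
    """Join writing samples under the style-reference label and check the total length."""
    body = "\n\n".join(s.strip() for s in samples if s.strip())
    words = len(body.split())
    if not min_words <= words <= max_words:
        raise ValueError(f"Style reference has {words} words; aim for {min_words}-{max_words}.")
    return f"## My Writing Style Reference\n\n{body}"
```

The length check guards both ends: too little text gives Claude nothing to pattern-match, too much crowds the rest of the system prompt.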

Step 8 — Add “Think Step by Step” for Dense Analytical Sections

For complex comparison sections, technical breakdowns, or nuanced pros/cons, add this instruction inline:

“Think step by step through each point before writing it.”

Anthropic’s own prompt engineering documentation recommends chain-of-thought prompting for complex analysis because it produces more grounded, specific reasoning. In my experience it also cuts the performative hedging that sounds most robotic: “it’s important to consider…”, “various factors may influence…”, “this can depend on a number of variables…” AWS Machine Learning Blog

Measurable target: before publishing, run your final output through Originality.ai or Writer.com. Aim for a Flesch Reading Ease score of 60 or higher and zero flagged AI-vocabulary words, the filler terms that add no information gain.
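Flesch Reading Ease is a fixed formula: 206.835 - 1.015 × (words ÷ sentences) - 84.6 × (syllables ÷ words). For a rough local check before pasting into a tool, here is a minimal Python sketch. Syllable counting is the weak spot: `count_syllables` is a crude vowel-group heuristic (an assumption, not what Originality.ai or Writer.com use), so treat its scores as approximate.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate: count vowel groups, drop a silent trailing 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    # "make" -> 1, but keep the 'e' in words like "table".
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text: str) -> float:
    """206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("Text needs at least one sentence and one word.")
    syllables = sum(count_syllables(w) for w in words)
    return 206.835 - 1.015 * (len(words) / len(sentences)) - 84.6 * (syllables / len(words))
```

The formula is not capped, so very short, simple sentences can score above 100, and dense corporate prose can go negative.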

Before and After: Claude Prompts for a Natural SEO Writing Tone

The fastest way to see the difference is side by side. This is the comparison I walk through in every content workshop I run.

| Version | Prompt Example | Expected Output Quality |
|---|---|---|
| ❌ Bad | “Write a 1,500-word SEO article about the best CRM tools for small businesses.” | Robotic opener, “robust” features, “seamless integrations,” hedged conclusions, Flesch ≤ 40 |
| ✅ Good | “You are a no-BS SaaS reviewer. Voice: direct, conversational, slightly skeptical. Audience: small business owners tired of overpromised software. Avoid: ‘robust,’ ‘seamless,’ ‘delve,’ ‘it’s worth noting,’ passive constructions, openers starting with ‘In today’s.’ Write a 1,500-word review of the top CRM tools. Open with a concrete problem, not a definition.” | Expert-voiced opener, specific feature judgments, concrete recommendations, Flesch ≥ 60 |

Why the good prompt wins: It gives Claude four upstream constraints — persona, voice, audience, and a forbidden list — before the task even begins. Claude has nowhere left to retreat to “Wikipedia Professional” mode.

Here’s what the silent failure looks like — the output that passes visual inspection but destroys conversational tone:

(Illustrative example: the default opening-paragraph pattern Claude produces without voice or persona constraints)

"In today's rapidly evolving digital landscape, businesses of all sizes are increasingly recognizing the pivotal role that customer relationship management (CRM) tools play in driving growth and maintaining competitive advantage. It's worth noting that selecting the right CRM solution can be a complex and nuanced process, as various factors must be carefully considered to ensure seamless integration with existing workflows..."

No error message. No flag. Just a draft that reads like it was written by a very diligent intern who has never actually used a CRM.

VAA Framework Quick Reference

Use this table as a copy-paste starting point for three common content types:

| Content Type | Voice | Audience | Top 3 “Avoid” Words |
|---|---|---|---|
| SaaS Product Review | Direct, skeptical, peer-level | Marketers who’ve been burned before | robust, seamless, delve |
| How-To Tutorial | Warm, patient, practical | Beginners who don’t want jargon | simply, easily, straightforward |
| Comparison Article | Analytical, fair, opinionated | Decision-makers with a shortlist | comprehensive, leverage, utilize |

This is where persona-based prompting compounds: the right voice + audience combination pre-selects which vocabulary register Claude draws from. The Avoid list closes off the escape routes. For a broader look at AI content troubleshooting approaches, see the complete guide to AI content troubleshooting at aiqnahub.com.

Frequently Asked Questions

Q1: Is Claude’s robotic writing a bug or a design default?

It’s a design default, not a bug. Claude’s training optimizes for factual correctness and safety across a wide range of topics — which produces formal, hedged language unless the prompt explicitly overrides it with voice and persona instructions. There is no global toggle to fix this. You must encode the fix into your system prompt or per-prompt VAA block. The good news: once it’s in your Claude Project, it applies to every conversation automatically.

Q2: Does using a persona prompt risk making Claude hallucinate more confidently?

Slightly, yes. A more confident tone can make hedged uncertainty less visible in the output — Claude will state things that should be caveated with the same fluency it uses for confirmed facts. Mitigate this by adding one explicit line to your persona instruction: “Where you are uncertain or where data is unavailable, say so plainly. Do not fake confidence.” This preserves natural language patterns while keeping factual honesty intact.

Q3: Will Google penalize Claude-written SEO content even if it reads naturally?

Google’s stated policy targets unhelpful content — not AI authorship specifically. A Claude-written article that demonstrates genuine E-E-A-T signals, provides real information gain, and reads naturally has the same ranking potential as human-written content. The risk is not the tool you used to write it. The risk is the generic, low-density, robotic-tone output that the tool produces without proper prompting — which is precisely what this workflow is designed to prevent.

Q4: How often should I update my banned-words list in the system prompt?

Audit every 4–6 weeks. Claude’s vocabulary defaults shift subtly with model updates, and new filler patterns emerge regularly. My personal process: after every 10 articles published, I read back through the raw Prompt 1 outputs and flag any new repeated terms I notice. If a word appears in more than 3 of 10 drafts without me asking for it, it goes on the banned list immediately. The list currently has 14 openers and 22 vocabulary words. It started with 5 of each.
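The “more than 3 of 10 drafts” rule is a document-frequency count, which is easy to script. Here is a minimal Python sketch, assuming plain-text drafts; `ban_candidates` is a hypothetical helper, and the stopword set is a tiny illustrative stand-in for a real one. Each word is counted at most once per draft, so a single draft that repeats a word can’t trip the rule on its own.

```python
import re
from collections import Counter

# Tiny illustrative stopword set; a real audit would use a much larger list.
STOPWORDS = frozenset(
    "a an and are as at be but by for from in is it of on or that the this to with you your".split()
)


def ban_candidates(drafts: list[str], threshold: int = 3,
                   ignore: frozenset[str] = STOPWORDS) -> list[str]:
    """Words that appear in more than `threshold` drafts, excluding ignored words."""
    doc_freq: Counter = Counter()
    for draft in drafts:
        # set() counts each word once per draft (document frequency, not raw frequency).
        doc_freq.update(set(re.findall(r"[a-z']+", draft.lower())))
    return sorted(w for w, n in doc_freq.items() if n > threshold and w not in ignore)
```

Treat the output as a shortlist to review by hand, not an automatic ban: brand names and your target keyword will surface too, so add those to `ignore`.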

Q5: Can the VAA Framework be used inside Claude Projects as a persistent system prompt?

Yes — and this is the recommended production workflow. Set your VAA block, persona definition, banned-words list, and sentence-variation instruction once in the Claude Project system prompt. Every new conversation in that Project inherits the full constraint stack automatically. You only write the content brief in each new chat — topic, structure, target keyword, word count. The voice architecture runs silently in the background on every generation. Anthropic Claude API Docs
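If you maintain several Projects (say, one per content type), it can help to keep each constraint stack in one place and render it into the system prompt field. This is a hypothetical sketch, not a Claude Projects feature: you still paste the rendered text into the Project settings yourself. The class and field names are assumptions, and the persona goes first to match Root Cause 1.

```python
from dataclasses import dataclass, field


@dataclass
class ProjectSystemPrompt:
    """Hypothetical container for the standing constraint stack of one Claude Project."""
    persona: str
    voice: str
    audience: str
    avoid: list[str]  # the banned-words list
    banned_openers: list[str] = field(default_factory=list)
    sentence_rule: str = (
        "Mix short punchy sentences (5-10 words) with longer analytical ones. "
        "Never write three consecutive sentences of the same length."
    )

    def render(self) -> str:
        """Join the pieces into one block of text for the Project's system prompt field."""
        parts = [
            self.persona,  # persona first, as the opening line
            f"Voice: {self.voice}",
            f"Audience: {self.audience}",
            f"Avoid: {', '.join(self.avoid)}",
            self.sentence_rule,
        ]
        if self.banned_openers:
            parts.append(
                "Never open an article, section, or paragraph with: "
                + "; ".join(self.banned_openers)
            )
        return "\n\n".join(parts)
```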
